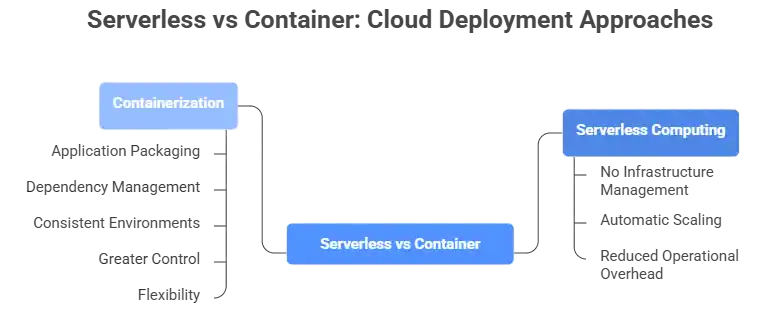

When building a modern cloud application, one of the most debated architectural decisions you’ll face is serverless vs. containers. Both approaches have reshaped the DevOps and cloud computing landscape, but they serve different needs, different teams, and different workloads. Whether you’re a startup scaling your first product or an enterprise refactoring legacy systems, understanding the serverless vs. container debate is essential to making the right infrastructure choice.

In this complete comparison guide, we’ll break down everything from architecture and performance to cost, scalability, security, and real-world use cases so you can confidently decide which path fits your next project.

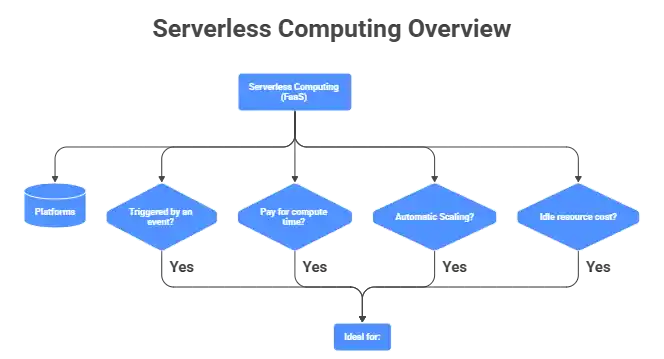

What Is Serverless Computing?

Serverless computing, most commonly delivered as Function as a Service (FaaS), lets developers run code without provisioning or managing the underlying servers. Platforms like AWS Lambda, Google Cloud Functions, and Azure Functions handle provisioning, scaling, and patching automatically.

In a serverless model:

- Code runs only when triggered by an event

- You pay only for the compute time consumed

- Scaling happens automatically with zero manual configuration

- No idle resource cost: if your function isn’t running, you aren’t billed

Serverless is ideal for event-driven architecture, REST APIs, data processing pipelines, and lightweight backend tasks where speed of deployment matters most.
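To illustrate the event-driven model, here is a minimal Python function in the AWS Lambda handler style. It assumes an API Gateway proxy integration, which passes query parameters in `event["queryStringParameters"]` (or `None` when there are none) and expects a response with `statusCode` and `body`. The greeting logic is a placeholder.

```python
import json


def handler(event, context):
    """Entry point the platform calls once per event; nothing runs between events."""
    # API Gateway sends None (not {}) when the request has no query string.
    params = event.get("queryStringParameters") or {}
    name = params.get("name", "world")
    # Proxy integrations expect a dict with a status code and a string body.
    return {
        "statusCode": 200,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps({"message": f"Hello, {name}!"}),
    }


if __name__ == "__main__":
    # Local smoke test with a hand-built event; no cloud account needed.
    print(handler({"queryStringParameters": {"name": "Ada"}}, None))
```

Because the handler is just a function, calling it with hand-built events is also a cheap way to unit-test it before deploying.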

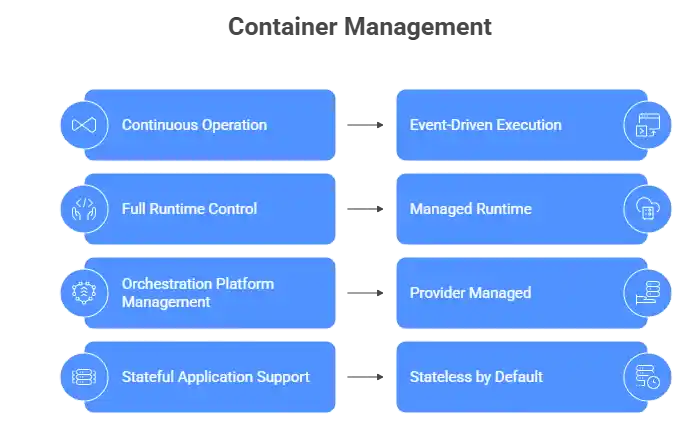

What Are Containers?

A container is a lightweight, portable unit that packages your application code along with its dependencies, runtime, and configuration. Tools like Docker, Kubernetes, and Podman are the backbone of the container ecosystem.

Unlike serverless functions, containers:

- Run continuously until explicitly stopped

- Give developers full control over the runtime environment

- Are managed via container orchestration platforms like Kubernetes

- Support stateful applications that require persistent memory or storage

Containers power microservices, enterprise-grade applications, and workloads that require consistent, long-running environments.
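Because a container runs until it is stopped, its main process should shut down cleanly when asked. `docker stop` and Kubernetes both send SIGTERM first and escalate to SIGKILL after a grace period. The sketch below shows one way a long-running Python worker might honor that signal; the `Worker` class and its loop are hypothetical placeholders for real work.

```python
import signal
import threading
from typing import Optional


class Worker:
    """Hypothetical long-running worker that stops cleanly when asked."""

    def __init__(self, poll_seconds: float = 1.0) -> None:
        self.poll_seconds = poll_seconds
        self._stop = threading.Event()
        self.iterations = 0

    def request_stop(self, signum=None, frame=None) -> None:
        # Matches the (signum, frame) signature Python passes to signal handlers.
        self._stop.set()

    def run(self, max_iterations: Optional[int] = None) -> int:
        while not self._stop.is_set():
            self.iterations += 1  # placeholder for real work: serve, consume a queue, ...
            if max_iterations is not None and self.iterations >= max_iterations:
                break
            # Sleep between iterations, but wake at once if a stop is requested.
            self._stop.wait(self.poll_seconds)
        # Flush buffers and close connections here before the process exits.
        return self.iterations


def main() -> None:
    worker = Worker()
    # `docker stop` and pod deletion send SIGTERM first; turn it into a clean exit.
    signal.signal(signal.SIGTERM, worker.request_stop)
    worker.run()
```

A container entrypoint would call `main()`. Docker waits 10 seconds by default and Kubernetes 30 seconds before escalating to SIGKILL, so cleanup should fit inside that window.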

Serverless vs Container: Core Architecture Differences

Understanding the architectural differences is the foundation of the serverless vs. container comparison.

| Feature | Serverless | Containers |
| --- | --- | --- |
| Infrastructure Management | Fully managed by a cloud provider | Developer-managed (via Kubernetes, etc.) |
| Scaling | Automatic, instant | Manual or orchestrated |
| Startup Time | Milliseconds (with cold start risk) | Seconds (consistent) |
| State Management | Stateless by design | Supports stateful workloads |
| Execution Model | Event-driven | Continuous process |
| Runtime Control | Limited | Full control |

When examining the serverless vs container debate at the architecture level, the biggest distinction is control. Serverless abstracts infrastructure entirely, while containers give you ownership of the runtime environment.

Serverless vs Container: Performance & Scalability

Performance is one of the most critical dimensions in any serverless vs container evaluation.

Serverless Performance

Serverless functions excel at autoscaling: they can go from zero to thousands of concurrent executions within seconds, subject to the platform's concurrency limits. The major trade-off is the cold start problem: when no warm instance is available, because the function hasn't been invoked recently or traffic has just spiked, the cloud provider must initialize a new one, introducing latency (typically 100ms–2s depending on the runtime).

For latency-sensitive applications, cold starts are a real concern. Solutions include keeping functions “warm” with scheduled pings or choosing runtimes with faster initialization, like Node.js or Python.
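A minimal sketch of the "keep warm" pattern, assuming an AWS EventBridge scheduled rule invokes the function every few minutes. Scheduled events arrive with `"source": "aws.events"` and `"detail-type": "Scheduled Event"`, so the handler can return early on them without running business logic. `do_real_work` is a hypothetical stand-in. Note that one ping keeps roughly one execution environment warm; it does not pre-warm capacity for a traffic spike.

```python
import json


def is_warmup_event(event: dict) -> bool:
    """True for EventBridge scheduled events used purely as keep-warm pings."""
    return (
        event.get("source") == "aws.events"
        and event.get("detail-type") == "Scheduled Event"
    )


def do_real_work(event: dict) -> dict:
    # Hypothetical business logic for genuine requests.
    return {"statusCode": 200, "body": json.dumps({"ok": True})}


def handler(event, context):
    if is_warmup_event(event):
        # Skip business logic: this invocation only exists to keep the environment alive.
        return {"warmed": True}
    return do_real_work(event)
```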

Container Performance

Containers avoid cold starts because they run continuously. Once a container is up, it handles requests immediately with predictable latency, although new replicas still take seconds to start when the service scales out. For high-throughput, latency-sensitive workloads, containers often have the edge.

In the serverless vs container performance battle, containers win for sustained, predictable traffic, while serverless wins for bursty, unpredictable workloads.

Serverless vs Container: Cost Comparison

Cost is often the deciding factor in the serverless vs. container decision.

Serverless Pricing Model

Serverless uses a pay-per-use billing model: you pay only when your function runs. AWS Lambda, for example, charges per request (priced per 1 million requests) and per GB-second of execution time. For low-traffic or irregular workloads, this typically produces a much smaller bill, because there is no charge for idle time.
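The two-part pricing formula can be written out as a small calculator. The rates below are placeholders close to AWS's long-published x86 list prices for Lambda ($0.20 per million requests and about $0.0000166667 per GB-second); real rates vary by region and architecture, and the free tier is ignored, so check the current pricing page before relying on the output.

```python
# Illustrative rates (USD); verify against the provider's current pricing page.
PRICE_PER_MILLION_REQUESTS = 0.20
PRICE_PER_GB_SECOND = 0.0000166667


def lambda_monthly_cost(requests: int, avg_duration_ms: float, memory_mb: int) -> float:
    """Estimate a month's serverless bill from traffic and function size (free tier ignored)."""
    request_cost = requests / 1_000_000 * PRICE_PER_MILLION_REQUESTS
    # GB-seconds = invocations x duration in seconds x memory in GB.
    gb_seconds = requests * (avg_duration_ms / 1000) * (memory_mb / 1024)
    compute_cost = gb_seconds * PRICE_PER_GB_SECOND
    return request_cost + compute_cost
```

With these assumptions, 1 million requests a month at 100 ms and 128 MB comes to roughly $0.41, and zero requests cost exactly nothing, which is the "no idle cost" property in numbers.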

Container Hosting Cost

Containers run on virtual machines or managed services like AWS ECS, Google Kubernetes Engine (GKE), or Azure Kubernetes Service (AKS). You pay for the provisioned compute whether your app is serving requests or sitting idle. For consistently high-traffic applications, this cost is justified by the performance and control gained.

The serverless vs container cost comparison ultimately depends on traffic patterns:

- Low or sporadic traffic → Serverless wins

- High, consistent traffic → Containers may be more cost-effective
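To see where the crossover sits, one can solve for the monthly request volume at which a pay-per-use bill equals a flat container bill. This sketch assumes a hypothetical always-on container costing $30 a month and reuses illustrative Lambda-style rates (placeholders, not quotes). It ignores free tiers, data transfer, and the extra replicas a container fleet would need at high load.

```python
import math

PRICE_PER_MILLION_REQUESTS = 0.20   # USD, illustrative
PRICE_PER_GB_SECOND = 0.0000166667  # USD, illustrative


def cost_per_request(avg_duration_ms: float, memory_mb: int) -> float:
    """Marginal serverless cost of one invocation: request fee plus compute time."""
    request_fee = PRICE_PER_MILLION_REQUESTS / 1_000_000
    compute_fee = (avg_duration_ms / 1000) * (memory_mb / 1024) * PRICE_PER_GB_SECOND
    return request_fee + compute_fee


def break_even_requests(container_monthly_usd: float, avg_duration_ms: float, memory_mb: int) -> int:
    """Monthly requests above which the flat-rate container becomes the cheaper option."""
    return math.ceil(container_monthly_usd / cost_per_request(avg_duration_ms, memory_mb))
```

Under these assumptions, a 100 ms, 128 MB function breaks even at roughly 73 million requests a month, while a 1-second, 1 GB function crosses over below 2 million. Heavier functions reach the container's flat rate much sooner.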

Serverless vs Container: Scalability

Scalability is where the serverless vs container gap becomes most apparent.

Serverless platforms handle scaling automatically at the platform level, with no scaling configuration needed. If your application suddenly receives 10,000 concurrent requests, the platform adds instances to match (within your account's concurrency quota, which may need raising), then scales back to zero when traffic drops.

Containers scale via container orchestration tools like Kubernetes, which require configuration of replicas, autoscalers, and resource limits. This offers more fine-grained control but requires deeper DevOps expertise.
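The replica count that Kubernetes' Horizontal Pod Autoscaler aims for comes from a documented ratio: desiredReplicas = ceil(currentReplicas × currentMetricValue / desiredMetricValue). The Python sketch below reproduces that arithmetic, plus clamping to the configured minimum and maximum and the controller's default 10% tolerance band, to show the kind of knobs a container team tunes. It is a simplified model, not the controller itself; it ignores stabilization windows and pod readiness.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                     min_replicas: int = 1, max_replicas: int = 10,
                     tolerance: float = 0.1) -> int:
    """Simplified HPA calculation: scale replicas in proportion to metric / target."""
    ratio = current_metric / target_metric
    # Inside the tolerance band the autoscaler leaves the replica count alone.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    proposed = math.ceil(current_replicas * ratio)
    # Respect the minReplicas / maxReplicas bounds from the HPA spec.
    return max(min_replicas, min(max_replicas, proposed))
```

For example, 4 replicas averaging 90% CPU against a 60% target scale to 6, while 63% stays at 4 because it falls inside the tolerance band.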

For teams that want scalability without infrastructure complexity, the serverless vs container answer leans toward serverless. For teams needing custom scaling logic and workload control, containers are the better fit.

Serverless vs Container: Security

Security is a nuanced area of the serverless vs container comparison.

Serverless Security

In serverless, the cloud provider manages OS patches, runtime updates, and infrastructure hardening, reducing the attack surface significantly. However, developers must secure their functions at the code level, manage IAM permissions carefully, and protect against injection attacks and over-privileged functions.
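One concrete way to catch over-privileged functions is to flag wildcard grants before deployment. The checker below is a hypothetical helper, not an AWS tool. It assumes policies in the standard IAM JSON shape (a `Statement` list whose `Action` and `Resource` may each be a string or a list) and only looks for `*` wildcards in Allow statements; a dedicated analyzer such as IAM Access Analyzer covers far more cases.

```python
def _as_list(value):
    # IAM allows either a single string or a list of strings for these fields.
    return [value] if isinstance(value, str) else list(value or [])


def find_wildcards(policy: dict) -> list:
    """Return human-readable warnings for Allow statements that use '*' wildcards."""
    warnings = []
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):  # a single statement may appear without a list
        statements = [statements]
    for i, stmt in enumerate(statements):
        if stmt.get("Effect") != "Allow":
            continue
        for action in _as_list(stmt.get("Action")):
            if action == "*" or action.endswith(":*"):
                warnings.append(f"statement {i}: broad action {action!r}")
        for resource in _as_list(stmt.get("Resource")):
            if resource == "*":
                warnings.append(f"statement {i}: applies to all resources")
    return warnings
```

A function that only reads one table should pass with no warnings; `"Action": "dynamodb:*"` on `"Resource": "*"` produces two.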

Container Security

Container security requires managing container images, scanning for vulnerabilities, securing the Kubernetes cluster, and controlling network policies. Tools like Trivy, Falco, and OPA (Open Policy Agent) help enforce security best practices. The container registry must also be hardened against image tampering.
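Alongside registry hardening, a common habit is to deploy images by immutable digest rather than a mutable tag such as `latest`, so a re-pushed tag cannot silently change what runs. A small, hypothetical pre-deploy check might look like this; it only parses reference strings and does not contact a registry or verify signatures.

```python
import re
from typing import Optional

# A digest-pinned reference ends in @sha256: followed by 64 lowercase hex characters.
DIGEST_RE = re.compile(r"@sha256:[0-9a-f]{64}$")


def image_warning(ref: str) -> Optional[str]:
    """Return a warning for a mutable image reference, or None if it is pinned by digest."""
    if DIGEST_RE.search(ref):
        return None
    # The last path segment is "name[:tag]"; an earlier ':' may be a registry port.
    last_segment = ref.rsplit("/", 1)[-1]
    if ":" not in last_segment:
        return f"{ref}: no tag, resolves to 'latest'"
    if last_segment.endswith(":latest"):
        return f"{ref}: uses the mutable 'latest' tag"
    return f"{ref}: tag is mutable; pin by digest"
```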

In the serverless vs container security comparison, serverless reduces infrastructure security burden, while containers demand more responsibility but offer deeper control over the security posture.

Serverless vs Container: Developer Experience & DevOps

From a developer productivity standpoint, the serverless vs container experience differs significantly.

Serverless allows developers to focus purely on writing business logic. Deployment is often a single CLI command: no Dockerfiles, no Helm charts, no cluster management. This simplicity accelerates development cycles and is especially valuable for small teams and startups.

Containers require more setup: writing Dockerfiles, managing container images, configuring CI/CD pipelines, and handling container lifecycle management. However, this investment pays off in portability, reproducibility, and environment consistency. Containers shine in complex DevOps pipelines where multiple services interact.

When evaluating serverless vs. containers for developer experience, serverless has a gentler learning curve. Containers reward teams with the expertise and time to manage them properly.

Serverless vs Container: Best Use Cases

Choosing the right tool means matching the technology to the workload. Here’s how the serverless vs container use cases compare:

When to Use Serverless

- REST API backends with variable traffic

- Data transformation and ETL pipelines

- Scheduled background tasks (cron jobs)

- Webhook handlers and event-driven processing

- Chatbots and notification systems

- CI/CD automation tasks

When to Use Containers

- Long-running microservices and APIs

- Stateful databases and session-heavy applications

- Machine learning model serving

- Enterprise applications with strict compliance needs

- Applications requiring GPU access or custom runtimes

- Multi-service platforms with complex inter-service communication

Can Serverless and Containers Work Together?

Absolutely, and this is where modern cloud-native architecture gets exciting. The serverless vs container debate doesn’t have to be an either/or choice. Platforms like AWS Fargate, Google Cloud Run, and Knative blend both paradigms, running containerized workloads in a serverless execution model.

A common hybrid architecture:

- Use serverless functions for event-driven tasks, lightweight APIs, and background jobs

- Use containers for heavy-compute services, persistent workloads, and stateful microservices

- Connect them via message queues (SQS, Pub/Sub) or API gateways
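On the serverless side of such a queue, a function typically receives messages in batches. The sketch below assumes an AWS Lambda function subscribed to an SQS queue with the `ReportBatchItemFailures` response type enabled, in which case returning `{"batchItemFailures": [{"itemIdentifier": <messageId>}]}` tells Lambda to retry only the failed messages. `process_order` is a hypothetical placeholder for the real work.

```python
import json


def process_order(order: dict) -> None:
    """Hypothetical business logic; raises on invalid input."""
    if "order_id" not in order:
        raise ValueError("missing order_id")


def handler(event, context):
    failures = []
    for record in event.get("Records", []):
        try:
            # SQS delivers the message payload as a string in "body".
            process_order(json.loads(record["body"]))
        except (ValueError, KeyError):
            # Report just this message so the rest of the batch is not retried.
            failures.append({"itemIdentifier": record["messageId"]})
    return {"batchItemFailures": failures}
```

Reporting failures per message keeps one malformed payload from forcing the whole batch to be redelivered to the function.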

In this hybrid model, the serverless vs container question transforms into: “Which tool is right for which part of my system?”

Conclusion

The serverless vs container debate doesn’t have a single winner; it has a right answer for every context. Serverless wins on simplicity, cost-efficiency for variable workloads, and developer speed. Containers win on control, performance consistency, and support for complex, stateful enterprise applications.

As backend architecture continues to evolve, the smartest teams aren’t choosing one over the other; they’re building hybrid systems that use the strengths of both. The key to mastering the serverless vs container decision is understanding your workload, your team’s expertise, and your long-term cloud strategy.

Whether you go serverless, container-first, or hybrid, the most important thing is that your

FAQs

Is serverless really cheaper than containers?

Only for low or sporadic traffic. At high, consistent volume, containers on reserved instances often cost less.

Can serverless and containers work together?

Yes, hybrid architectures use serverless for event-driven tasks and containers for persistent, long-running services.

Which is better for microservices, serverless or containers?

Containers (via Kubernetes) offer more control for complex microservices; serverless works best for stateless, single-purpose functions.

Can serverless run long-running tasks?

Not well. Serverless platforms cap execution time (AWS Lambda stops a function after 15 minutes), which makes containers the right choice for long-running jobs.

Is serverless suitable for machine learning workloads?

Rarely. Many ML workloads need GPU access, large memory, and long runtimes that exceed serverless limits, so containers are usually the right fit.

What is the main difference between serverless and containers?

Serverless abstracts all infrastructure away and runs code on-demand; containers package your app with its dependencies and run continuously on managed or self-hosted infrastructure.

What is Function as a Service (FaaS)?

FaaS is the serverless execution model where individual functions are deployed separately and billed per invocation and execution time. AWS Lambda, Google Cloud Functions, and Azure Functions are the leading examples.

Which is more secure, serverless or containers?

Serverless reduces the infrastructure attack surface, while containers give deeper control over security. Both still require careful IAM configuration and code-level hardening.