Building AI-powered healthcare applications that maintain HIPAA compliance, protect patient data, satisfy federal regulations, and deliver real clinical value without cutting corners.

Why HIPAA Compliance Matters for AI Health Apps

The convergence of artificial intelligence and healthcare has opened extraordinary possibilities, from AI-assisted diagnostics and predictive patient monitoring to personalized treatment recommendation engines.

But this potential comes with a non-negotiable foundation: HIPAA compliance.

The Health Insurance Portability and Accountability Act of 1996 governs how protected health information (PHI) is collected, stored, transmitted, and accessed.

For developers building AI-powered healthcare applications, ignoring HIPAA is not merely a legal risk; it is a direct threat to patient safety and organizational trust.

| $9.77M Avg. healthcare breach cost (IBM 2024) | $1.5M Max annual HIPAA civil penalty per category | 71% Patients say data privacy affects care choices | 3× Healthcare breach cost vs. other industries |

As AI systems increasingly handle sensitive patient data (conversations, lab results, imaging data, and behavioral patterns), understanding and implementing HIPAA safeguards is an essential engineering competency, not just a legal afterthought.

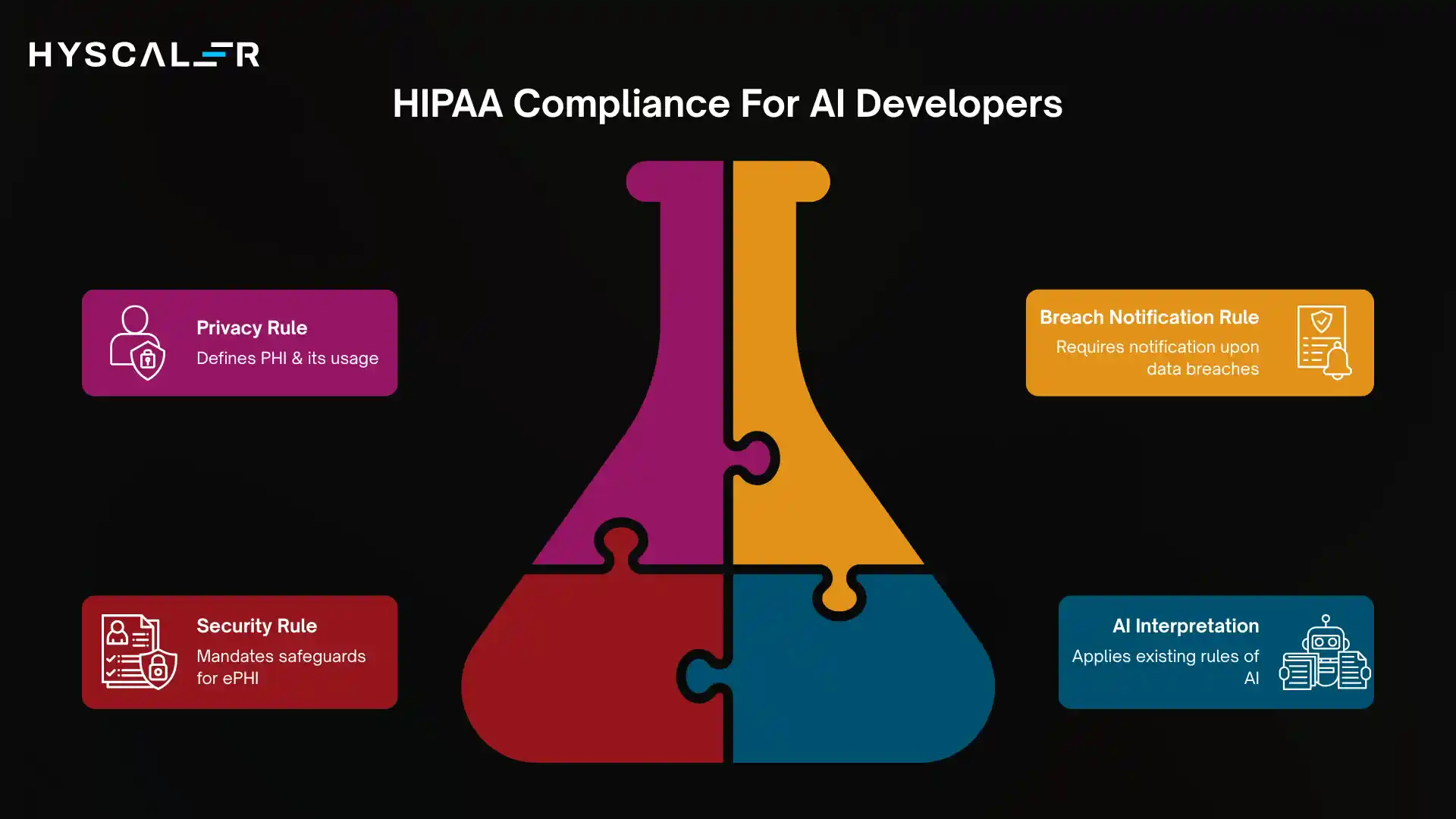

HIPAA Fundamentals Every Developer Must Know

HIPAA compliance is organized around three core rules.

Understanding all three shapes how you architect your AI application from the ground up.

The Privacy Rule

The Privacy Rule defines what constitutes protected health information (PHI), meaning individually identifiable health data in any format, and sets standards for its permissible use and disclosure.

AI systems that process PHI must do so only within strict consent and authorization frameworks.

The Security Rule

The Security Rule specifically addresses electronic PHI (ePHI).

It mandates administrative, physical, and technical safeguards to protect ePHI from unauthorized access, corruption, or disclosure.

AI apps that ingest clinical notes, lab data, EHR records, or patient-reported outcomes are directly subject to this rule.

The Breach Notification Rule

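If a breach of unsecured PHI occurs, covered entities must notify affected individuals without unreasonable delay and no later than 60 days after discovering the breach.

For breaches affecting 500 or more individuals, entities must also notify HHS within the same window, and must notify prominent media outlets when more than 500 residents of a single state or jurisdiction are affected.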

Your AI app must have incident response and logging capabilities to accurately detect, characterize, and report breaches.
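The timing logic above can be sketched in a few lines of Python. This is an illustration only: `BreachReport` and `notification_plan` are hypothetical names, the 500-individual threshold is applied as "at or above", and the media notice is simplified (it ignores the per-state count). Treat the output as a planning aid, not a legal determination.

```python
from dataclasses import dataclass
from datetime import date, timedelta

NOTIFICATION_WINDOW_DAYS = 60   # outer limit for notifying individuals
LARGE_BREACH_THRESHOLD = 500    # assumption: applied as "at or above"

@dataclass(frozen=True)
class BreachReport:
    discovered_on: date
    affected_individuals: int

def notification_plan(report: BreachReport) -> dict:
    """Return the latest allowed notification date and the parties to notify."""
    recipients = ["affected individuals"]
    if report.affected_individuals >= LARGE_BREACH_THRESHOLD:
        # Simplified: the media notice really depends on per-state counts.
        recipients += ["HHS", "media"]
    return {
        "deadline": report.discovered_on + timedelta(days=NOTIFICATION_WINDOW_DAYS),
        "recipients": recipients,
    }
```

The deadline is an upper bound; an incident response runbook would normally aim to notify well before it.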

| Key Insight: HIPAA does not specifically mention AI or machine learning. This does not exempt AI systems; it means existing rules apply to AI in ways that require careful, case-by-case interpretation. The OCR (Office for Civil Rights) evaluates AI use cases under existing HIPAA frameworks. |

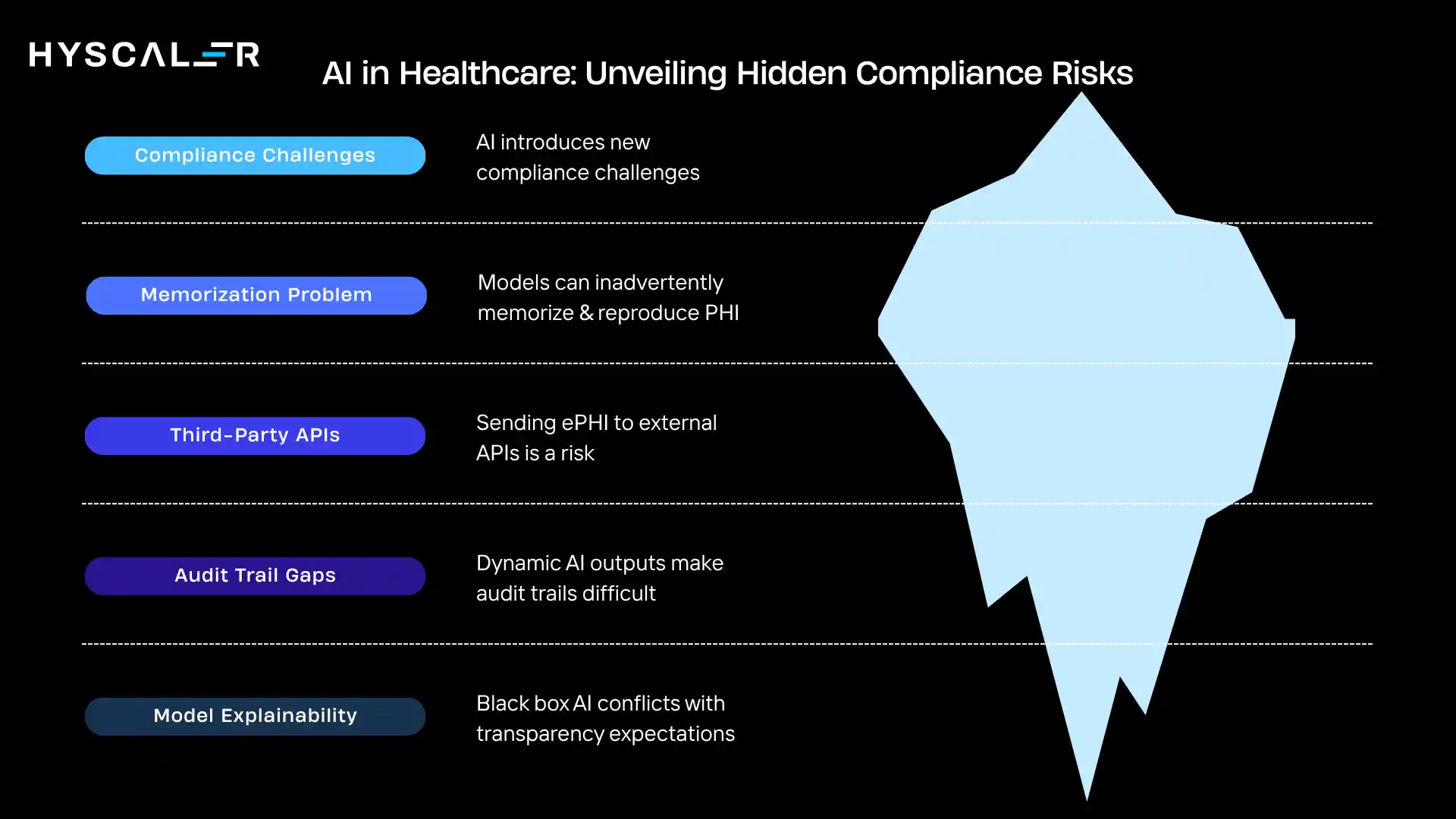

Risk Areas Unique to AI in Healthcare

AI introduces compliance challenges that traditional healthcare software does not face.

Developers must be aware of how machine learning workflows create new PHI exposure vectors.

Training data and model memorization

Large language models trained on clinical datasets can inadvertently memorize and reproduce PHI.

This is known as the memorization problem; a model may regurgitate specific patient records when prompted in certain ways.

De-identification alone is insufficient if re-identification remains possible through model outputs.

Third-party AI APIs and cloud inference

Sending ePHI to external AI APIs for inference is a major compliance risk unless a valid Business Associate Agreement (BAA) is in place.

Most consumer-facing AI APIs are not HIPAA-eligible by default.

Audit trail gaps

Traditional HIPAA auditing expects clear, deterministic logs of who accessed what data and when.

AI systems that generate dynamic outputs, use probabilistic reasoning, or run asynchronous inference pipelines can make audit trails difficult to construct without deliberate engineering.
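One way to make inference activity auditable is to write a log entry for every call and chain the entries by hash, so that later edits are detectable. The sketch below is a minimal in-memory version using only the standard library; the `AuditLog` class and its field names are assumptions for illustration, and a production system would persist entries to write-once storage.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry

class AuditLog:
    """Append-only log in which each entry commits to the previous entry's hash."""

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(entry: dict) -> str:
        # Hash every field except the stored hash itself, in a stable key order.
        payload = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()

    def append(self, user_id, action, resource_id, model_version=None) -> dict:
        entry = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "user_id": user_id,
            "action": action,
            "resource_id": resource_id,      # an identifier, never the PHI itself
            "model_version": model_version,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS_HASH,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; any edited or removed entry breaks it."""
        prev = GENESIS_HASH
        for entry in self.entries:
            if entry["prev_hash"] != prev or entry["hash"] != self._digest(entry):
                return False
            prev = entry["hash"]
        return True
```

Recording the model version alongside the user and resource ties a probabilistic output back to a specific, reproducible model build.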

Model explainability and patient rights

Patients have the right to understand how clinical decisions are made about them.

AI systems that operate as black boxes may conflict with transparency expectations, particularly as the FDA and HHS publish updated guidance on AI-assisted clinical decision support (CDS) tools.

| Watch Out: Sending patient conversations or clinical documents to a public LLM API without a signed BAA is a direct HIPAA violation. Informally considering the data ‘de-identified’ does not change that; only data de-identified under the Safe Harbor or Expert Determination method falls outside PHI. |

The 6 Pillars of Compliant AI App Architecture

Building a HIPAA-compliant AI healthcare application requires designing compliance into every layer of the architecture, not bolting it on at the end.

1. Encryption at rest & in transit: AES-256 for stored ePHI; TLS 1.2+ for all data transmission. No exceptions.

2. Access control & authentication: Role-based access control (RBAC), multi-factor authentication, and least-privilege principles.

3. Immutable audit logging: Every access, query, inference call, and data transformation is logged with timestamps and user IDs.

4. De-identification pipelines: Safe Harbor or Expert Determination methods for training data; automatic PII scrubbing before model input.

5. BAA coverage for all vendors: Every cloud provider, AI API, analytics tool, and subprocessor touching ePHI must have a signed BAA.

6. Explainability & model governance: Version control for models, documented training datasets, and explainability layers for clinical outputs.
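As an illustration of the automatic scrubbing step in pillar 4, the sketch below masks a few structured identifiers with regular expressions before text reaches a model. The pattern set and the `scrub` function are assumptions for illustration. Safe Harbor lists 18 identifier categories, and free-text items such as names and addresses need more than regexes, so this is a starting point rather than a de-identification method.

```python
import re

# Illustrative patterns only; a real pipeline needs far broader coverage.
PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "DATE": re.compile(r"\b\d{1,2}/\d{1,2}/\d{4}\b"),
}

def scrub(text: str) -> str:
    """Replace each matched identifier with a bracketed type label."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text
```

Replacing identifiers with typed labels, rather than deleting them, keeps the sentence structure intact for downstream summarization.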

Encryption standards in practice

All ePHI must be encrypted both at rest and in transit.

For cloud-hosted AI applications, this means configuring server-side encryption on all storage (e.g., AWS S3 with SSE-KMS, GCP Cloud Storage with CMEK), enforcing TLS 1.2 or higher for all API communications, and ensuring that any caching layers also encrypt stored data.
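On the client side, the TLS floor can be enforced in code rather than left to defaults. A minimal sketch with Python's standard `ssl` module follows; `make_client_context` is a hypothetical helper name.

```python
import ssl

def make_client_context() -> ssl.SSLContext:
    """Build a client TLS context that rejects anything older than TLS 1.2."""
    ctx = ssl.create_default_context()            # certificate and hostname checks on
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2  # refuse TLS 1.0 and 1.1
    return ctx
```

The returned context can be passed to standard-library clients, for example `http.client.HTTPSConnection(host, context=make_client_context())`.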

Minimum necessary data principle

AI models should only receive the subset of PHI genuinely required to perform their function.

Engineers must design data pipelines that enforce the minimum necessary standard programmatically, not just as a policy guideline.
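One way to enforce this in code is a per-purpose allow-list applied before any record reaches a model. The purposes and field names below are hypothetical; the point of the sketch is that an undefined purpose fails closed instead of passing the full record through.

```python
# Hypothetical policy: which fields each model purpose is allowed to see.
ALLOWED_FIELDS = {
    "readmission_risk": {"age", "diagnosis_codes", "prior_admissions"},
    "note_summarization": {"clinical_note"},
}

def minimum_necessary(record: dict, purpose: str) -> dict:
    """Return only the fields permitted for this purpose; fail closed otherwise."""
    if purpose not in ALLOWED_FIELDS:
        raise PermissionError(f"no field policy defined for purpose {purpose!r}")
    allowed = ALLOWED_FIELDS[purpose]
    return {key: value for key, value in record.items() if key in allowed}
```

Keeping the policy in one reviewable table also gives auditors a single place to see what each model receives.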

Model versioning and reproducibility

Compliance audits may require you to reproduce an AI system’s outputs at a given point in time.

This demands robust model versioning that tracks not just weights and hyperparameters but also training data snapshots, pre-processing logic, and inference configurations.

Tools like MLflow, DVC, or W&B serve this purpose in healthcare ML pipelines.
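Independent of any particular tool, the underlying idea is a manifest that pins every input needed to reproduce an output. The sketch below uses only the standard library and does not represent the MLflow, DVC, or W&B APIs; `build_manifest` and its field names are assumptions.

```python
import hashlib
import json

def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()

def build_manifest(model_version: str, weights: bytes, training_snapshot_id: str,
                   preprocessing_source: str, inference_config: dict) -> dict:
    """Pin weights, data snapshot, pre-processing code, and config in one record."""
    manifest = {
        "model_version": model_version,
        "weights_sha256": sha256_hex(weights),
        "training_snapshot_id": training_snapshot_id,
        "preprocessing_sha256": sha256_hex(preprocessing_source.encode()),
        "inference_config": inference_config,
    }
    # The manifest ID changes if any pinned component changes.
    canonical = json.dumps(manifest, sort_keys=True).encode()
    manifest["manifest_id"] = sha256_hex(canonical)
    return manifest
```

Storing the `manifest_id` with each logged inference makes it possible to say which exact build produced a given output.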

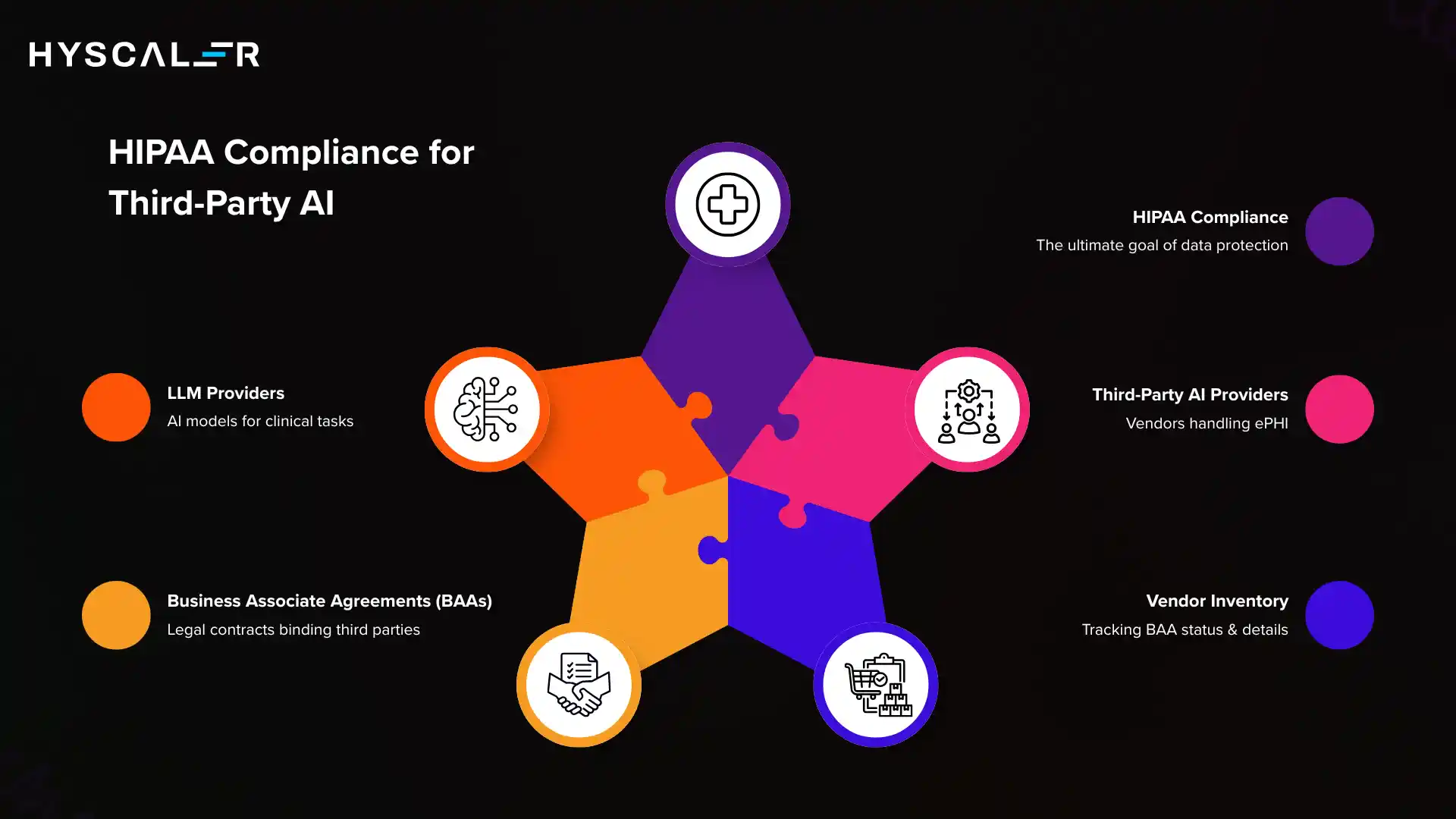

Business Associate Agreements and Third-Party AI

Any vendor, cloud provider, or AI platform that creates, receives, maintains, or transmits ePHI on behalf of a covered entity is a Business Associate under HIPAA.

Before sending any patient data to a third-party AI service, you must have a signed BAA in place.

| "A Business Associate Agreement is not merely a formality; it is the legal instrument that binds external parties to the same PHI protection obligations as your organization." (HHS Office for Civil Rights) |

Which major AI/cloud providers offer HIPAA BAAs?

Several enterprise cloud and AI providers offer HIPAA-eligible services with BAAs available:

- Amazon Web Services (AWS): selected services

- Google Cloud Platform (GCP): selected services

- Microsoft Azure: selected services

- Google Workspace for Enterprise: selected services

Crucially, not all services within these platforms are HIPAA-eligible; developers must verify eligibility at the individual service level.

LLMs and HIPAA: what to verify

If your AI healthcare application integrates a large language model for clinical note summarization, patient Q&A, or triage assistance, you must confirm whether the LLM provider offers a BAA and which API endpoints are covered.

Some providers offer separate HIPAA-eligible API tiers with enhanced data handling commitments.

| Best Practice: Maintain a vendor inventory that tracks BAA status, expiration dates, covered services, and subprocessor chains for every third-party integration in your healthcare AI stack. Review and re-sign BAAs when vendors update their service terms. |
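A vendor inventory like this can double as a runtime gate. The sketch below assumes a hypothetical `VendorRecord` structure and checks three things before any ePHI leaves your system: a BAA is signed, it has not expired, and the specific service is covered. The vendor and service names in the usage note are made up.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional, Set

@dataclass
class VendorRecord:
    name: str
    baa_signed: bool
    baa_expires: Optional[date] = None           # None means no fixed expiry
    covered_services: Set[str] = field(default_factory=set)

def may_receive_phi(vendor: VendorRecord, service: str, today: date) -> bool:
    """Allow ePHI only for a covered service under a signed, unexpired BAA."""
    if not vendor.baa_signed:
        return False
    if vendor.baa_expires is not None and today > vendor.baa_expires:
        return False
    return service in vendor.covered_services
```

For example, `may_receive_phi(VendorRecord("ExampleCloud", True, date(2026, 1, 1), {"storage"}), "llm-api", date.today())` returns `False`, because the BAA does not cover that service.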

Testing and Audit Strategies

Compliance is not a one-time certification; it is a continuous process.

Healthcare AI applications require dedicated testing strategies that go beyond standard software QA.

Penetration testing and vulnerability assessment

Regular penetration testing of ePHI systems is a practical way to support the risk analysis and periodic evaluation that HIPAA’s Security Rule requires.

This includes testing API endpoints that handle patient data, AI inference pipelines, and data storage layers.

Third-party security assessors with healthcare domain experience are recommended for formal assessments.

PHI data flow mapping

Produce and maintain a complete data flow diagram showing every path that ePHI takes through your AI application, from ingestion and pre-processing through model inference, output generation, caching, logging, and deletion.

This map becomes the foundation of your HIPAA risk analysis.
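A data flow map is easier to keep current when it lives next to the code as data. The sketch below uses hypothetical component names and flags three kinds of gaps: components that appear in a flow but not in the inventory, components that store ePHI unencrypted, and external components without a BAA.

```python
# Hypothetical inventory of components that touch ePHI.
NODES = {
    "ehr_ingest":     {"external": False, "encrypted": True,  "baa": False},
    "preprocess":     {"external": False, "encrypted": True,  "baa": False},
    "llm_api":        {"external": True,  "encrypted": True,  "baa": True},
    "response_cache": {"external": False, "encrypted": False, "baa": False},
}

# Directed ePHI flows between components.
FLOWS = [
    ("ehr_ingest", "preprocess"),
    ("preprocess", "llm_api"),
    ("llm_api", "response_cache"),
    ("llm_api", "analytics"),   # deliberately absent from NODES
]

def find_gaps(nodes: dict, flows: list) -> list:
    """Return a sorted list of human-readable compliance gaps."""
    gaps = set()
    for src, dst in flows:
        for name in (src, dst):
            if name not in nodes:
                gaps.add(f"{name}: in a flow but missing from the inventory")
    for name, props in nodes.items():
        if not props["encrypted"]:
            gaps.add(f"{name}: stores or relays ePHI without encryption")
        if props["external"] and not props["baa"]:
            gaps.add(f"{name}: external component without a BAA")
    return sorted(gaps)
```

Running `find_gaps(NODES, FLOWS)` in CI turns the diagram into a check that fails when someone adds an undocumented path.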

AI-specific testing: adversarial probing

For LLM-based applications, adversarial testing should include:

- Prompt injection attempts designed to extract memorized PHI

- Edge-case inputs that might reveal private training data

- Attempts to manipulate the model into bypassing PHI filtering logic

Red-teaming AI systems for privacy vulnerabilities is an emerging but essential practice in healthcare AI quality assurance.
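A simple starting point for the probes listed above is a canary test: plant synthetic, obviously fake records in the training or retrieval data, then check whether any prompt makes the model repeat them verbatim. In the sketch below, `model_fn` is a hypothetical callable that wraps your model, and the exact substring match is an assumption; real red-teaming would also look for paraphrased or partial leaks.

```python
from typing import Callable, Iterable, List

def leaked_canaries(outputs: Iterable[str], canaries: Iterable[str]) -> List[str]:
    """Return the canary strings that appear verbatim in any model output."""
    outputs = list(outputs)
    return sorted({c for c in canaries if any(c in out for out in outputs)})

def run_probe(model_fn: Callable[[str], str], prompts: Iterable[str],
              canaries: Iterable[str]) -> List[str]:
    """Send each adversarial prompt to the model and report leaked canaries."""
    outputs = [model_fn(prompt) for prompt in prompts]
    return leaked_canaries(outputs, canaries)
```

An empty result does not prove the model is safe; it only shows that these prompts did not surface these canaries.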

Developer Compliance Checklist

Use this checklist during design, development, and pre-launch review of any HIPAA-regulated AI healthcare application.

- All ePHI is encrypted at rest (AES-256) and in transit (TLS 1.2+)

- Role-based access control implemented with least-privilege defaults

- Multi-factor authentication is enforced for all ePHI-adjacent system access

- Immutable, timestamped audit logs for every ePHI access event

- Training data de-identified using Safe Harbor or Expert Determination

- Automatic PII/PHI scrubbing is applied before data reaches the AI model inputs

- BAAs signed with all cloud providers, AI APIs, and subprocessors

- Data retention and secure deletion policies are documented and enforced

- Model versioning system in place (weights, training data, configs)

- Breach detection, notification, and response procedures documented

- Annual HIPAA risk analysis completed and documented

- Staff trained on HIPAA obligations relevant to AI system use

- Incident response plan tested with tabletop exercises

- PHI data flow diagram created and kept current

- Adversarial/red-team testing conducted on AI PHI handling

Conclusion

HIPAA-compliant AI healthcare app development is simultaneously a technical discipline, a legal responsibility, and an ethical commitment.

As AI systems take on increasingly consequential roles in clinical care, such as summarizing patient histories, flagging diagnostic anomalies, and recommending care pathways, the stakes of non-compliance rise in proportion to the potential benefits.

The developers and organizations that will build the most trusted, durable health tech products are those that treat HIPAA compliance not as a checklist to tick off before launch, but as a design philosophy baked into every architectural decision, vendor selection, and model training pipeline.

Start with the fundamentals: encrypt everything, audit everything, sign your BAAs, and build explainability into your AI systems from day one.

The regulatory landscape will continue to evolve, but privacy-first engineering never goes out of date.

FAQs

What is HIPAA-compliant AI healthcare app development?

HIPAA-compliant AI healthcare app development ensures that AI-powered applications securely handle protected health information (PHI) and meet all HIPAA requirements.

Why is HIPAA compliance important for AI healthcare apps?

HIPAA compliance is essential to protect sensitive patient data, avoid legal penalties, and ensure trust in AI-driven healthcare solutions.

What makes an AI healthcare app HIPAA-compliant?

A HIPAA-compliant app includes data encryption, secure access controls, audit logs, and adherence to HIPAA privacy and security rules.

Can AI be used safely under HIPAA compliance?

Yes, AI can be used safely if the system is designed with HIPAA-compliant frameworks, ensuring data privacy, security, and controlled access.

What are the key HIPAA compliance requirements for AI apps?

Key requirements include PHI encryption, secure data storage, user authentication, audit trails, and Business Associate Agreements (BAAs).

How do you ensure HIPAA-compliant data storage in AI apps?

HIPAA-compliant data storage involves encrypted databases, secure cloud infrastructure, and access restrictions to protect patient data.

What are the risks of non-HIPAA-compliant AI healthcare apps?

Non-HIPAA-compliant apps can lead to data breaches, heavy fines, legal action, and loss of patient trust.

Are cloud-based AI healthcare apps HIPAA-compliant?

Yes, cloud-based AI apps can be HIPAA-compliant if hosted on compliant platforms with proper security measures and signed BAAs.

How much does HIPAA-compliant AI app development cost?

The cost varies depending on features, security requirements, and compliance needs, but HIPAA-compliant development typically costs more than comparable unregulated development because of the additional safeguards, documentation, and testing involved.

How long does it take to build a HIPAA-compliant AI healthcare app?

Development timelines vary, but building a fully HIPAA-compliant AI app can take several months depending on complexity and compliance scope.