Table of Contents

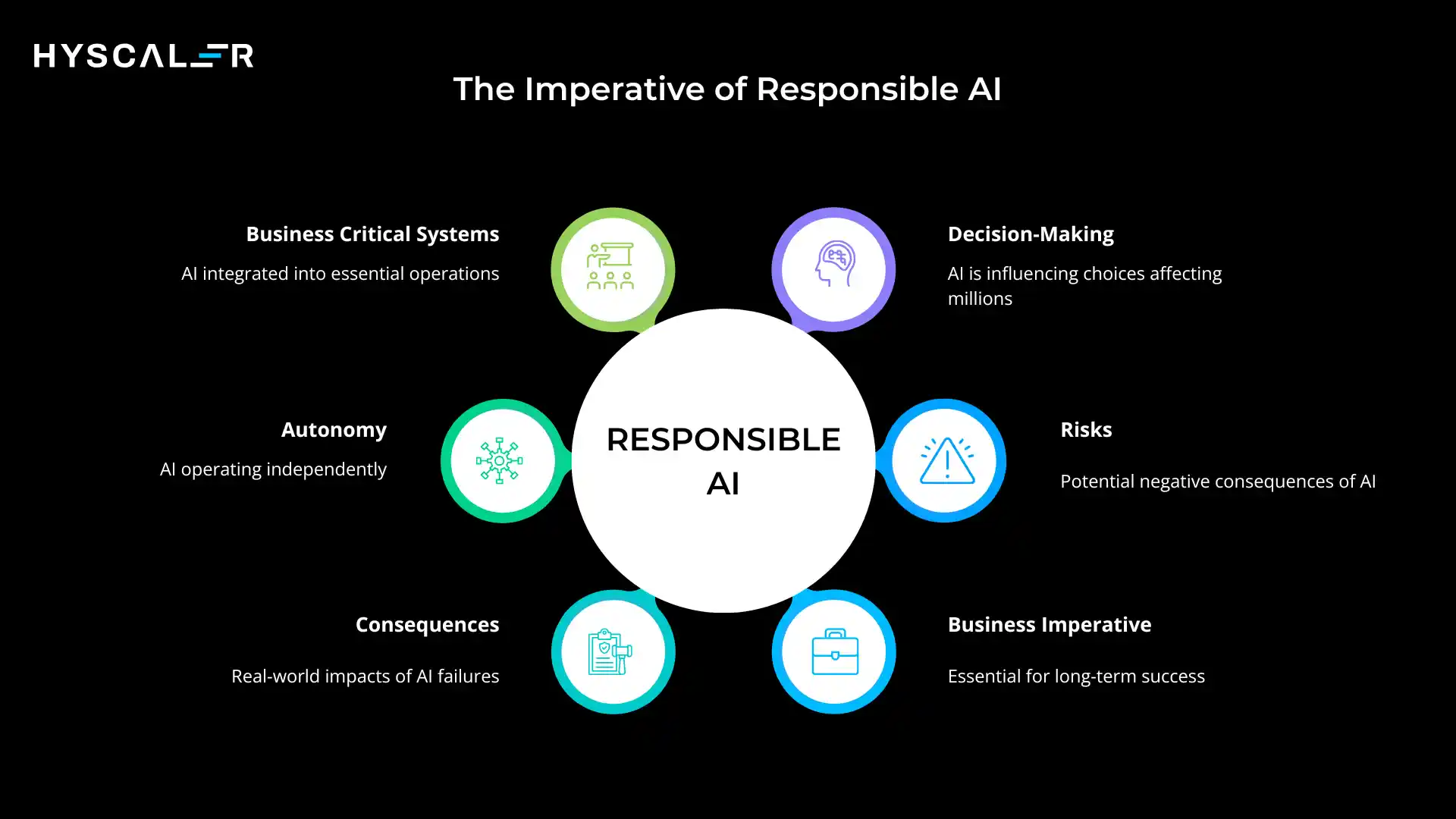

Why Responsible AI Matters Now?

We’ve crossed a threshold.

AI is no longer confined to experimental labs or pilot projects, but it’s running business-critical systems, making decisions that affect millions of lives, and operating with unprecedented autonomy.

In 2026, AI approves loans, screens job candidates, diagnoses diseases, and even acts as autonomous agents managing complex workflows.

But with this power comes substantial risk.

Biased algorithms have denied opportunities to qualified candidates.

Privacy violations have exposed sensitive data.

AI hallucinations have spread misinformation at scale.

The financial and reputational costs of these failures are staggering, with regulatory penalties now reaching into the hundreds of millions.

This is why Responsible AI has evolved from an ethical aspiration into a business necessity.

Organizations that fail to implement responsible practices face not just moral questions, but concrete consequences: regulatory fines, lawsuits, customer defection, and brand damage that can take years to repair.

What is Responsible AI?

At its core, Responsible AI is about building AI systems that work properly and safely in the real world.

It’s the comprehensive approach to ensuring that AI delivers its promised value while minimizing risks and preventing harm.

Definition: Responsible AI revolves around the principles, practices, and governance frameworks that are needed to design, develop, and deploy AI systems that are ethical, transparent, fair, secure, and accountable throughout their entire lifecycle.

Core goal: Enable AI to create genuine value, economic, social, and operational, without causing any harm to people, organizations, or communities.

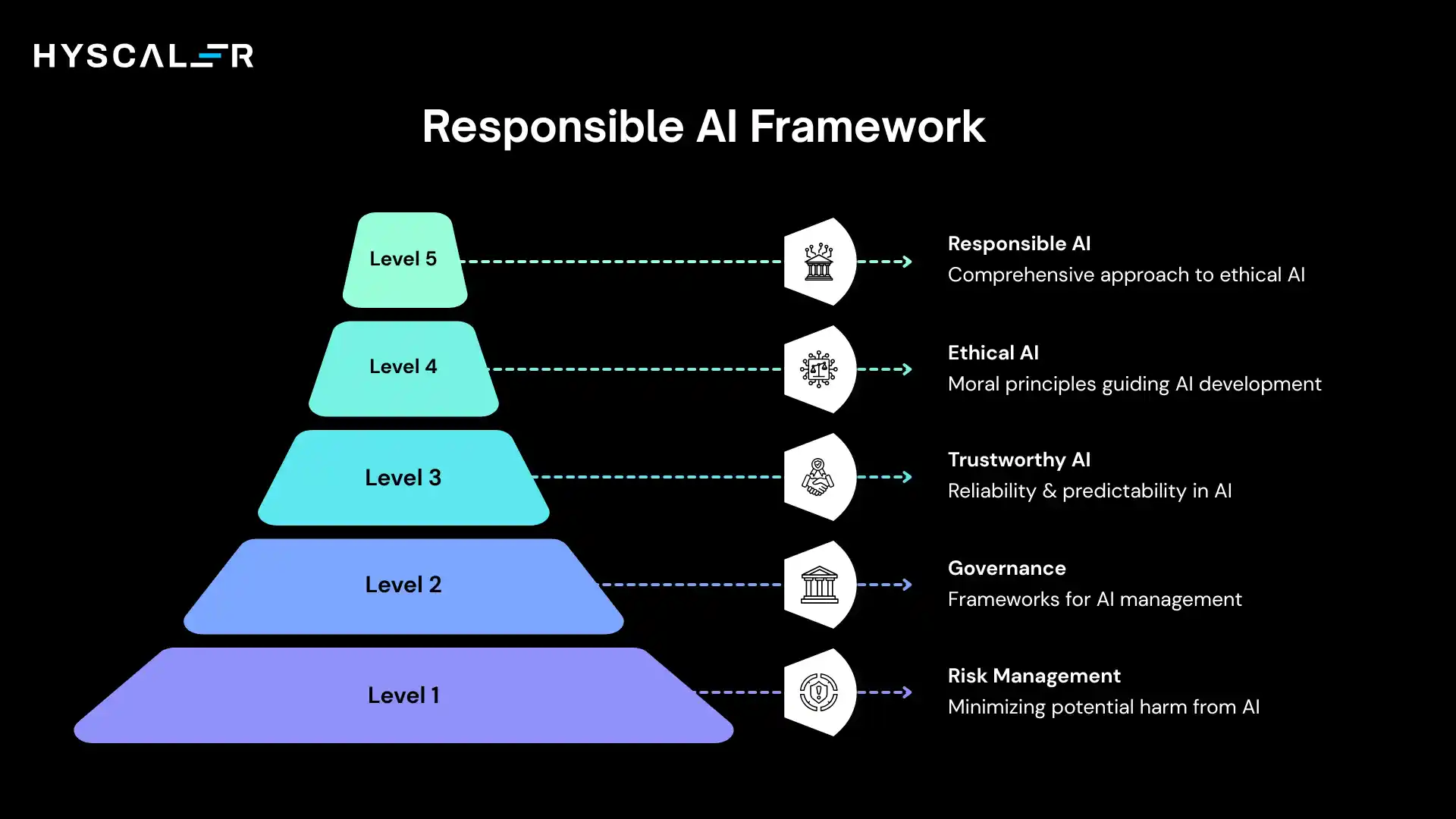

Understanding the terminology:

- Ethical AI focuses specifically on moral principles and values embedded in AI systems

- Trustworthy AI emphasizes building confidence through reliability and predictability

- Responsible AI is the broader operational framework that encompasses both ethics and trust, while adding practical governance, accountability structures, and systematic risk management.

Think of it this way: ethical AI defines what we should do, trustworthy AI ensures we do it reliably, and responsible AI provides the complete system for making it happen at scale.

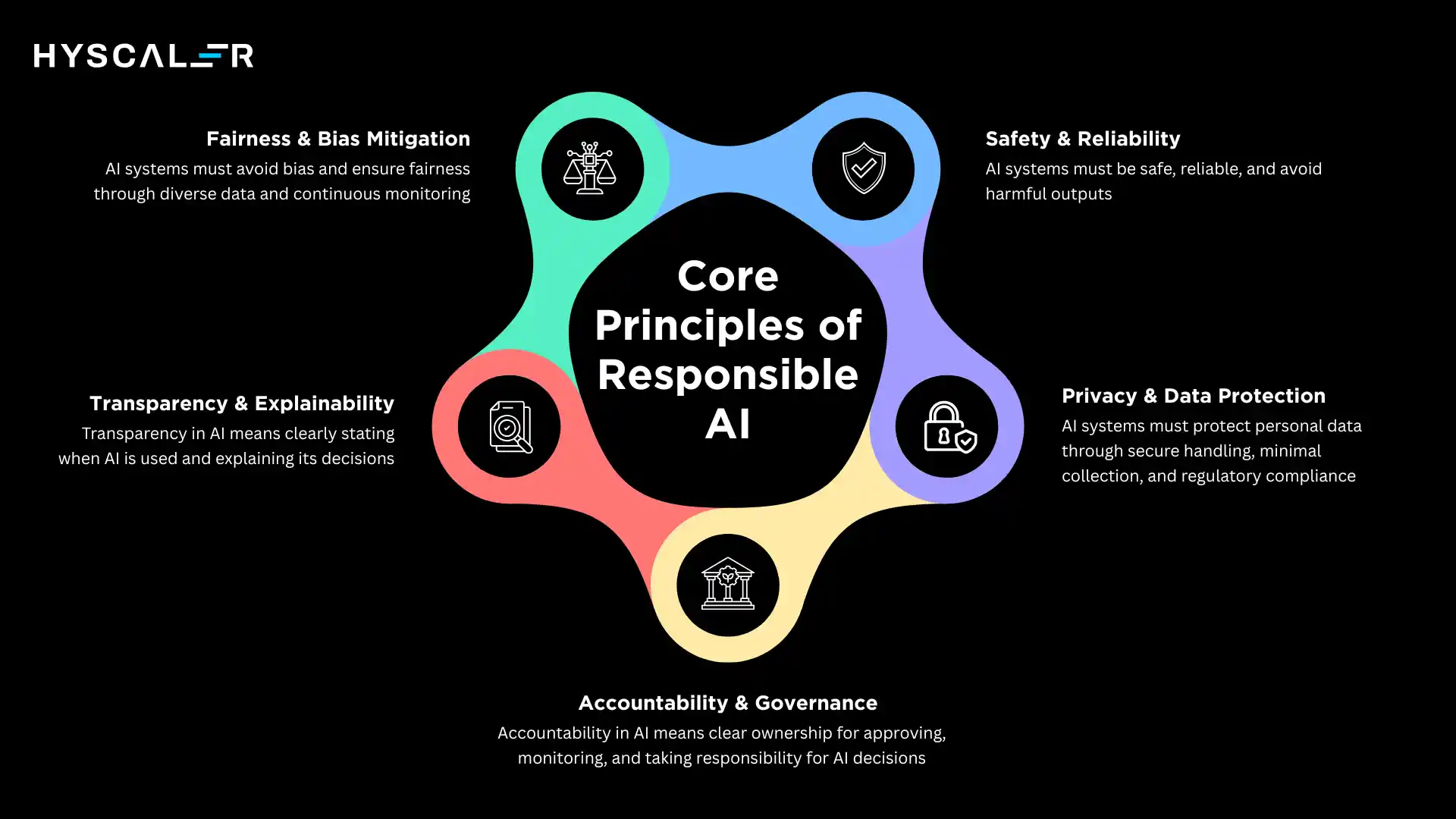

Core Principles of Responsible AI

Fairness and Bias Mitigation

AI systems must treat all individuals and groups equally, avoiding discrimination based on protected characteristics like race, gender, age, or financial status.

The challenge is significant.

AI learns from historical data, which often reflects existing societal biases/discrimination.

Without active intervention, these systems can continue or even amplify discrimination.

Real-world examples:

- Hiring AI: A resume screening tool trained on past hiring decisions may discriminate against women if historical data reflects gender bias in hiring

- Lending AI: Credit scoring algorithms have been found to assign lower scores to qualified applicants from certain zip codes, effectively creating redlining through code

Fairness requires proactive testing across demographic groups, diverse training data, and continuous monitoring for clear impacts.

Transparency and Explainability

People affected by AI decisions deserve to understand how those decisions were made.

This is especially critical in regulated industries like healthcare, finance, and criminal justice.

Transparency operates on multiple levels.

For end users, it means clear communication about when AI is being used and how it influences outcomes.

For technical teams, it requires access to model logic and decision pathways.

For regulators and auditors, it demands comprehensive documentation of model development and deployment.

Explainability goes deeper; it’s the ability to articulate in human terms why an AI system made a specific decision.

When a loan is denied or a job application rejected, the affected person should receive a meaningful explanation, not just “the algorithm decided.”

Accountability and Governance

When AI makes a mistake, someone must be responsible.

Accountability answers the question: who is ultimately answerable for AI decisions and their consequences?

This isn’t just about assigning blame when things go wrong.

It’s about establishing clear ownership throughout the AI lifecycle.

Who validates training data? Who approves model deployments? Who monitors production systems? Who responds to incidents?

Effective AI governance frameworks establish decision rights, approval processes, risk assessment protocols, and escalation paths.

They create organizational structures, like AI ethics boards or responsible AI councils, that provide oversight and ensure alignment with company values and regulatory requirements.

Privacy and Data Protection

AI systems often process vast amounts of personal data.

Protecting individual privacy while extracting analytical value requires sophisticated technical and procedural controls.

This principle demands secure data handling practices, encryption, access controls, and data minimization, collecting only what’s necessary.

It also requires compliance with evolving regulations like GDPR in Europe, the DPDP Act in India, and various state-level privacy laws in the United States.

Organizations must implement privacy-by-design principles, conduct data protection impact assessments, and ensure that AI systems respect individual rights like data access, correction, and deletion.

Safety and Reliability

AI systems must operate predictably and avoid harmful outputs.

This principle becomes increasingly critical as AI takes on more autonomous roles.

Safety encompasses multiple dimensions: preventing the generation of harmful content, ensuring consistent performance across different conditions, maintaining stability under edge cases, and failing gracefully when encountering unexpected inputs.

Reliability means AI systems should perform as intended across their entire operating envelope, with known and acceptable error rates.

Users should be able to trust that an AI system will behave consistently and appropriately, even in novel situations.

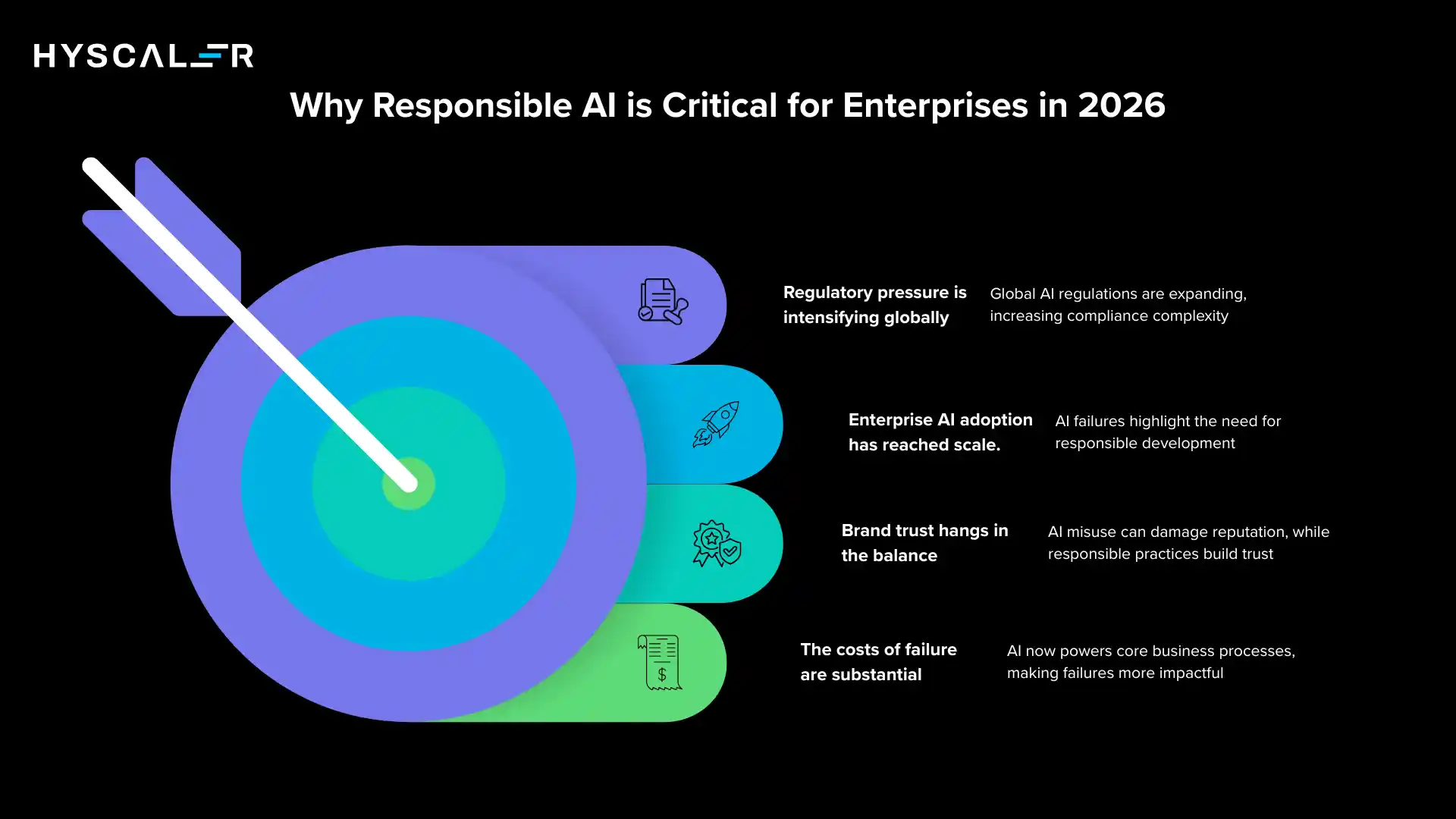

Why Responsible AI is Critical for Enterprises in 2026

The business case for Responsible AI has never been clearer.

Four converging forces make it essential:

Regulatory pressure is intensifying globally

The EU AI Act has taken effect, classifying AI systems by risk level and imposing strict requirements on high-risk applications.

The United States is advancing sector-specific regulations.

India’s Digital Personal Data Protection Act creates new compliance obligations.

China continues expanding its AI governance framework.

Organizations operating internationally face a complex patchwork of requirements, and the penalties for non-compliance are severe.

Enterprise AI adoption has reached scale

AI is no longer a departmental experiment; it’s embedded in core business processes.

Customer service chatbots handle millions of conversations.

AI systems make pricing decisions, manage supply chains, and assess insurance claims. When these systems fail, the impact is immediate and widespread.

Brand trust hangs in the balance

A single incident of algorithmic bias or privacy violation can trigger viral backlash, regulatory investigation, and lasting reputational damage.

Consumers are increasingly aware of AI’s role in their experiences and are increasingly concerned about its misuse.

Organizations that demonstrate responsible practices build competitive advantage through trust.

The costs of failure are substantial

Consider these examples:

- A major tech company paid over $200 million in settlements after its hiring algorithms were found to disadvantage certain demographic groups systematically

- Financial institutions have faced regulatory sanctions for deploying credit models that couldn’t be adequately explained or that showed discriminatory patterns.

- Healthcare AI systems that made diagnostic errors led to patient harm, malpractice claims, and the withdrawal of products from the market.

These aren’t theoretical risks.

They’re business realities that demand systematic approaches to responsible development and deployment.

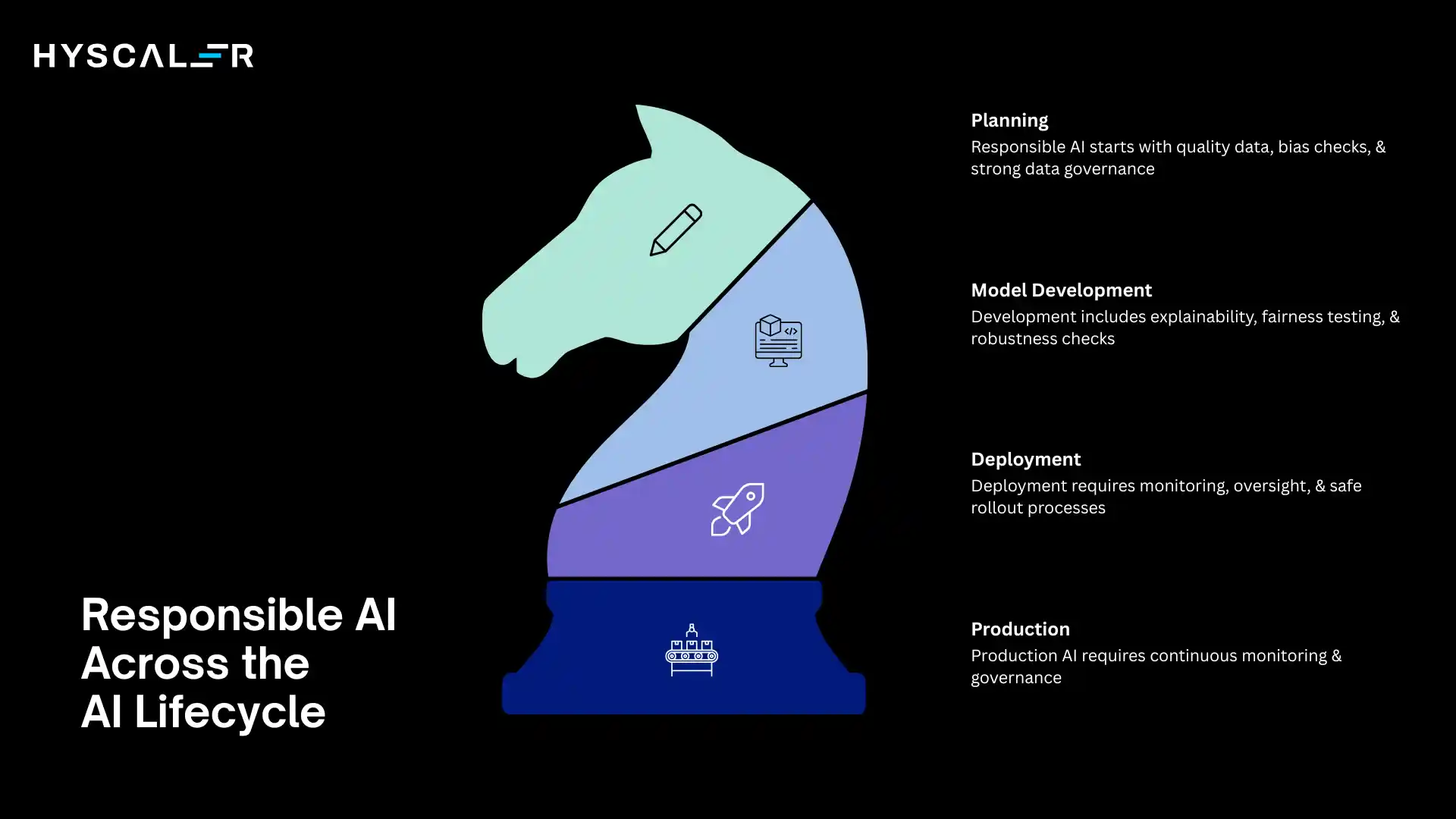

Responsible AI Across the AI Lifecycle

Implementing Responsible AI requires intervention at every stage of development and deployment.

Data Stage

Everything starts with data quality and integrity.

At this stage, organizations must implement rigorous data quality checks, ensuring accuracy, completeness, and relevance.

Bias detection begins here, analyzing training data for imbalances, stereotypes, or historical discrimination that could propagate through the model.

Data governance processes should document data sources, assess data representativeness across relevant demographic groups, and implement controls to protect sensitive information.

This foundation determines everything that follows.

Model Development

During development, teams should integrate explainability tools that provide insight into model behavior.

Fairness testing becomes systematic, measuring performance across demographic groups and testing for disparate impact.

Model documentation, describing architecture, training process, known limitations, and intended use cases, creates the audit trail needed for accountability.

Responsible development also means stress-testing models against adversarial inputs, evaluating robustness, and setting appropriate confidence thresholds for deployment.

Deployment

The transition to production requires careful planning.

Organizations should implement monitoring systems that track model performance, detect drift in input data or predictions, and flag potential bias.

Human-in-the-loop systems create checkpoints where human judgment can override or validate AI decisions, particularly for high-stakes applications.

Deployment processes should include staged rollouts, A/B testing for fairness and performance, and clear rollback procedures if issues emerge.

Production

Once in production, continuous governance becomes essential.

This means ongoing monitoring of model performance and fairness metrics, regular audits against compliance requirements, and systematic processes for investigating and addressing incidents.

Production governance also includes model retraining protocols, documentation updates, and stakeholder communication about AI system capabilities and limitations.

Responsible AI vs Traditional AI Development

The shift toward responsible practices represents a fundamental change in how organizations approach AI:

| Traditional AI | Responsible AI |

| Focus on performance metrics (accuracy, speed) | Focus on performance + ethics, fairness, safety |

| Limited monitoring after deployment | Continuous monitoring across multiple dimensions |

| Minimal governance structure | Strong governance with clear accountability |

| Black-box models are acceptable if performant | Explainable systems are required for high-stakes decisions |

| Reactive approach to problems | Proactive risk management and prevention |

| Technology-driven decisions | Multidisciplinary oversight, including ethics, legal, and business stakeholders |

This transformation requires cultural change as much as technical capability.

It means expanding development teams to include ethicists, social scientists, and domain experts.

It means accepting that some performant models may be rejected due to fairness or explainability concerns.

It means building organizational muscle around difficult tradeoffs between capability and responsibility.

Responsible AI in the Era of Generative and Agentic AI

The rise of generative AI and autonomous agents has dramatically elevated the stakes.

These systems introduce new categories of risk that demand enhanced responsible AI practices.

Generative AI challenges: Large language models and image generators can produce convincing but false information at scale. Hallucinations, plausible-sounding but incorrect outputs, can mislead users who trust the authoritative tone of AI responses. These systems can also generate harmful content, violate intellectual property rights, or reproduce biased patterns from training data.

Agentic AI risks: AI agents that take autonomous actions in the world, booking travel, sending emails, executing financial transactions, create direct pathways from algorithmic decisions to real-world consequences. When these agents operate without adequate oversight, they can make costly mistakes, violate policies, or create legal liability.

These advanced AI systems require enhanced safeguards:

Guardrails are technical controls that prevent AI systems from generating harmful, illegal, or off-brand content. They filter inputs and outputs, enforce content policies, and maintain boundaries around acceptable behavior.

Alignment ensures that AI systems pursue goals that match human values and organizational objectives. This is particularly crucial for agents that optimize toward objectives; without careful alignment, they may achieve goals in unexpected or harmful ways.

Human oversight remains essential, especially for high-stakes decisions. This might mean human approval before agents take irreversible actions, human review of AI-generated content before publication, or human intervention capabilities for monitoring personnel.

The autonomous nature of these systems demands enhanced transparency about their capabilities and limitations, robust testing across diverse scenarios, and fail-safe mechanisms that prevent cascading failures.

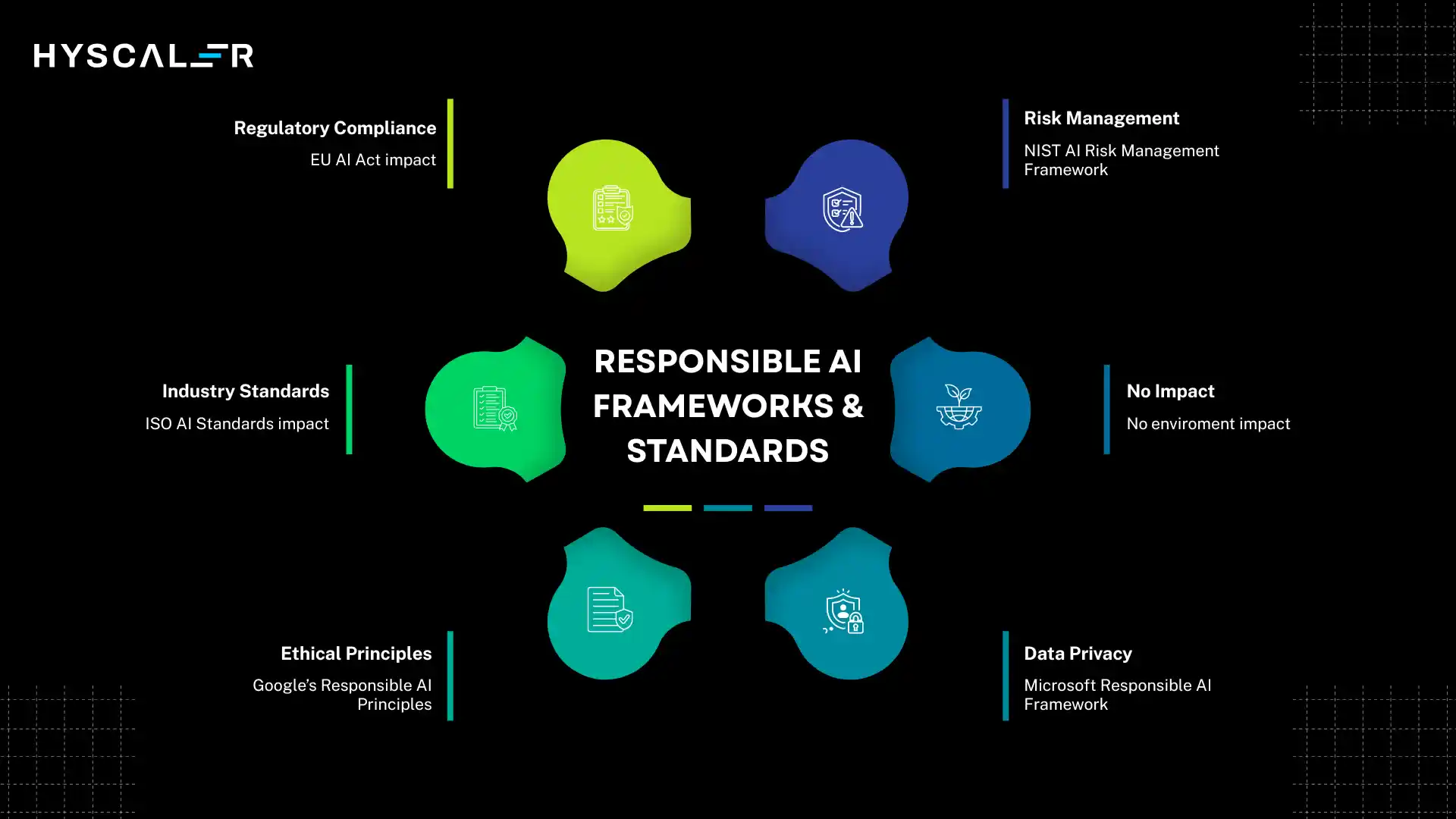

Responsible AI Frameworks and Standards

Organizations don’t need to start from scratch.

Multiple frameworks and standards provide guidance:

NIST AI Risk Management Framework offers a comprehensive, voluntary approach to managing AI risks. It provides a structured process for identifying, assessing, and mitigating risks throughout the AI lifecycle and has gained significant traction among US organizations.

ISO AI Standards are emerging to provide international benchmarks for AI governance, including ISO/IEC 42001 for AI management systems and various standards addressing specific aspects like bias and transparency.

THE EU AI Act represents the world’s first comprehensive AI regulation, classifying systems by risk level (unacceptable, high, limited, minimal) and imposing requirements accordingly. High-risk systems face strict obligations around data governance, documentation, human oversight, and accuracy.

Microsoft Responsible AI Framework articulates six principles: fairness, reliability and safety, privacy and security, inclusiveness, transparency, and accountability, backed by practical implementation tools.

Google’s Responsible AI Principles focus on being socially beneficial, avoiding unfair bias, being built and tested for safety, being accountable to people, incorporating privacy design principles, upholding high standards of scientific excellence, and being made available for uses that accord with these principles.

These frameworks share common themes while offering different entry points and emphases.

Organizations should evaluate which frameworks align with their regulatory environment, industry sector, and organizational maturity.

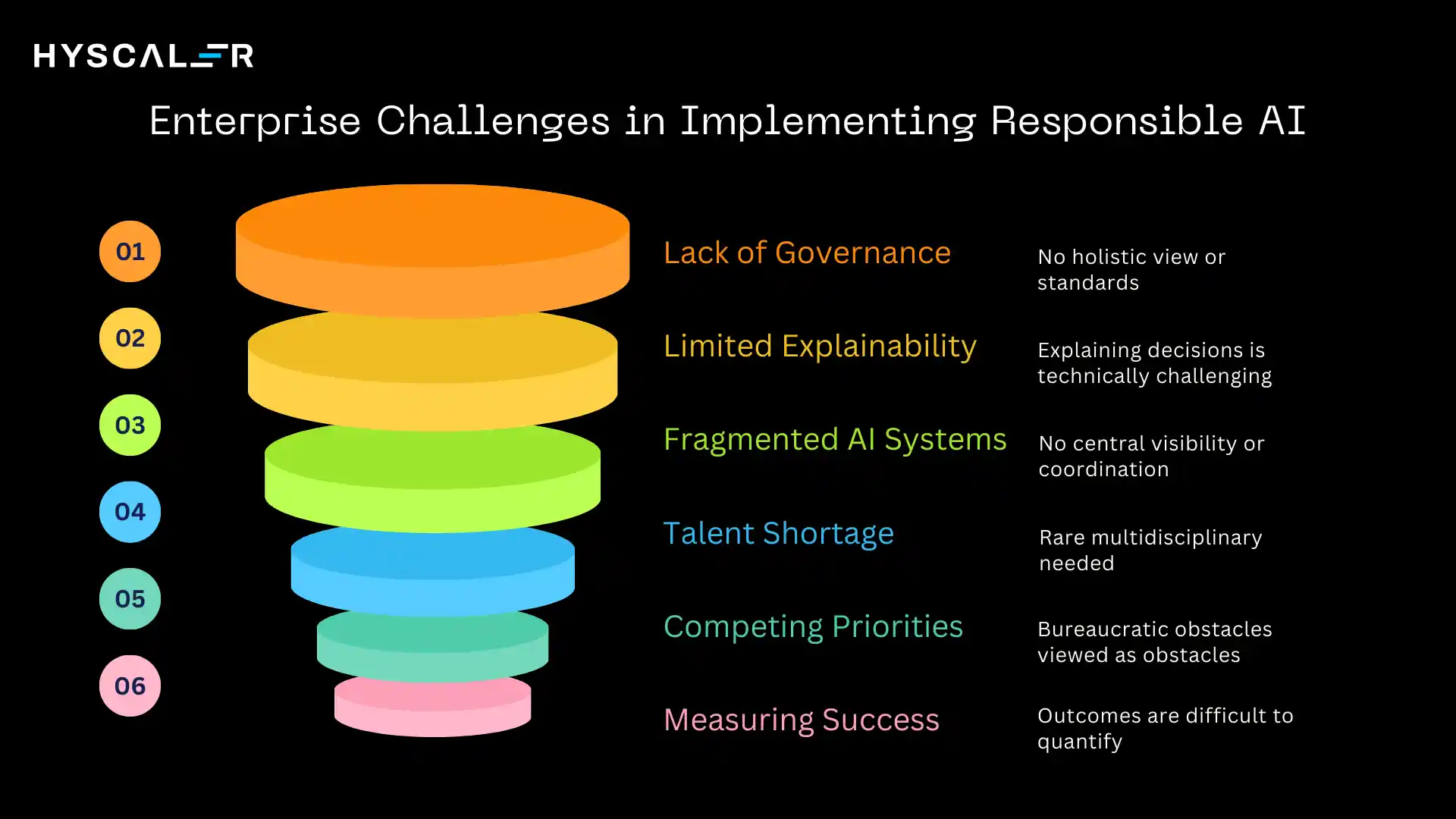

Enterprise Challenges in Implementing Responsible AI

Despite growing awareness, many organizations struggle to operationalize responsible AI practices.

Common challenges include:

Lack of governance structure: Many companies lack clear ownership of AI ethics and responsibility. Technical teams build models, legal reviews for compliance, and business units deploy systems, but no one coordinates the holistic view or enforces standards across projects.

Limited explainability tools: For many advanced models, particularly deep neural networks, explaining specific decisions remains technically challenging. Organizations struggle to balance model performance with interpretability requirements.

Fragmented AI systems: AI implementations often proliferate across business units without central visibility or coordination. This makes it nearly impossible to enforce consistent standards, identify risks, or manage aggregate exposure.

Talent shortage: Responsible AI requires multidisciplinary expertise, data scientists who understand fairness metrics, lawyers who understand AI technology, and ethicists who understand business constraints. These combined skill sets are rare and in high demand.

Competing priorities: Under pressure to deliver AI capabilities quickly, teams may view responsible AI practices as bureaucratic obstacles rather than essential safeguards. Short-term business pressures can override longer-term risk considerations.

Measuring success: Unlike accuracy or speed, responsible AI outcomes can be difficult to quantify. How do you measure whether a system is “fair enough” or “sufficiently transparent”? This ambiguity complicates prioritization and resource allocation.

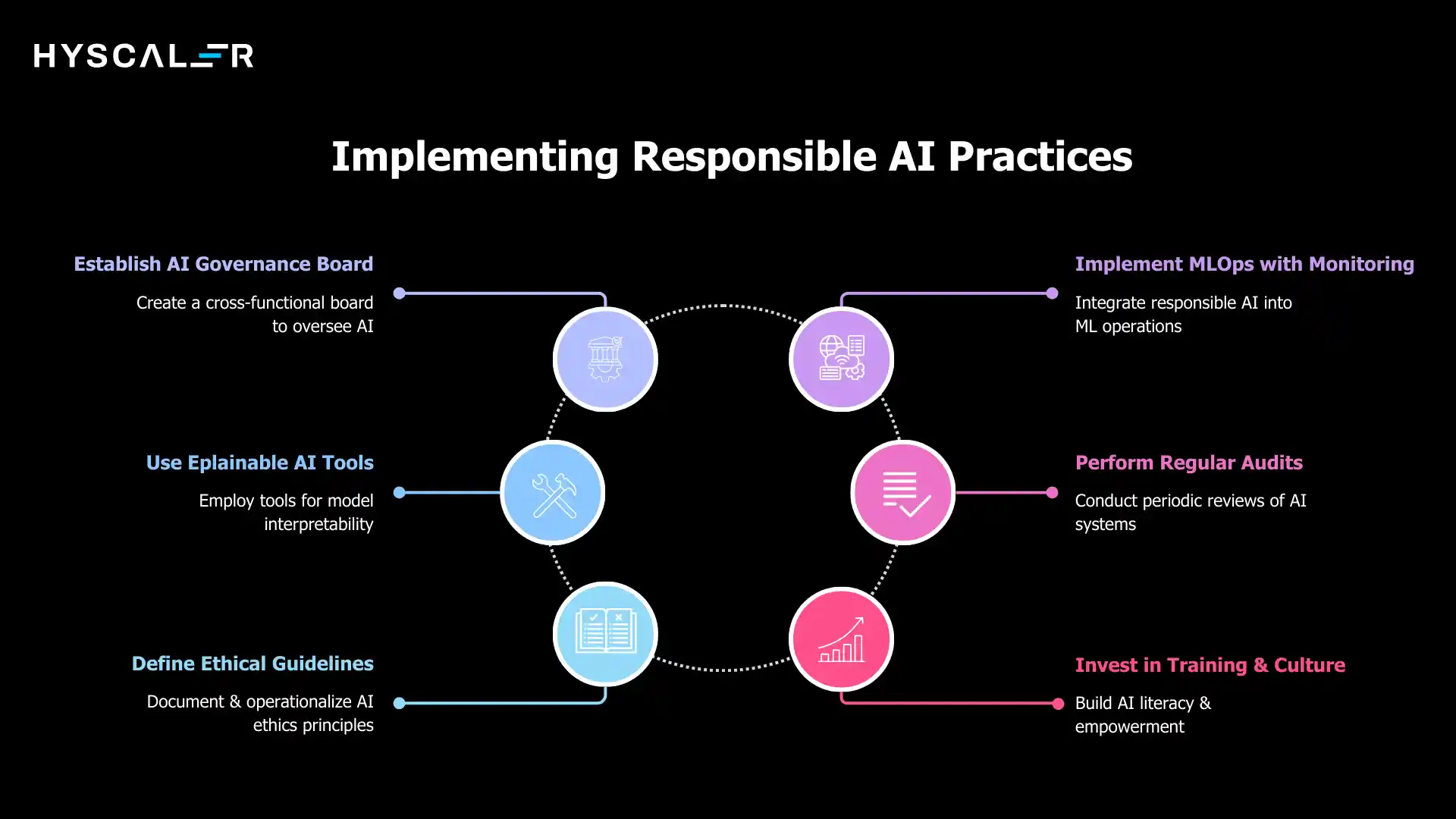

Best Practices to Implement Responsible AI

Organizations that successfully implement responsible AI practices typically follow these approaches:

Establish an AI governance board with cross-functional representation.

This body should include technical leaders, legal counsel, ethicists, business leaders, and subject matter experts.

The board sets standards, reviews high-risk AI projects, investigates incidents, and ensures organizational alignment on responsible AI principles.

Implement MLOps with integrated monitoring.

Build responsible AI directly into your machine learning operations pipeline.

This means automated fairness testing in CI/CD, continuous monitoring dashboards that track bias and drift, and alerts when systems deviate from expected behavior.

Make responsible AI part of the development workflow, not a separate compliance exercise.

Use explainable AI tools.

Invest in technologies that provide model interpretability, SHAP values, LIME, attention visualization, or simpler inherently interpretable models for high-stakes decisions.

Build explanation capabilities into user interfaces so stakeholders can understand AI recommendations.

Perform regular audits.

Schedule periodic reviews of AI systems, examining their performance against responsible AI criteria.

Third-party audits can provide independent validation and identify blind spots in internal processes.

Define clear ethical guidelines.

Document your organization’s AI ethics principles and translate them into operational policies.

What types of AI applications are prohibited? What approval is required for different risk levels? When must humans be in the loop? Make these guidelines concrete and accessible.

Invest in training and culture.

Build responsible AI literacy across the organization.

Developers should understand fairness metrics.

Business leaders should recognize responsible AI risks in project proposals.

Everyone should feel empowered to raise concerns.

Start with high-risk use cases.

Prioritize responsible AI practices for applications with the greatest potential for harm, those affecting employment, credit, healthcare, criminal justice, or vulnerable populations.

Success in these areas builds capability that can scale to other applications.

Future of Responsible AI

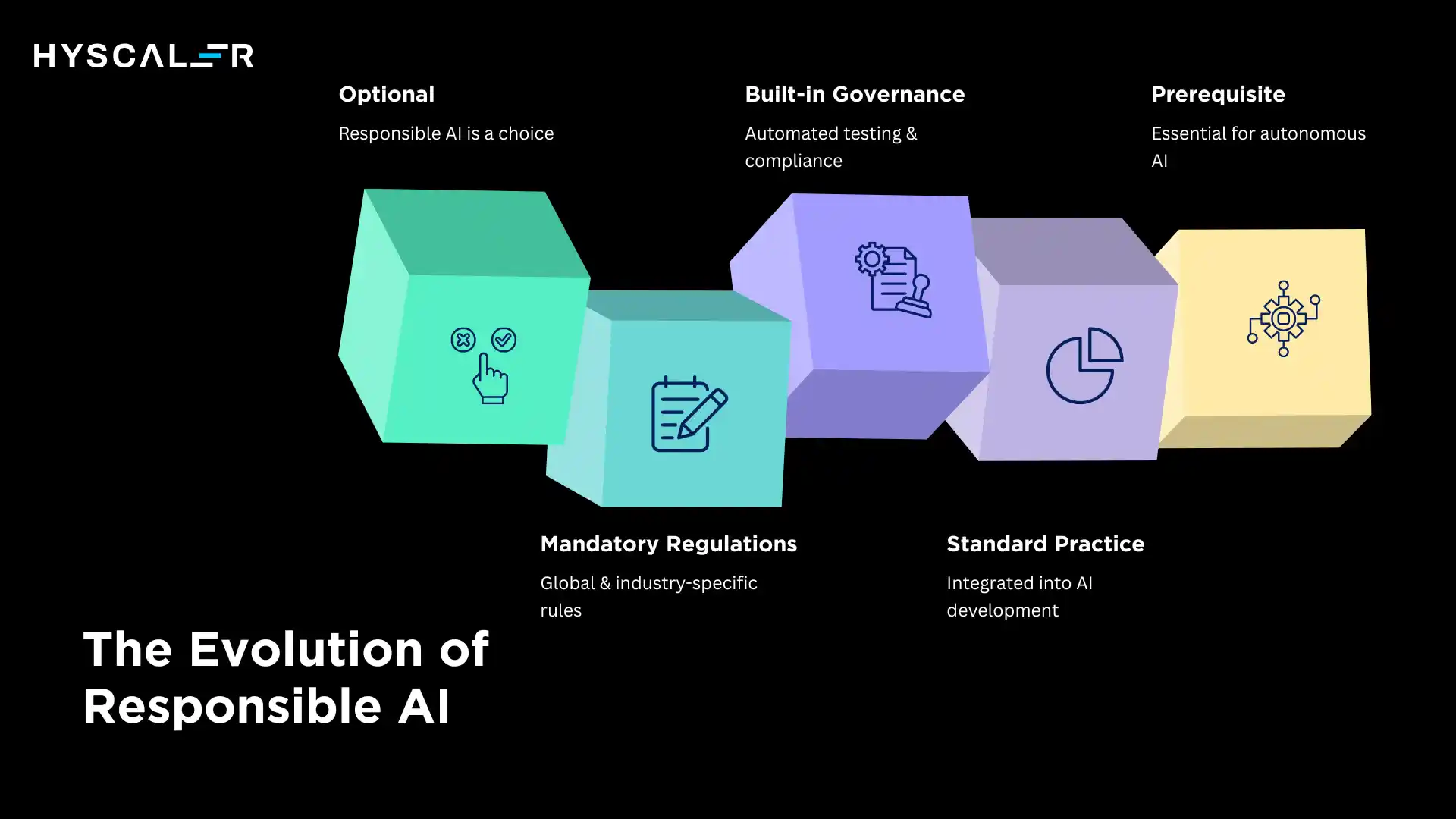

The trajectory is clear: responsible AI is moving from optional to mandatory, from reactive to proactive, from specialized to standard.

Mandatory regulations will continue expanding globally. More jurisdictions will follow the EU’s lead in creating comprehensive AI governance regimes. Industry-specific regulations will proliferate, particularly in finance, healthcare, and employment. Organizations will need sophisticated compliance programs to navigate this landscape.

Built-in governance platforms will emerge as the standard approach. Just as DevOps tools automate software development practices, responsible AI platforms will automate fairness testing, bias detection, explainability, and compliance documentation. These capabilities will be table stakes for enterprise AI platforms.

Responsible AI becoming standard practice means it will be integrated into how organizations think about AI from the beginning, not retrofitted after deployment. Architecture reviews will routinely include responsible AI considerations. Business cases will account for governance costs. Performance metrics will include fairness alongside accuracy.

Key requirement for agentic AI: As AI systems gain autonomy, responsible AI practices won’t just be best practices; they’ll be prerequisites for deployment. No organization will risk deploying autonomous agents without robust governance, monitoring, and control mechanisms.

The competitive advantage will shift.

Organizations that view responsible AI as a compliance burden will lag behind those that recognize it as a source of innovation, differentiation, and trust.

The companies that win will be those that build AI systems people actually want to use, systems that are not just powerful but trustworthy.

Conclusion

Responsible AI isn’t about limiting AI’s potential; it’s about realizing it sustainably.

As AI systems become more capable and more autonomous, the practices that ensure they remain aligned with human values and societal needs become more critical, not less.

The organizations that prioritize responsible AI practices will build trust with customers, employees, regulators, and communities.

They’ll avoid costly failures and regulatory sanctions.

They’ll attract talent that wants to build technology that matters in the right ways.

They’ll be positioned to deploy AI at scale with confidence.

This is the foundation of sustainable AI success.

Not just AI that works, but AI that works for everyone.

Not just AI that’s powerful, but AI that’s trustworthy.

Not just AI that delivers value today, but AI that we can confidently scale tomorrow.

The choice isn’t between innovation and responsibility.

The path forward requires both.

And the time to act is now.

FAQs

What’s the difference between AI ethics and Responsible AI?

AI ethics defines moral principles for AI, while Responsible AI applies them through governance, risk management, and operational practices.

Does implementing Responsible AI slow down development?

Responsible AI may initially slow development but ultimately speeds deployment by preventing failures and reducing rework.

How do I measure if my AI system is “responsible enough”?

Responsible AI is a continuous process measured through fairness, explainability, monitoring coverage, incident response, and regulatory compliance.

Is Responsible AI only relevant for large enterprises?

Responsible AI applies to organizations of all sizes, as even small AI systems can cause harm without basic fairness checks and documentation.

What happens if AI regulations conflict across different countries?

Global AI regulations are diverging, so organizations often follow the strictest standards and maintain documentation to ensure compliance across jurisdictions.

Can automated tools handle Responsible AI, or do I need human oversight?

Automated tools handle large-scale bias and compliance checks, while human oversight ensures context, ethics, and accountability.

How do I balance transparency with protecting proprietary AI technology?

Balance transparency by explaining decisions to users, sharing documentation with regulators, and protecting proprietary details through confidentiality.

What should I do if I discover my deployed AI system is biased?

Act quickly and transparently: assess the bias, pause or recalibrate the system if needed, inform stakeholders, identify root causes, and implement corrective measures.

Is Responsible AI just a passing trend?

AI governance isn’t slowing down; growing regulations, rising expectations, and expanding AI use make responsible practices essential for organizations moving forward.