For decades, AI operated almost entirely in the digital world: it analyzed data and generated outputs but remained passive and disconnected from physical reality.

That is now changing with embodied AI, where intelligence is integrated into physical systems like robots and machines that can perceive, move, and act in real environments.

This shift, from digital to physical intelligence, is one of the most significant advances since deep learning.

It’s driven by progress in AI models, robotics, and sensors, along with the need to solve real-world tasks in industries like manufacturing, logistics, and healthcare.

Embodied AI isn’t just an evolution; it’s the foundation of the next generation of intelligent machines.

What is Embodied AI?

Definition

Embodied AI refers to artificial intelligence systems that are situated in a physical body or agent, allowing them to perceive the world through sensors, reason about what they perceive, and take actions that affect their physical environment.

Unlike traditional AI systems that process static datasets or respond to text prompts, embodied AI agents continuously interact with a dynamic, unpredictable world in real time.

The core idea is borrowed from cognitive science and philosophy: intelligence is not just about computation in the abstract but about how an agent is embedded in, and shaped by, its physical environment.

A mind that can touch, feel, navigate, and manipulate the world develops a richer, more robust form of understanding than one confined to data alone.

Simple Examples

The concept becomes intuitive when you look at real-world examples.

A warehouse robot that rolls down aisles, identifies misplaced items with its cameras, picks them up with a robotic arm, and places them in the correct bin is an embodied AI system.

A self-driving car that reads road signs, detects pedestrians, and steers through traffic is another.

Even a Roomba navigating around furniture and deciding when to return to its charging dock qualifies as a rudimentary form of embodied AI.

Smart home robots that fetch objects, monitor the elderly, or assist with chores represent the consumer-facing frontier of this technology.

Key Characteristics

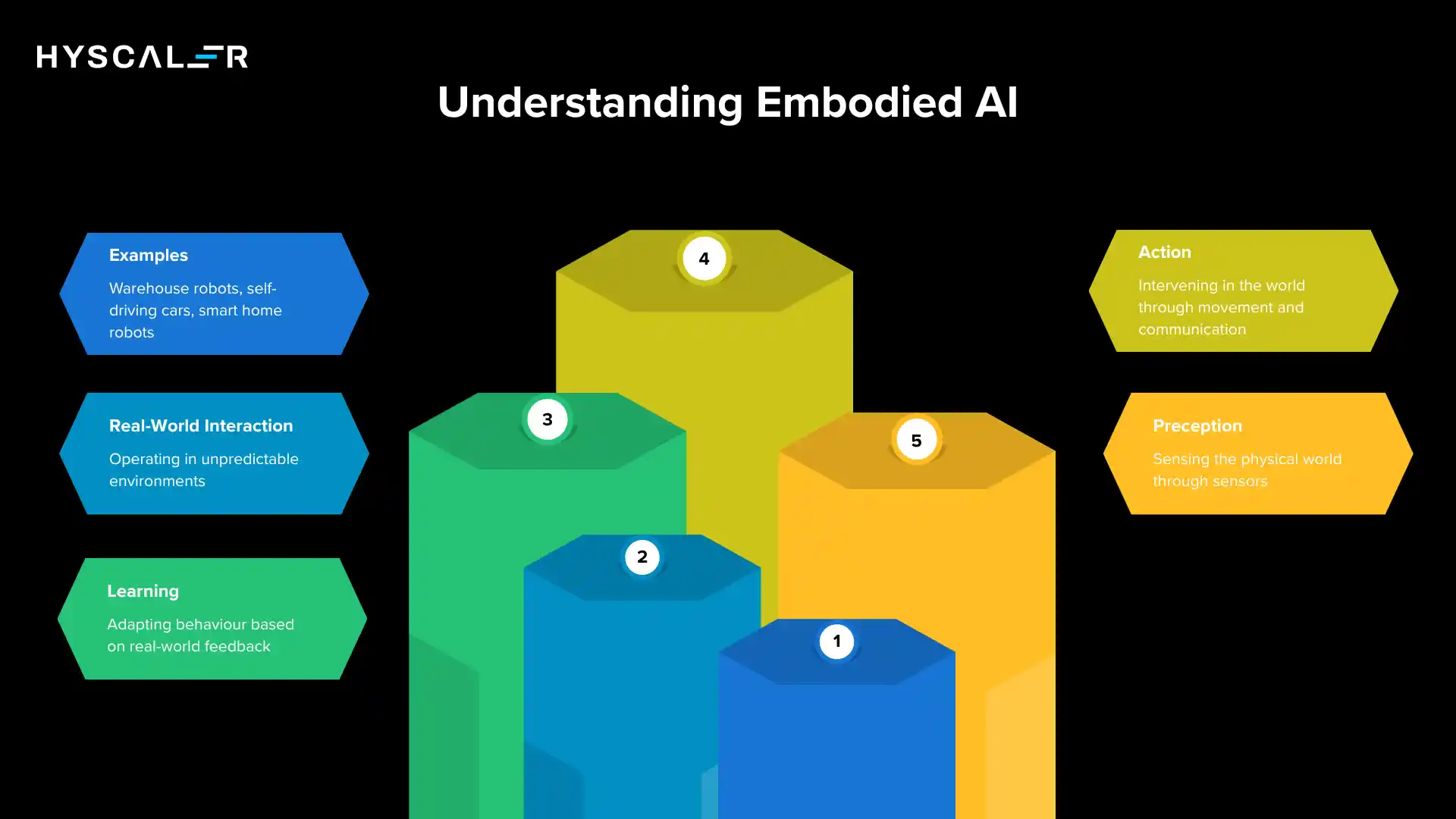

What distinguishes embodied AI from software-only AI comes down to four defining characteristics.

The first is perception, the ability to sense the physical world through cameras, microphones, touch sensors, and other instruments.

The second is action, the capacity to intervene in the world through movement, manipulation, or communication.

The third is learning from the environment, adapting behavior based on feedback from real-world interactions rather than only from labeled datasets.

The fourth is real-world interaction, operating in open-ended, unstructured environments where conditions are never perfectly predictable.

How Embodied AI Works

Understanding embodied AI requires looking at its architecture from the ground up: the hardware layers that sense and act, the software models that reason, and the feedback loop that ties everything together.

Sensors (Perception Layer)

Sensors are the eyes, ears, and fingertips of an embodied AI system.

Cameras capture visual information about the environment, enabling object detection, face recognition, and scene understanding.

LiDAR (Light Detection and Ranging) emits laser pulses to create precise three-dimensional maps of surrounding spaces, essential for autonomous vehicles and mobile robots operating in complex environments.

Touch sensors and pressure-sensitive materials allow robots to gauge force, detect contact, and handle delicate objects without crushing them.

Audio sensors and microphones enable voice commands, ambient sound detection, and acoustic monitoring, adding another dimension of environmental awareness.
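To make the perception layer a little more concrete, here is a minimal sketch of how a planar LiDAR sweep might be turned into points a robot can reason about. The function name `scan_to_points` and its parameters (`angle_min`, `angle_increment`, `max_range`) are illustrative assumptions, not the API of any particular driver or robotics framework.

```python
import math

def scan_to_points(ranges, angle_min, angle_increment, max_range):
    """Convert a planar LiDAR sweep (polar readings) into (x, y) points
    expressed in the sensor's own frame. Hypothetical helper for illustration."""
    points = []
    for i, r in enumerate(ranges):
        # Skip invalid returns: no hit (inf), NaN, or beyond the trusted range.
        if not (0.0 < r <= max_range):
            continue
        theta = angle_min + i * angle_increment  # beam angle in radians
        points.append((r * math.cos(theta), r * math.sin(theta)))
    return points

# Assumed 4-beam sweep at 0, 90, 180, and 270 degrees; the third beam hit nothing.
ranges = [1.0, 2.0, float("inf"), 0.5]
points = scan_to_points(ranges, angle_min=0.0,
                        angle_increment=math.pi / 2, max_range=10.0)
```

Real sensors return hundreds or thousands of beams per sweep, and the resulting points are usually transformed again into a map or robot frame, but the polar-to-Cartesian step is the same idea.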

Brain (AI Models)

The intelligence layer is where perception data is transformed into decisions.

Computer vision models process camera and LiDAR data to identify objects, estimate distances, and understand spatial relationships.

Reinforcement learning trains agents through trial and error.

The system takes actions, receives rewards or penalties based on outcomes, and gradually learns policies that maximize success.
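The trial-and-error idea can be sketched with tabular Q-learning, one of the simplest reinforcement learning algorithms. Everything here is a toy assumption made for illustration: a five-cell corridor stands in for the world, the reward values are arbitrary, and real robots typically use far richer state representations and neural-network policies.

```python
import random

N_STATES = 5          # corridor cells 0..4; cell 4 is the goal
ACTIONS = (-1, +1)    # index 0 = step left, index 1 = step right

def step(state, action_idx):
    """Toy environment: move along the corridor; walls clamp the position."""
    next_state = min(max(state + ACTIONS[action_idx], 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    reward = 1.0 if done else -0.01   # reward at the goal, small penalty per step
    return next_state, reward, done

def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]  # q[state][action]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy: mostly exploit the best known action, sometimes explore.
            if rng.random() < epsilon:
                action = rng.randrange(2)
            else:
                action = max(range(2), key=lambda i: q[state][i])
            next_state, reward, done = step(state, action)
            # Q-learning update: nudge the estimate toward reward + discounted future value.
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
    return q

q_table = train()
greedy_policy = [max(range(2), key=lambda i: q_table[s][i])
                 for s in range(N_STATES - 1)]
```

With these settings the learned greedy policy should pick "step right" (index 1) in every non-goal cell, which is the shortest route to the reward. The agent was never told that; it inferred it from rewards and penalties alone.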

Large Language Models (LLMs) bring natural language understanding and high-level reasoning, allowing robots to interpret instructions, plan multi-step tasks, and communicate with humans.

Vision-Language Models (VLMs) bridge the visual and linguistic domains, enabling a robot to look at a scene and understand a verbal instruction simultaneously. For instance, a robot can hear “put the red cup on the left shelf” and correctly map those words to visible objects and locations.

Actuators (Action Layer)

Actuators are the muscles of an embodied AI agent.

Electric motors drive wheels, joints, and rotating components.

Robotic arms with multiple degrees of freedom allow the manipulation of objects with varying shapes and sizes.

Wheels and legs enable locomotion across different terrains.

Collectively, these mechanical systems translate the AI’s decisions into physical motion, completing the loop between thought and action.
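One way to see how a decision becomes motion is forward kinematics: given the joint angles a controller commands, where does the hand end up? The sketch below assumes a simplified planar arm with two links; the function name and the default link lengths are illustrative, and real arms have more joints and move in three dimensions.

```python
import math

def forward_kinematics(theta1, theta2, l1=1.0, l2=1.0):
    """End-effector (x, y) of a planar two-link arm.

    theta1: shoulder angle from the x-axis, in radians.
    theta2: elbow angle relative to the first link, in radians.
    l1, l2: link lengths (assumed values, in meters).
    """
    elbow_x = l1 * math.cos(theta1)
    elbow_y = l1 * math.sin(theta1)
    x = elbow_x + l2 * math.cos(theta1 + theta2)
    y = elbow_y + l2 * math.sin(theta1 + theta2)
    return x, y

# Shoulder pointing straight up, elbow bent 90 degrees clockwise.
x, y = forward_kinematics(math.pi / 2, -math.pi / 2)
# Arm fully stretched along the x-axis.
reach_x, reach_y = forward_kinematics(0.0, 0.0)
```

In the first call the upper link points straight up and the forearm points along the x-axis, so the hand sits at roughly (1, 1). Planning a grasp is the inverse problem: finding joint angles that place the hand at a desired point.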

Feedback Loop

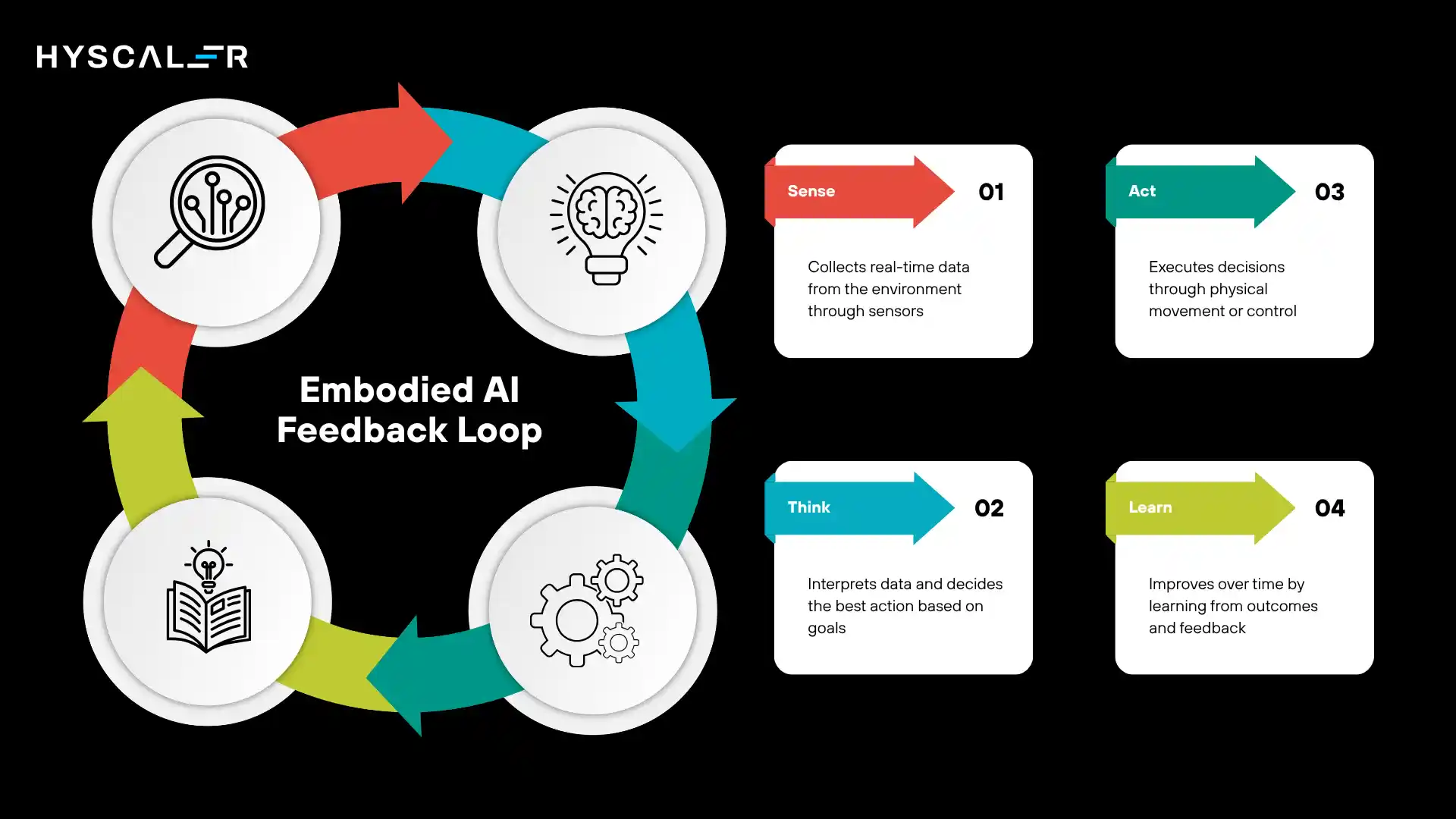

The elegance of embodied AI lies in its continuous feedback loop: Sense → Think → Act → Learn.

The agent senses the environment, processes that sensory data through its AI models, executes an action, observes what happened, and updates its internal model accordingly.

This cycle repeats thousands or millions of times, producing an agent that becomes progressively more capable through experience, much like how biological organisms develop skills through living.
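The Sense → Think → Act → Learn cycle can be sketched in a few lines. In this toy setup, which is an assumption made purely for illustration, a robot on a one-dimensional track starts with a wrong belief about how far each motor command moves it and corrects that belief from what it observes.

```python
def run_loop(target=10.0, true_gain=0.5, steps=20):
    """Toy sense-think-act-learn loop on a 1-D track (all values assumed)."""
    position = 0.0          # simulated world state, in meters
    gain_estimate = 1.0     # agent's initial (wrong) belief: meters per unit command
    learning_rate = 0.5
    for _ in range(steps):
        # Sense: read the current position.
        observed = position
        # Think: pick the command expected to close the remaining gap.
        error = target - observed
        command = error / gain_estimate
        # Act: the world responds with its true (unknown to the agent) gain.
        position += true_gain * command
        # Learn: compare the actual displacement with the command and update the belief.
        if abs(command) > 1e-9:
            measured_gain = (position - observed) / command
            gain_estimate += learning_rate * (measured_gain - gain_estimate)
    return position, gain_estimate

final_position, learned_gain = run_loop()
```

Early moves fall short because the agent overestimates its actuator, but each pass through the loop improves the estimate, and the robot ends very close to the target with a belief near the true gain. Real systems learn far more than one number, but the structure of the loop is the same.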

Key Technologies Behind Embodied AI

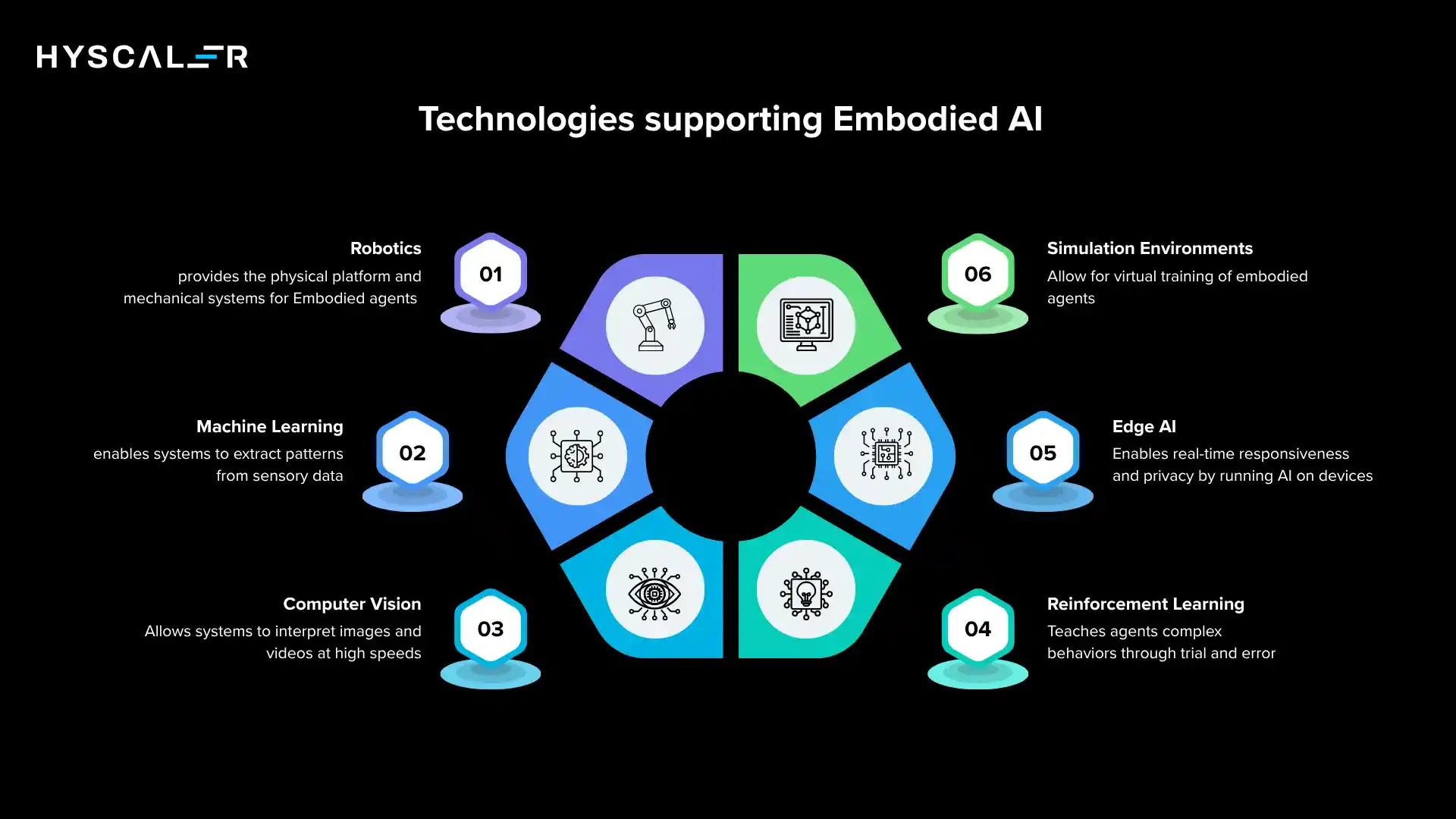

Several technological pillars support the rise of embodied AI.

Robotics provides the physical platform: the actuators, chassis, and mechanical systems refined over decades of engineering.

Machine learning, particularly deep learning, enables systems to extract meaningful patterns from high-dimensional sensory data.

Computer vision serves as the eyes of the system, making sense of images and video fast enough to guide actions in real time.

Reinforcement learning is a primary method through which embodied agents learn complex sequential behaviors that are difficult to program manually.

Edge AI, running AI models directly on the device rather than sending data to the cloud, is critical for real-time responsiveness, privacy, and operation in environments without reliable network connectivity.

Finally, simulation environments like NVIDIA Isaac Sim, Google DeepMind’s MuJoCo, and Meta’s Habitat allow researchers to train embodied agents in vast virtual worlds, accumulating millions of hours of virtual experience before deploying in reality.

Real-World Applications of Embodied AI

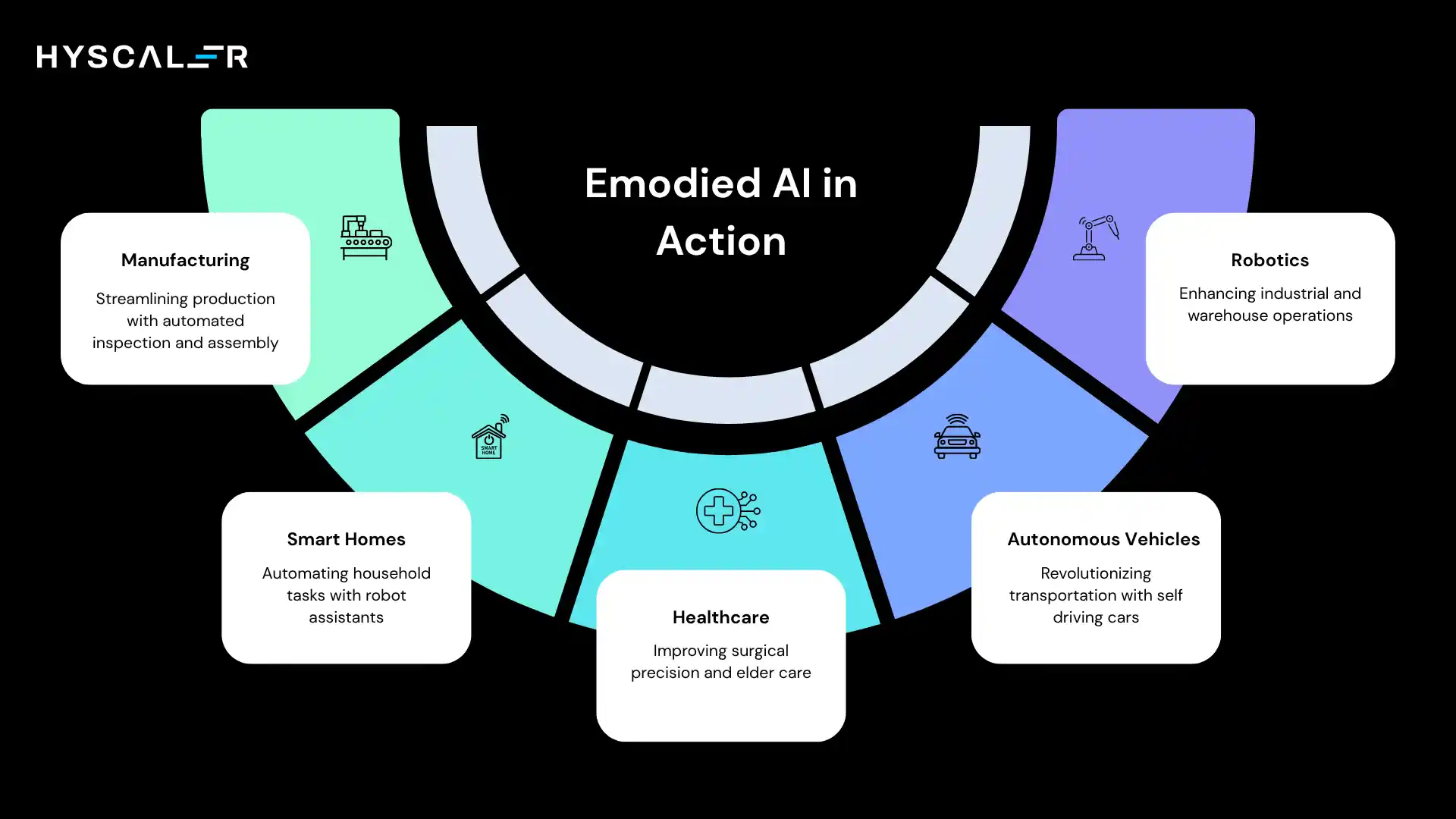

Robotics

Embodied AI has already transformed industrial robotics.

Warehouse robots from companies like Amazon Robotics navigate fulfillment centers autonomously, picking, sorting, and transporting goods with a speed and precision that human workers cannot match at scale.

Industrial robots equipped with AI vision systems can adapt to variations in parts, detect defects, and perform tasks that previously required rigid, pre-programmed routines.

Autonomous Vehicles

Self-driving cars represent one of the most ambitious embodied AI applications: machines that must perceive a chaotic urban environment, predict the behavior of other drivers and pedestrians, and make split-second decisions at high speeds.

Delivery robots operating on sidewalks and inside buildings bring last-mile logistics automation to a new level, navigating real environments rather than controlled conveyor systems.

Healthcare

Surgical robots like the da Vinci system allow surgeons to perform minimally invasive procedures with enhanced precision, tremor filtering, and a range of motion that exceeds that of the human hand.

Embodied AI is pushing these systems toward greater autonomy, with AI-guided instruments capable of executing standardized surgical steps.

Elder care robots represent a growing application area: machines that can monitor patients, assist with mobility, issue medication reminders, and provide companionship, addressing a pressing demographic challenge as populations age globally.

Smart Homes

Consumer-facing embodied AI is emerging in the home.

Robot assistants capable of understanding voice commands and navigating living spaces are moving from concept to product.

Cleaning robots have already demonstrated mass-market viability, and next-generation versions equipped with computer vision and manipulation capabilities will be able to tidy rooms, fold laundry, and manage household tasks far beyond vacuuming.

Manufacturing

On the factory floor, embodied AI is powering automated quality inspection systems that use cameras and AI vision to catch defects at rates and consistencies that manual inspection cannot achieve.

Automated assembly lines featuring robotic arms trained with reinforcement learning can adapt to product variations, work alongside human operators, and reconfigure for new products far more rapidly than traditional fixed automation.

Embodied AI vs Traditional AI

| Feature | Traditional AI | Embodied AI |
|---|---|---|
| Environment | Digital | Physical |
| Interaction | Data only | Real world |
| Action | No physical action | Physical movement |
| Learning | Static datasets | Real-time experience |
| Example | ChatGPT | Humanoid robot |

The contrast is fundamental.

Traditional AI, including even the most advanced language models, exists entirely in the world of symbols and tokens.

It reads text, generates text, and has no awareness that chairs, rain, or gravity exist except as concepts in training data.

Embodied AI must contend with physical reality directly: objects move, environments change, and actions have irreversible consequences.

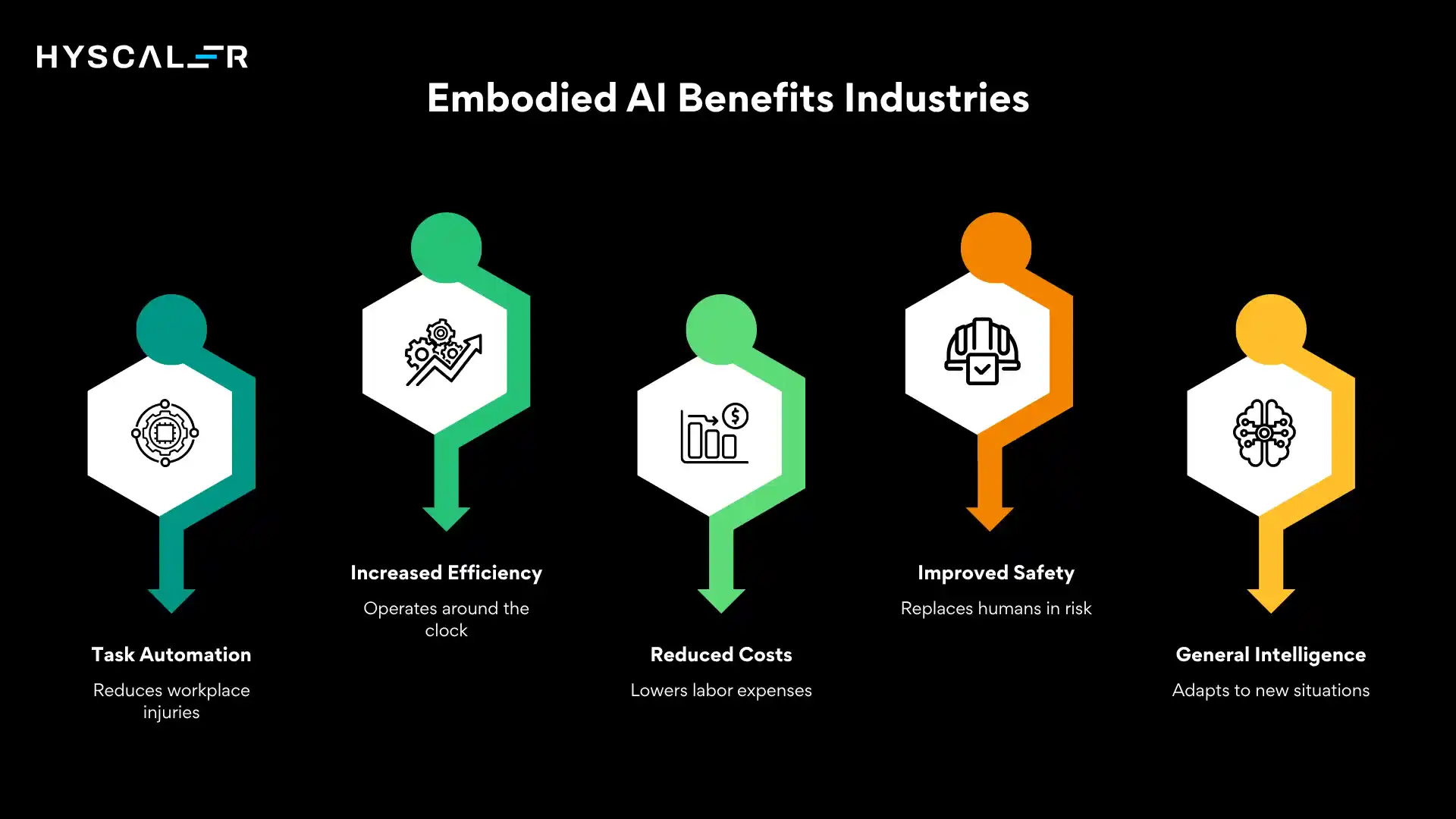

Benefits of Embodied AI

The potential benefits of embodied AI are substantial and span virtually every industry.

Physical task automation allows machines to take over work that is dangerous, repetitive, or physically demanding, reducing workplace injuries and freeing human workers for higher-value activities.

Increased efficiency follows naturally from machines that do not tire, do not require breaks, and can operate around the clock.

Reduced labor requirements lower costs in industries such as logistics and manufacturing, where labor accounts for a significant share of operating expenses.

Improved safety is achievable in hazardous environments, such as mining, chemical plants, and nuclear facilities, where AI-driven robots can replace humans in high-risk roles.

Perhaps most importantly, embodied AI creates a form of real-world intelligence that can generalize across tasks, adapting to new situations in ways that rigid automation cannot.

Challenges of Embodied AI

The path to widespread embodied AI is steep.

High cost remains a significant barrier; sophisticated robots with advanced AI capabilities carry price tags that limit deployment to well-funded operations.

Hardware complexity means that mechanical systems break down, require maintenance, and face fundamental engineering constraints that software systems do not.

Safety risks are a serious concern: a software bug in a language model could produce an incorrect answer; a bug in an embodied AI system could cause a robot to injure a person or damage property.

Training difficulty is another hurdle; teaching an agent to reliably perform complex physical tasks requires either vast amounts of real-world experience or sophisticated simulation, both of which are expensive.

Finally, real-world unpredictability is the deepest challenge: the physical world is messy, variable, and full of edge cases that even the most carefully designed AI systems will encounter unexpectedly.

Embodied AI and the Future of Robotics

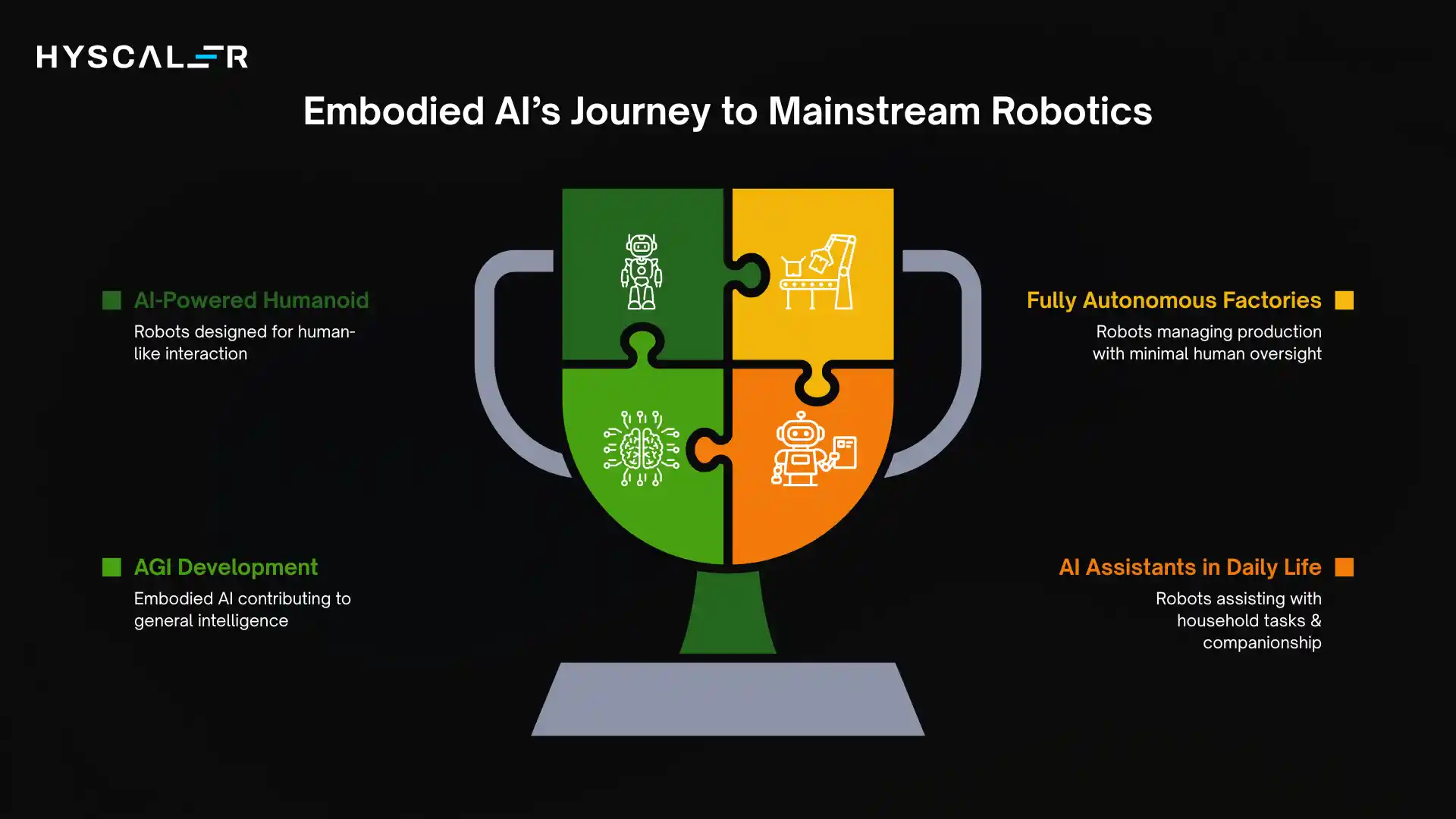

The trajectory of embodied AI points toward a future where intelligent robots are as common as smartphones.

AI-powered humanoid robots, machines that walk upright, use their hands with human-like dexterity, and interact naturally with people and environments designed for humans, are moving rapidly from research labs to production lines.

Fully autonomous factories where fleets of embodied AI systems design, manufacture, inspect, and ship products with minimal human oversight are becoming technically feasible.

AI assistants in daily life, robots that help with cooking, cleaning, personal care, and companionship, will transform the domestic sphere.

This trajectory is closely connected to the development of Artificial General Intelligence (AGI).

Many researchers believe that true general intelligence cannot be achieved in the digital domain alone and that physical embodiment and real-world interaction are prerequisites for the kind of flexible, adaptive intelligence that humans possess.

Several companies are leading this charge.

Tesla’s Optimus humanoid robot is designed to perform general-purpose tasks in factories and homes, leveraging the same AI stack developed for Tesla’s autonomous vehicles.

Figure AI is developing a humanoid robot intended for commercial deployment in industrial settings, with backing from major technology and automotive companies.

Boston Dynamics, long known for its technically impressive but commercially nascent robots, is pushing toward practical deployment of its Atlas and Spot platforms in industrial and inspection roles.

These companies represent the vanguard of a field that is moving faster than most observers anticipated.

Embodied AI vs Generative AI

| Characteristic | Generative AI | Embodied AI |
|---|---|---|
| Purpose | Produce new content (text, images, code, audio) | Interact with and act in the physical world |
| Output | Digital artifacts (documents, designs, responses) | Physical actions (movement, manipulation, task execution) |
| Domain | Fully digital (cloud, software systems) | Real-world environments (homes, factories, streets) |
| Input | Prompts, text, images, structured data | Sensor data (vision, touch, sound, spatial awareness) |
| Example | Report on warehouse organization | Robot organizing a warehouse |
| Interaction Style | Conversational / prompt-based | Continuous perception → decision → action loop |
| Learning Approach | Trained on large datasets (offline training dominant) | Combines training + real-time learning from the environment |
| Feedback Loop | Limited (user feedback, fine-tuning) | Continuous real-world feedback (trial, error, adaptation) |
| Autonomy Level | Low to moderate (depends on user input) | High (can act independently in dynamic environments) |
| Error Impact | Usually low (incorrect output can be edited) | High (mistakes can cause physical or safety issues) |
| Speed of Iteration | Fast (instant responses) | Slower (depends on physical execution and constraints) |
| Infrastructure | GPUs, cloud platforms, APIs | Robots, sensors, actuators + AI models |
| Use Cases | Content creation, coding, customer support, design | Robotics, autonomous vehicles, smart manufacturing |
| Combination | Acts as the “brain interface” (planning, reasoning) | Acts as the “body” executing real-world tasks |

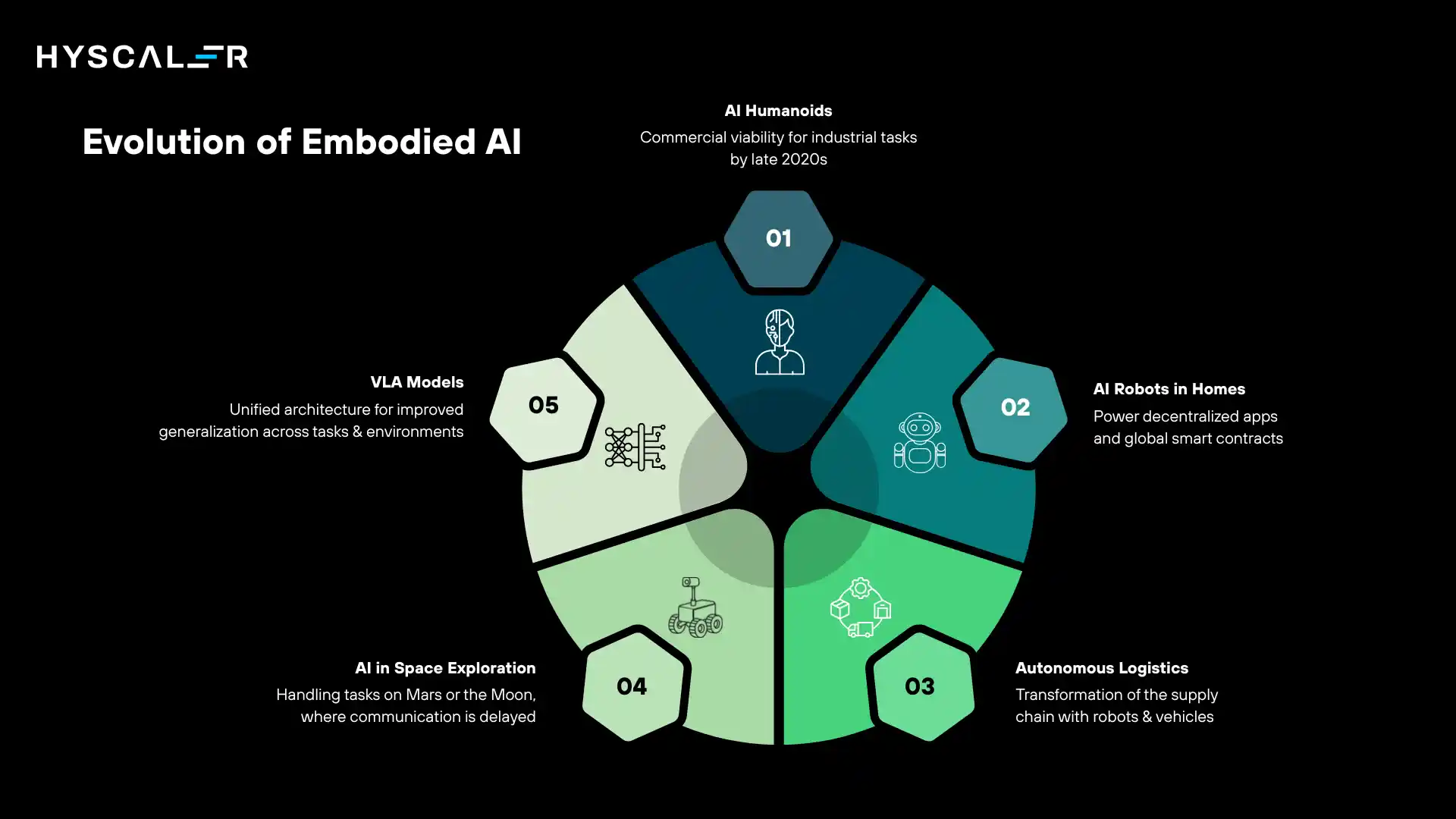

Future Trends of Embodied AI

Several trends will define the evolution of embodied AI over the coming decade.

AI-powered humanoids are expected to become commercially viable for industrial tasks by the late 2020s, with costs decreasing as production scales.

AI robots in homes will become a reality for personal assistance, elder care, and domestic tasks as hardware becomes cheaper and AI becomes more capable.

Autonomous logistics, from fulfillment centers to delivery vehicles to last-mile robots, will transform the supply chain.

AI in space exploration represents a frontier application, with embodied AI systems handling tasks on Mars or the Moon where communication delays make remote human control impractical.

And Vision-Language-Action (VLA) models, which combine visual perception, language understanding, and action planning in a single unified model, are emerging as the architecture of choice for next-generation embodied AI agents, promising dramatically improved generalization across tasks and environments.
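The communication-delay point in the space exploration trend above is easy to check with a little arithmetic. The sketch below uses approximate, rounded Earth–Mars distances as assumptions (the real distance varies continuously with the planets' orbits) and computes how long a radio signal takes to travel one way.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458  # speed of light in vacuum, km/s

def one_way_delay_minutes(distance_km):
    """Time for a radio signal to cover distance_km, in minutes."""
    return distance_km / SPEED_OF_LIGHT_KM_S / 60.0

# Approximate Earth-Mars distances (assumed round figures for illustration).
MARS_CLOSEST_KM = 54.6e6
MARS_FARTHEST_KM = 401e6

closest_delay = one_way_delay_minutes(MARS_CLOSEST_KM)    # roughly 3 minutes
farthest_delay = one_way_delay_minutes(MARS_FARTHEST_KM)  # roughly 22 minutes
round_trip_worst = 2 * farthest_delay                      # command out, result back
```

Even in the best case, an operator on Earth would wait several minutes to see the result of a single command, which is why a rover that has to avoid a rock or recover from a slip needs to make that decision itself.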

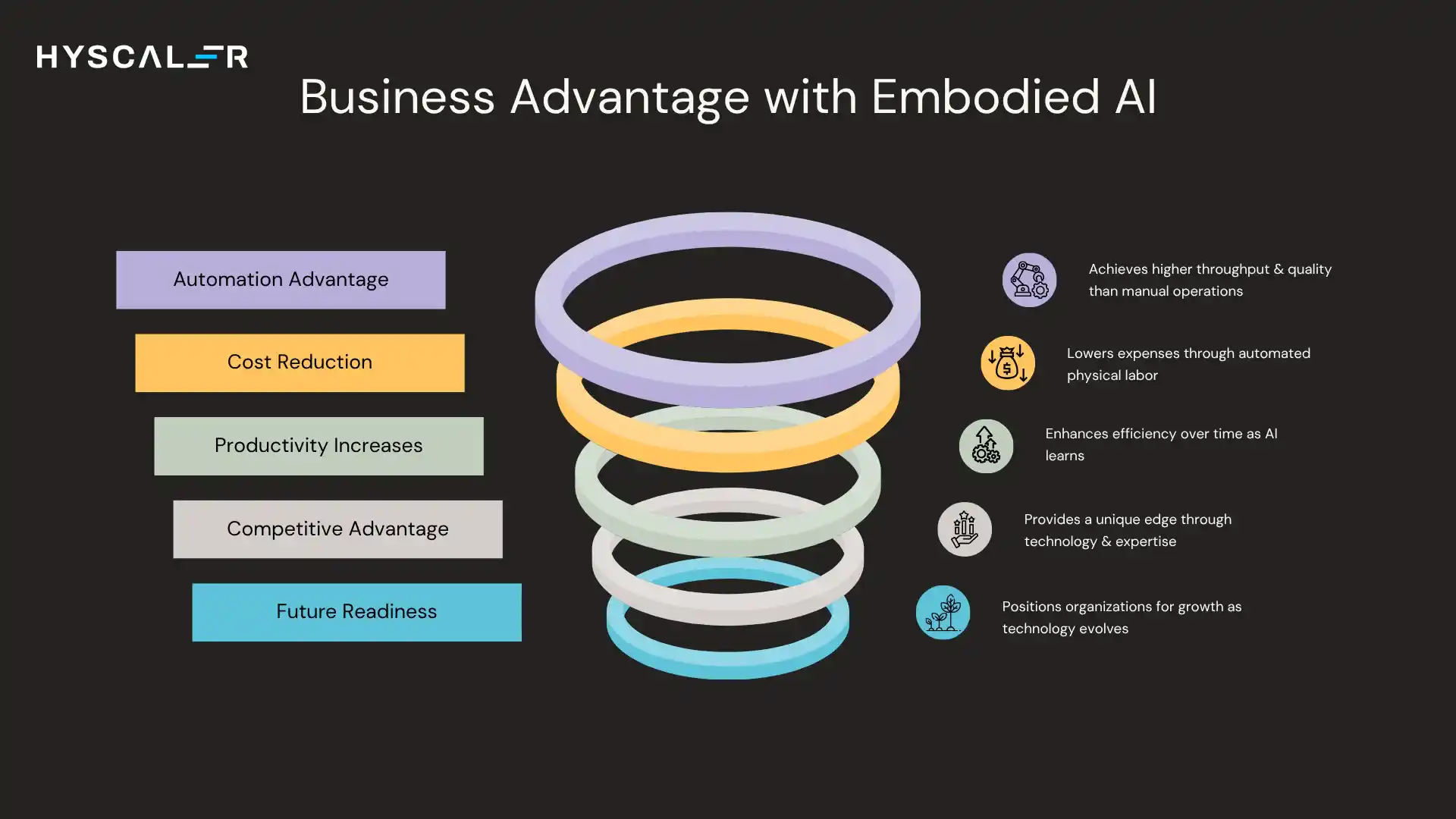

Why Embodied AI Matters for Businesses

For businesses, embodied AI is not a distant future concern; it is an emerging competitive frontier.

The automation advantage it confers will be decisive in labor-intensive industries: companies that deploy embodied AI early will achieve throughput and quality levels that manual operations cannot match.

Cost reduction through automation of physical labor is quantifiable and significant, particularly in manufacturing, warehousing, and logistics.

Productivity gains compound over time as AI systems learn from experience and become more capable.

Ultimately, embodied AI represents a source of competitive advantage that is difficult to replicate without serious investment in both technology and operational know-how.

Organizations that begin building expertise and infrastructure now will be better positioned to scale as the technology matures.

Conclusion

Embodied AI represents the next great leap in the development of artificial intelligence: the moment when AI moves from thinking to acting, from the digital world to the physical one.

It is a synthesis of robotics, machine learning, computer vision, and cognitive science, brought together to create machines that can perceive, reason, and operate within the same messy, dynamic world that humans inhabit.

The transformation this will bring to industries such as manufacturing, logistics, healthcare, agriculture, and construction will be profound.

Daily life will be reshaped by AI-driven assistants and autonomous systems that handle tasks ranging from the mundane to the dangerous.

And the deeper scientific and philosophical questions that embodied AI forces us to confront, about the nature of intelligence, the relationship between mind and body, and what it means for machines to understand the world, will drive some of the most important research of our time.

The journey from narrow software tools to general-purpose physical agents will not be immediate, and it will not be without challenges. But the direction is clear.

Embodied AI is not just software anymore; it is intelligence that can move, interact, and transform the real world.

FAQs

What is embodied AI in simple terms?

Embodied AI is artificial intelligence that lives inside a physical body, like a robot or a self-driving car, allowing it to perceive and interact with the real world through sensors and movement, rather than just processing data on a computer.

What is an example of embodied AI?

A warehouse robot that navigates aisles, identifies products with cameras, and picks them up with a robotic arm is a clear example of embodied AI. Self-driving cars, surgical robots, and robotic home assistants are other prominent examples.

Is embodied AI the future?

Many researchers and tech companies see embodied AI as key to the next wave of AI, including progress toward AGI. With rapid advances in humanoid robots and autonomy, it’s expected to be transformative in the coming decade.

What is the difference between robotics and embodied AI?

Traditional robotics follows pre-programmed rules, repeating fixed tasks like an assembly-line robot arm. Embodied AI, in contrast, enables robots to perceive, learn, adapt, and make decisions in dynamic environments. In short, robotics provides the body, while embodied AI provides the intelligence.