
In today’s tech landscape, AI is ubiquitous, enhancing efficiency across sectors. Yet, ensuring fairness, transparency, and accountability is critical. AI auditing tools address this need, offering functionalities to assess, monitor, and mitigate biases in AI systems. They allow organizations to scrutinize models, ensuring compliance with ethical standards and regulations.

AI auditing tools help data teams identify model weaknesses and optimize for fairness. Just as network auditing tools scan infrastructure for vulnerabilities, AI auditing tools scan algorithms for discriminatory patterns. Just as software auditing tools verify code quality and compliance, AI auditing tools verify that machine learning models behave responsibly across diverse populations.

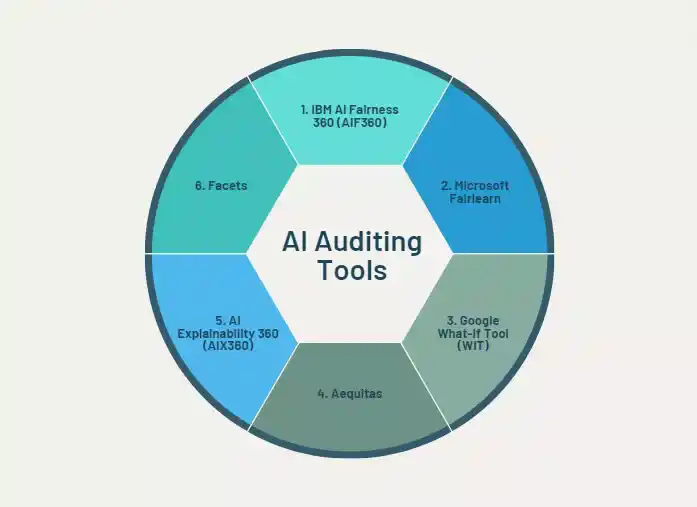

By leveraging these tools, stakeholders gain insights into AI systems, identifying and rectifying bias. Moreover, they facilitate ongoing monitoring, helping organizations adapt to evolving ethical standards. In this article, we explore six top AI auditing tools, their features, applications, and significance in promoting ethical AI solutions. Through their adoption, organizations can instill trust, accountability, and transparency in AI technologies.

What Is an AI Auditing Tool?

An AI auditing tool is a software framework or toolkit designed to systematically examine artificial intelligence systems, particularly machine learning models, for bias, unfairness, opacity, and unintended behavior. Much like a financial audit verifies the integrity of accounts, an AI audit verifies that a model behaves ethically, equitably, and transparently across different populations and scenarios.

These tools give data scientists, policymakers, and business stakeholders a structured way to ask:

- Who does this model hurt?

- Why did it make this decision?

- Is it treating everyone fairly?

Think of AI auditing tools as the equivalent of website auditing tools in the digital marketing world; both systematically expose problems that are not visible on the surface. Just as Active Directory auditing tools track who accessed what and when inside enterprise systems, AI auditing tools track how models make decisions and where they go wrong.

6 Leading AI Auditing Tools

1. IBM AI Fairness 360 (AIF360)

IBM AI Fairness 360 (AIF360) is a robust toolkit engineered to identify and rectify biases within machine learning models and datasets. Equipped with an extensive range of pre-established fairness metrics and bias mitigation algorithms, AIF360 facilitates a meticulous examination of model fairness across diverse demographic segments and application domains.

Key Applications

- Mitigating bias in lending and credit-scoring decisions

- Ensuring fairness in healthcare diagnostic algorithms

- Accountability checks in criminal justice risk tools

- Bias auditing in recruitment and hiring pipelines

How to Use

- Feed datasets and models into the AIF360 pipeline

- Select from pre-built fairness metrics (e.g., disparate impact)

- Apply built-in mitigation algorithms (pre-, in-, or post-processing)

- Customize adjustments to match your specific use case
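
To make the metric and mitigation steps concrete, here is a minimal plain-Python sketch of disparate impact and of reweighing, a standard pre-processing technique. It does not call AIF360 itself (the toolkit offers its own implementations of both ideas); the toy records, group names, and function names are assumptions made for illustration.

```python
from collections import Counter

# Hypothetical toy data: (group, label) pairs, where label 1 is the favorable outcome.
# "a" plays the privileged group and "b" the unprivileged group.
records = [("a", 1)] * 6 + [("a", 0)] * 4 + [("b", 1)] * 3 + [("b", 0)] * 7


def favorable_rate(rows, group, weights=None):
    """Weighted share of favorable labels within one group."""
    if weights is None:
        weights = [1.0] * len(rows)
    total = sum(w for (g, _), w in zip(rows, weights) if g == group)
    favorable = sum(w for (g, y), w in zip(rows, weights) if g == group and y == 1)
    return favorable / total


def disparate_impact(rows, unprivileged, privileged, weights=None):
    """Ratio of favorable rates (unprivileged / privileged); 1.0 means parity."""
    return favorable_rate(rows, unprivileged, weights) / favorable_rate(
        rows, privileged, weights
    )


def reweighing_weights(rows):
    """Weight each row by P(group) * P(label) / P(group, label), so that
    group membership and label look statistically independent."""
    n = len(rows)
    group_counts = Counter(g for g, _ in rows)
    label_counts = Counter(y for _, y in rows)
    cell_counts = Counter(rows)
    return [
        (group_counts[g] / n) * (label_counts[y] / n) / (cell_counts[(g, y)] / n)
        for g, y in rows
    ]


di_before = disparate_impact(records, "b", "a")
weights = reweighing_weights(records)
di_after = disparate_impact(records, "b", "a", weights)

print(f"disparate impact before reweighing: {di_before:.2f}")
print(f"disparate impact after reweighing:  {di_after:.2f}")
```

On this toy data the ratio starts well below parity and moves to parity once the weights are applied. A real audit would pass those weights to a model's training step and then re-measure fairness on the model's predictions, not only on the labels.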

2. Microsoft Fairlearn

Microsoft Fairlearn is an open-source Python toolkit that assesses and mitigates unfairness in machine learning models, with a focus on group fairness metrics such as demographic parity and equalized odds. Much like how software auditing tools integrate into CI/CD pipelines to check code quality, Fairlearn integrates into existing ML workflows to continuously check model fairness.

Key Applications

- Identifying demographic biases in lending decisions

- Eliminating discrimination in candidate hiring workflows

- Auditing predictive policing models for systemic bias

- Comparing fairness trade-offs across model versions

How to Use

- Integrate Fairlearn into existing ML workflows via its Python package

- Assess fairness using built-in metrics like demographic parity and equalized odds

- Explore mitigation strategies (reductions, threshold optimization)

- Use the dashboard to compare model performance vs. fairness
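
The performance-versus-fairness comparison in these steps can be illustrated without the library. The sketch below is plain Python, not Fairlearn's API: it computes per-group rates, then summarizes them as a demographic parity difference and an equalized odds difference (the larger of the true-positive-rate gap and the false-positive-rate gap). The labels, the predictions, and the two model versions are hypothetical.

```python
def rate(values):
    """Mean of a list of 0/1 values; 0.0 for an empty list."""
    return sum(values) / len(values) if values else 0.0


def by_group(y_true, y_pred, groups):
    """Per-group selection rate, true positive rate, and false positive rate."""
    report = {}
    for g in sorted(set(groups)):
        rows = [(t, p) for t, p, grp in zip(y_true, y_pred, groups) if grp == g]
        report[g] = {
            "selection_rate": rate([p for _, p in rows]),
            "tpr": rate([p for t, p in rows if t == 1]),
            "fpr": rate([p for t, p in rows if t == 0]),
        }
    return report


def gap(report, metric):
    """Largest between-group difference for one metric."""
    values = [m[metric] for m in report.values()]
    return max(values) - min(values)


def summarize(y_true, y_pred, groups):
    """Overall accuracy next to two group-fairness gaps."""
    report = by_group(y_true, y_pred, groups)
    return {
        "accuracy": rate([int(t == p) for t, p in zip(y_true, y_pred)]),
        "demographic_parity_difference": gap(report, "selection_rate"),
        "equalized_odds_difference": max(gap(report, "tpr"), gap(report, "fpr")),
    }


# Hypothetical labels and predictions from two versions of a model.
groups = ["a"] * 4 + ["b"] * 4
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
model_v1 = [1, 1, 0, 0, 1, 0, 0, 0]
model_v2 = [1, 0, 0, 0, 1, 0, 0, 0]

v1 = summarize(y_true, model_v1, groups)
v2 = summarize(y_true, model_v2, groups)
print("v1:", v1)
print("v2:", v2)
```

In this toy case the second version closes both fairness gaps but loses accuracy, which is the kind of trade-off the comparison step is meant to surface.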

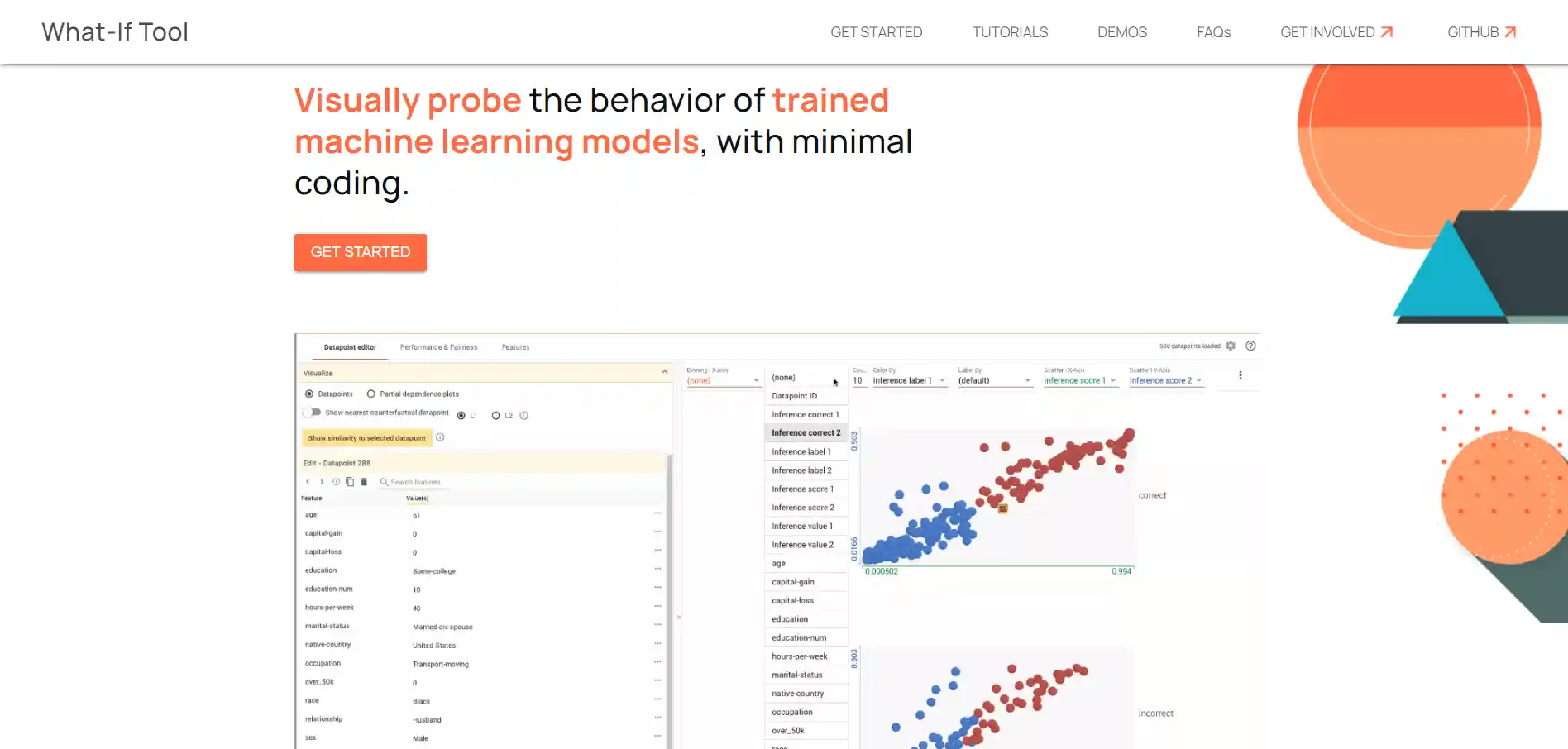

3. Google What-If Tool (WIT)

Google’s What-If Tool (WIT) aids AI auditing through interactive exploration and visualization of model predictions. Users manipulate input features to observe prediction changes, revealing biases or inconsistencies. Think of WIT as the AI counterpart to an intuitive website auditing tool: it gives you a live, visual preview of how your model behaves across demographics.

Key Applications

- Visualizing how patient demographics affect medical model outputs

- Detecting if hiring recommendations differ by protected characteristics

- Comparing outcomes across demographic groups side-by-side

- Interactive scenario testing to stress-test model behavior

How to Use

- Input your dataset and connect to a hosted or local model

- Adjust individual feature values and watch predictions update live

- Compare predictions between demographic subgroups visually

- Export insights and share results for team-wide transparency
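
WIT does this kind of probing through a visual interface, but the underlying idea can be shown in a few lines. The following is a sketch in plain Python, not WIT code: `predict` is a hypothetical stand-in model with a deliberate penalty on one group, and `what_if` and `flip_rate` are illustrative helper names.

```python
def predict(applicant):
    """Hypothetical stand-in model: approves when a hand-written score clears 0.5.
    The penalty on group "b" is deliberate, so the probe has something to find."""
    score = 0.4 * (applicant["income"] / 100_000) + 0.4 * (
        applicant["credit_score"] / 850
    )
    if applicant["group"] == "b":
        score -= 0.1
    return int(score >= 0.5)


def what_if(applicant, feature, values):
    """Re-run the model while varying one feature and holding the rest fixed."""
    return {value: predict({**applicant, feature: value}) for value in values}


def flip_rate(applicants, feature, new_value):
    """Share of rows whose prediction changes when only `feature` is edited."""
    flips = sum(predict(a) != predict({**a, feature: new_value}) for a in applicants)
    return flips / len(applicants)


applicants = [
    {"income": 60_000, "credit_score": 700, "group": "b"},
    {"income": 90_000, "credit_score": 780, "group": "b"},
    {"income": 40_000, "credit_score": 600, "group": "b"},
]

# Vary income for the first applicant and watch the decision change.
income_probe = what_if(applicants[0], "income", [60_000, 80_000, 100_000])
print(income_probe)

# Edit only the protected attribute and count how many decisions flip.
group_flip_rate = flip_rate(applicants, "group", "a")
print(f"decisions that flip when group is edited: {group_flip_rate:.2f}")
```

A non-zero flip rate on a protected attribute is a signal worth investigating, since nothing else about those applicants changed.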

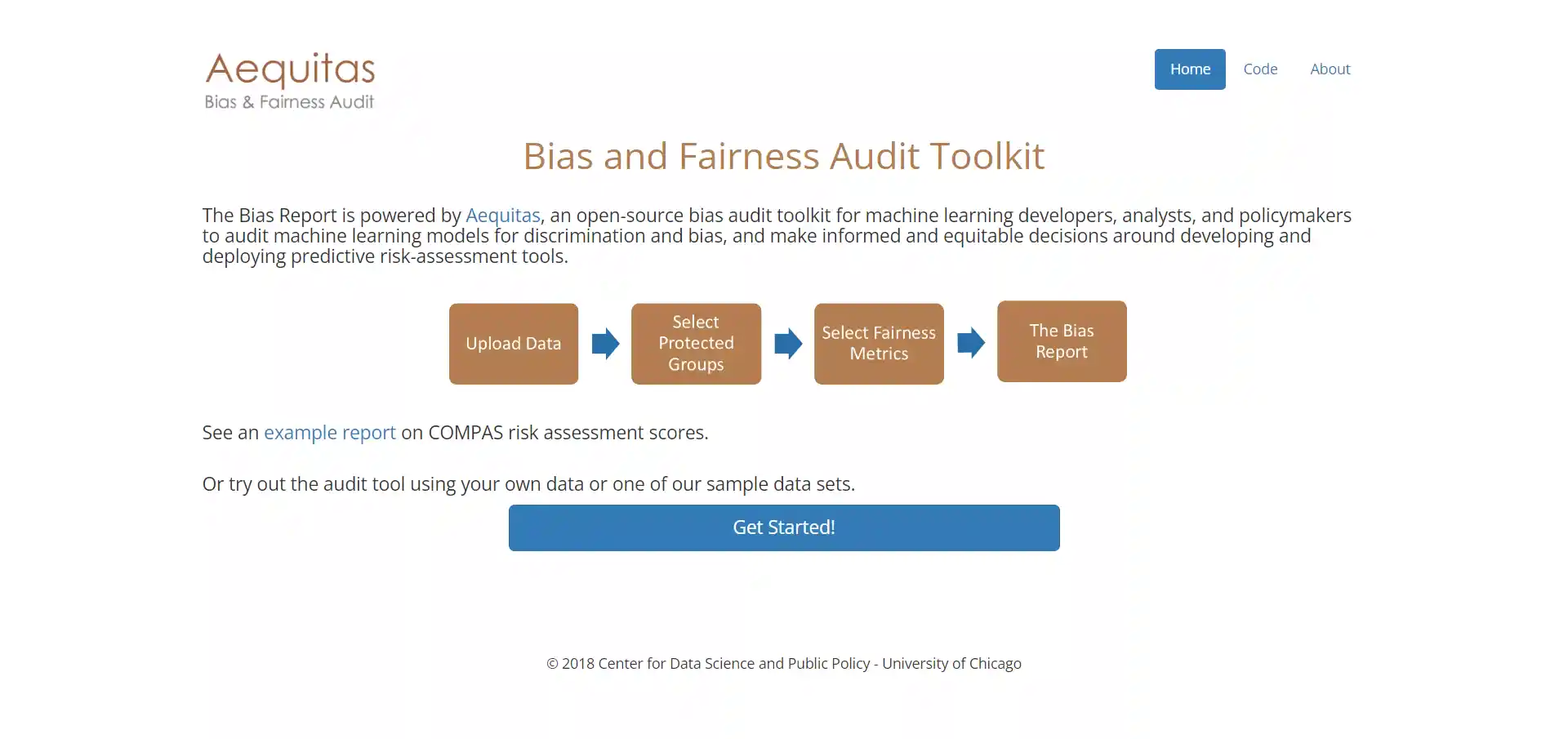

4. Aequitas

Aequitas is an open-source bias audit toolkit that detects and mitigates bias across diverse demographic groups, fostering fairness and equity. Just as network auditing tools generate detailed reports on infrastructure health, Aequitas generates detailed fairness reports on model health, broken down by group, metric, and risk level.

Key Applications

- Ensuring fair access to financial services in lending

- Eliminating bias in recruitment and candidate selection

- Auditing risk assessment tools in criminal justice systems

- Promoting equitable decision-making in healthcare

How to Use

- Input ML model predictions alongside demographic attributes

- Review automatically computed fairness metrics and visual reports

- Identify disparities across demographic subgroups

- Apply mitigation strategies and re-audit to validate improvement
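
A group-level disparity report of the kind described above can be sketched as follows. This is plain Python, not the Aequitas API. The `score` and `label_value` keys mirror the column names Aequitas documents for its input data; the groups, the counts, and the 0.8 tolerance (borrowed from the four-fifths rule) are assumptions for illustration.

```python
def make_rows(group, false_pos, true_neg, true_pos):
    """Build hypothetical audit rows: binary prediction, true label, group."""
    return (
        [{"score": 1, "label_value": 0, "group": group}] * false_pos
        + [{"score": 0, "label_value": 0, "group": group}] * true_neg
        + [{"score": 1, "label_value": 1, "group": group}] * true_pos
    )


def false_positive_rate(rows):
    """Share of true negatives that the model flagged as positive."""
    negatives = [r for r in rows if r["label_value"] == 0]
    return sum(r["score"] for r in negatives) / len(negatives)


def disparity_report(rows, attribute, reference, tau=0.8):
    """Compare each group's FPR with the reference group's FPR.
    A group passes when the ratio stays within [tau, 1 / tau]."""
    groups = sorted({r[attribute] for r in rows})
    fpr = {
        g: false_positive_rate([r for r in rows if r[attribute] == g]) for g in groups
    }
    report = {}
    for g in groups:
        ratio = fpr[g] / fpr[reference]
        report[g] = {
            "fpr": fpr[g],
            "fpr_disparity": ratio,
            "fair": tau <= ratio <= 1 / tau,
        }
    return report


rows = make_rows("x", 2, 8, 5) + make_rows("y", 5, 5, 5) + make_rows("z", 1, 4, 3)
report = disparity_report(rows, attribute="group", reference="x")
for group, metrics in report.items():
    print(group, metrics)
```

Here group `y` is falsely flagged far more often than the reference group and fails the check, while `z` passes. The same pattern extends to other metrics, such as false negative rate or predicted positive rate.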

5. AI Explainability 360 (AIX360)

AI Explainability 360 (AIX360) enhances machine learning model interpretability by revealing how features influence decisions, ensuring transparency and accountability. In regulated industries, much like how Active Directory auditing tools provide a clear audit trail of user actions for compliance, AIX360 provides a clear audit trail of model decisions, satisfying regulators who demand explainability.

Key Applications

- Debugging biased models by revealing how features drive outputs

- Meeting regulatory compliance requirements with decision explanations

- Building patient and clinician trust in healthcare diagnostics

- Providing clear explanations for financial risk assessments

How to Use

- Select an explainability algorithm suited to your model type

- Generate global explanations to understand overall model behavior

- Produce local explanations for individual predictions

- Present explanations to stakeholders to reinforce trust and accountability
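
The difference between local and global explanations is easiest to see on a linear model, where each feature's contribution is simply its weight times its distance from a baseline. The sketch below uses that generic technique in plain Python; it is not one of AIX360's algorithms, and the weights, feature names, and rows are hypothetical.

```python
from statistics import mean

# Hypothetical linear risk model: score = BIAS + sum(weight * feature value).
WEIGHTS = {"debt_ratio": 2.0, "late_payments": 0.5, "years_employed": -0.1}
BIAS = 0.1


def score(row):
    """Model output for one row."""
    return BIAS + sum(WEIGHTS[f] * row[f] for f in WEIGHTS)


def local_explanation(row, baseline):
    """Per-feature contribution to this row's score relative to the baseline row."""
    return {f: WEIGHTS[f] * (row[f] - baseline[f]) for f in WEIGHTS}


def global_explanation(rows, baseline):
    """Mean absolute contribution of each feature across the dataset."""
    return {
        f: mean(abs(local_explanation(r, baseline)[f]) for r in rows) for f in WEIGHTS
    }


rows = [
    {"debt_ratio": 0.2, "late_payments": 0, "years_employed": 10},
    {"debt_ratio": 0.6, "late_payments": 4, "years_employed": 2},
    {"debt_ratio": 0.4, "late_payments": 2, "years_employed": 6},
]
# Use the average applicant as the point of comparison.
baseline = {f: mean(r[f] for r in rows) for f in WEIGHTS}

local = local_explanation(rows[1], baseline)
overall = global_explanation(rows, baseline)
top_feature = max(overall, key=overall.get)

print("local contributions for applicant 2:", local)
print("most influential feature overall:", top_feature)
```

For a linear model the local contributions add up exactly to the gap between the applicant's score and the baseline score, which makes the explanation easy to check. Non-linear models need approximation methods, which is where a dedicated toolkit earns its place.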

6. Facets

Facets is a powerful visualization suite that helps users explore dataset statistics, distributions, and inter-feature relationships with ease. Think of it the way organisations think of SEO auditing tools for content gap analysis: it surfaces what’s missing or imbalanced in your dataset before you invest in training a model.

Key Applications

- Data preprocessing and cleaning before model training

- Detecting structural biases embedded within raw datasets

- Quality assessment to ensure data integrity at scale

- Exploratory analysis to understand feature distributions

How to Use

- Upload your dataset directly into the Facets interface

- Explore statistics and distributions across all features interactively

- Identify patterns, anomalies, and potential sources of bias

- Use insights to inform data cleaning and feature engineering decisions
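
The kind of per-feature summary that Facets presents visually can be approximated in a few lines. This is a plain-Python sketch, not Facets code: the `overview` function and the sample rows are assumptions for illustration. It reports missing values, numeric ranges, and the most common categorical values, which is often enough to spot an imbalanced or incomplete column.

```python
from collections import Counter
from statistics import mean


def overview(rows):
    """Summarize each feature: counts, missing values, and a basic distribution."""
    features = sorted({key for row in rows for key in row})
    stats = {}
    for f in features:
        present = [row[f] for row in rows if row.get(f) is not None]
        entry = {"count": len(present), "missing": len(rows) - len(present)}
        if present and all(isinstance(v, (int, float)) for v in present):
            # Numeric feature: report range and mean.
            entry.update(min=min(present), max=max(present), mean=mean(present))
        else:
            # Categorical feature: report the most frequent values.
            entry["top_values"] = Counter(present).most_common(3)
        stats[f] = entry
    return stats


# Hypothetical dataset with gaps and a skewed categorical column.
rows = [
    {"age": 34, "gender": "f", "income": 52_000},
    {"age": 45, "gender": "m", "income": None},
    {"age": 29, "gender": "m", "income": 48_000},
    {"age": 51, "gender": "m", "income": 61_000},
    {"age": None, "gender": "m", "income": 39_000},
]

stats = overview(rows)
for feature, entry in stats.items():
    print(feature, entry)
```

In this sample the `gender` column is dominated by one value and two columns have gaps, both of which are worth resolving before training.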

Wrapping Up: The Role of AI Auditing Tools

AI auditing tools are vital for fairness, transparency, and accountability in AI systems. They detect and mitigate biases, explain model decisions, and ensure data integrity. The modern enterprise now runs on multiple auditing disciplines: SEO auditing tools keep your web presence healthy, network auditing tools keep your infrastructure secure, software auditing tools keep your code reliable, website auditing tools keep your user experience optimized, and Active Directory auditing tools keep your identity ecosystem compliant. AI auditing tools complete this picture by keeping your machine learning models ethical and trustworthy.

As AI permeates society, adopting these tools becomes imperative to prevent unintended consequences and promote ethical AI use. Incorporating AI auditing tools demonstrates a commitment to ethical AI and societal well-being.

FAQs

What is the difference between AI auditing tools and traditional software auditing tools?

Software auditing tools check code quality and compliance; they focus on what software does. AI auditing tools go deeper, examining how a model makes decisions and whether those decisions are fair across demographic groups.

Can AI auditing tools integrate with network auditing tools for end-to-end infrastructure monitoring?

Yes. Enterprise teams increasingly combine network auditing tools with AI auditing pipelines for holistic monitoring, especially since anomaly detection models used in network security should themselves be audited for bias.

How do AI auditing tools compare to SEO auditing tools in terms of complexity?

SEO auditing tools operate on clear, rule-based criteria like broken links or page speed. AI auditing is more contextual; there is rarely one correct fairness metric, and different stakeholders may prioritize different definitions of fairness.

Which AI auditing tool is best for beginners?

Google’s What-If Tool is the most beginner-friendly due to its visual, no-code interface. For Python users, Microsoft Fairlearn is the next best option. IBM AIF360 suits experienced data scientists.

How often should organizations run AI audits?

Audits should occur (1) before deployment, (2) after retraining or a data refresh, and (3) on a scheduled quarterly or annual basis, similar to how compliance teams use software auditing tools during regulatory review cycles.

Is there a free, open-source alternative to paid enterprise AI auditing tools?

Yes. IBM AIF360, Fairlearn, What-If Tool, Aequitas, AIX360, and Facets are all free, open-source projects available on GitHub.

Which industries face the strictest regulatory requirements for AI auditing?

Financial services, healthcare, hiring, criminal justice, and government sectors face the strictest requirements. These industries also tend to rely heavily on Active Directory auditing tools and network auditing tools, making AI auditing a natural governance extension.