
Generative AI has changed quickly, and each new generation of models has been better than the last.

What began as experimental pilots with ChatGPT and similar tools has evolved into a fundamental question every enterprise must answer: How do we deploy AI at scale while maintaining control, security, and competitive advantage?

The explosion of generative AI adoption across industries has created an urgent need for strategic clarity.

Enterprises are no longer asking if they should adopt AI, but how, and the choice between public AI vs private AI models carries profound implications for data security, cost structure, competitive positioning, and regulatory compliance.

This isn’t a simple binary choice.

The reality facing enterprise leaders in 2026 is a delicate balance: speed versus control, convenience versus security, and immediate value versus long-term strategic advantage.

This guide will help you understand the trade-offs between Public AI vs Private AI, explore how leading enterprises are building their AI strategies in practice, and determine which approach best fits your organization.

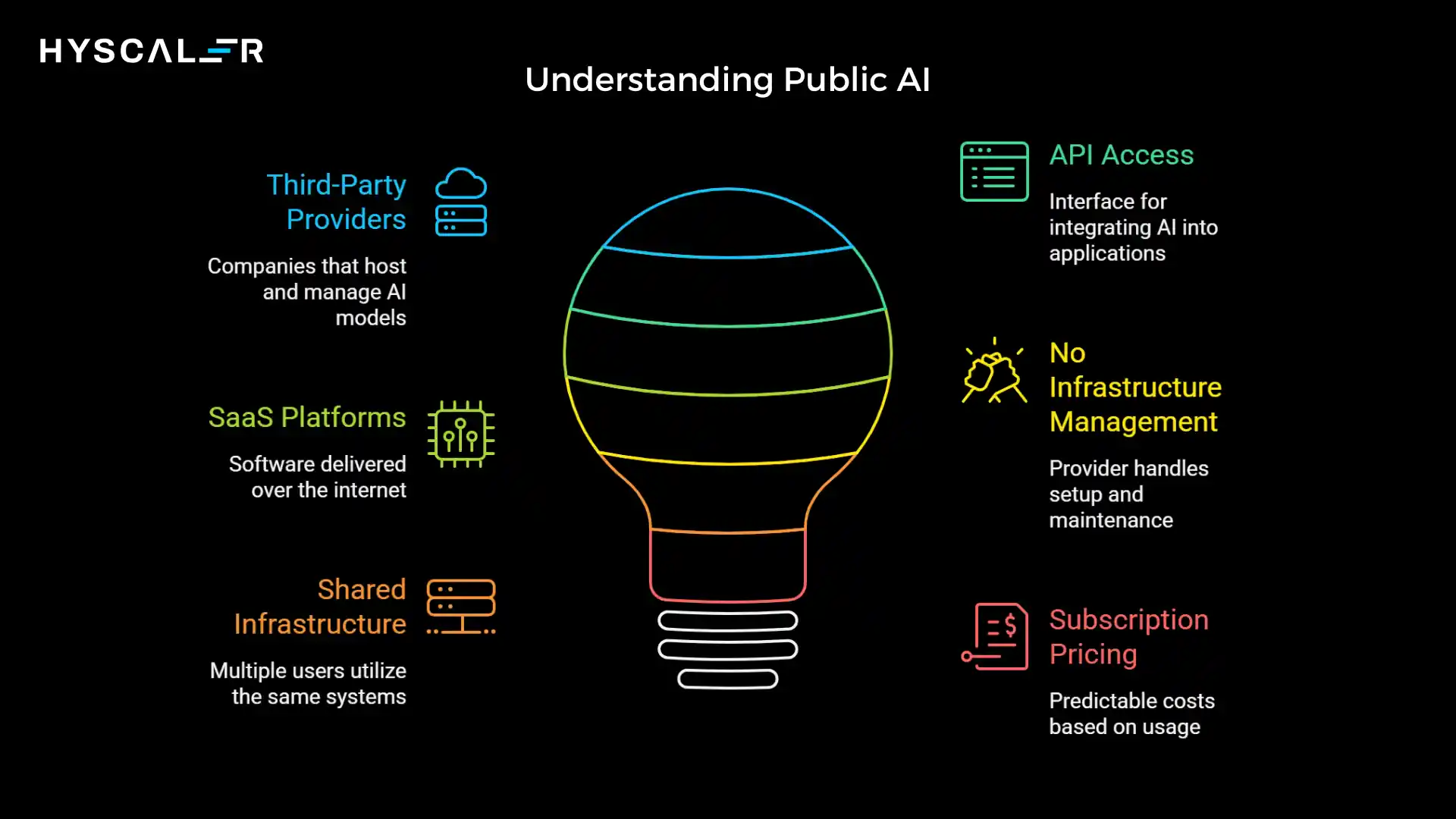

What is Public AI?

Public AI refers to AI models hosted and managed by third-party providers, accessible via APIs or Software-as-a-Service (SaaS) platforms.

These are the AI services you can sign up for and start using within minutes, without building any infrastructure yourself.

Leading Examples:

- OpenAI GPT – The models behind ChatGPT, which sparked the generative AI revolution

- Google Gemini – Google’s multimodal AI platform

- Anthropic Claude – Advanced conversational AI with strong reasoning capabilities

- Microsoft Copilot – AI assistance integrated across Microsoft’s ecosystem

Key Characteristics of Public AI:

Public AI solutions share several defining traits that make them attractive for rapid adoption.

They operate on shared infrastructure, meaning multiple organizations use the same underlying systems and model deployments.

Access is provided through APIs or web interfaces, eliminating the need for specialized infrastructure knowledge.

Perhaps most importantly, these solutions require no infrastructure management on your team’s part.

The provider handles model updates, scaling, maintenance, and security patches.

Pricing typically follows subscription or usage-based models, which keeps entry costs low and eliminates large upfront investments.

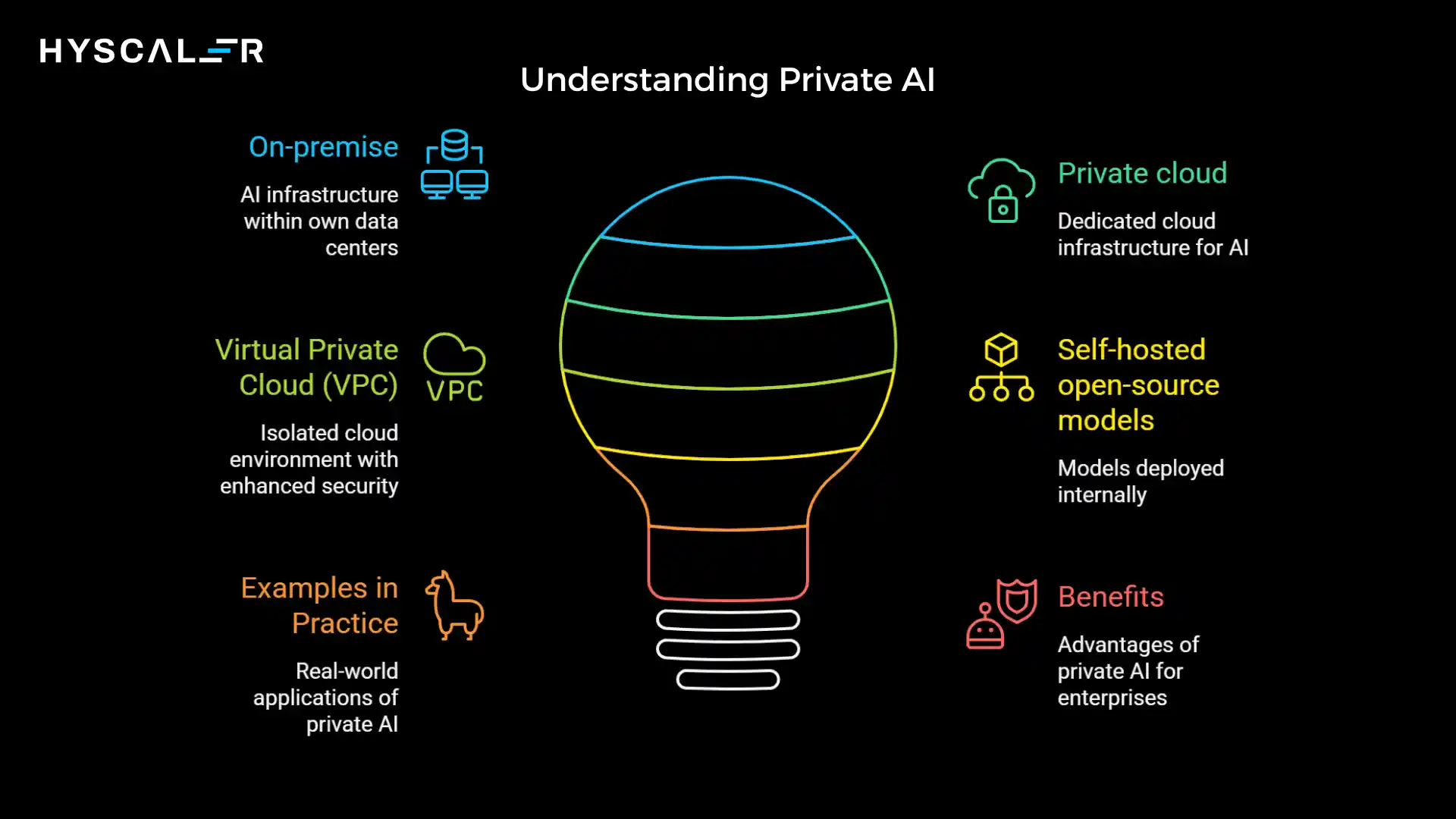

What is Private AI?

Private AI represents the other end of the spectrum: AI models deployed within an enterprise’s own infrastructure or in dedicated environments where the organization maintains full control over the deployment, data, and access patterns.

Common Deployment Models:

- On-premise – AI infrastructure within your own data centers

- Private cloud – Dedicated cloud infrastructure for AI workloads

- Virtual Private Cloud (VPC) – Isolated cloud environments with enhanced security

- Self-hosted open-source models – Models like Llama or Mistral deployed internally

Examples in Practice:

- Meta’s Llama models deployed on enterprise infrastructure

- Fine-tuned large language models trained on proprietary enterprise data

- Custom AI systems built specifically for unique business processes

- Domain-specific models for specialized industries

Private AI gives enterprises complete sovereignty over their AI stack, from the hardware it runs on to the data it processes and the ways it’s customized for specific business needs.

Public AI vs Private AI: Core Differences

Understanding the key differences between public AI and private AI is essential for enterprises looking to make informed AI strategy decisions.

| Factor | Public AI | Private AI |
| --- | --- | --- |
| Data Control | Limited; data passes through third-party systems | Full control; data never leaves your infrastructure |
| Security | Shared responsibility model | Enterprise-controlled end-to-end |
| Deployment Speed | Very fast, hours to days | Slower, weeks to months |
| Upfront Cost | Low, pay-as-you-go pricing | High, infrastructure investment required |
| Customization | Limited, pre-trained models with some fine-tuning | Extensive, full control over training and optimization |
| Compliance | Challenging, must trust the provider’s compliance | Easier, direct control over compliance measures |
| Scalability | Easy, the provider handles the infrastructure | Requires planning and capacity management |

These differences create a fundamental tension: public AI offers speed and convenience, while private AI offers control and customization.

The right choice between public and private AI depends on your specific use case, risk tolerance, and strategic priorities.

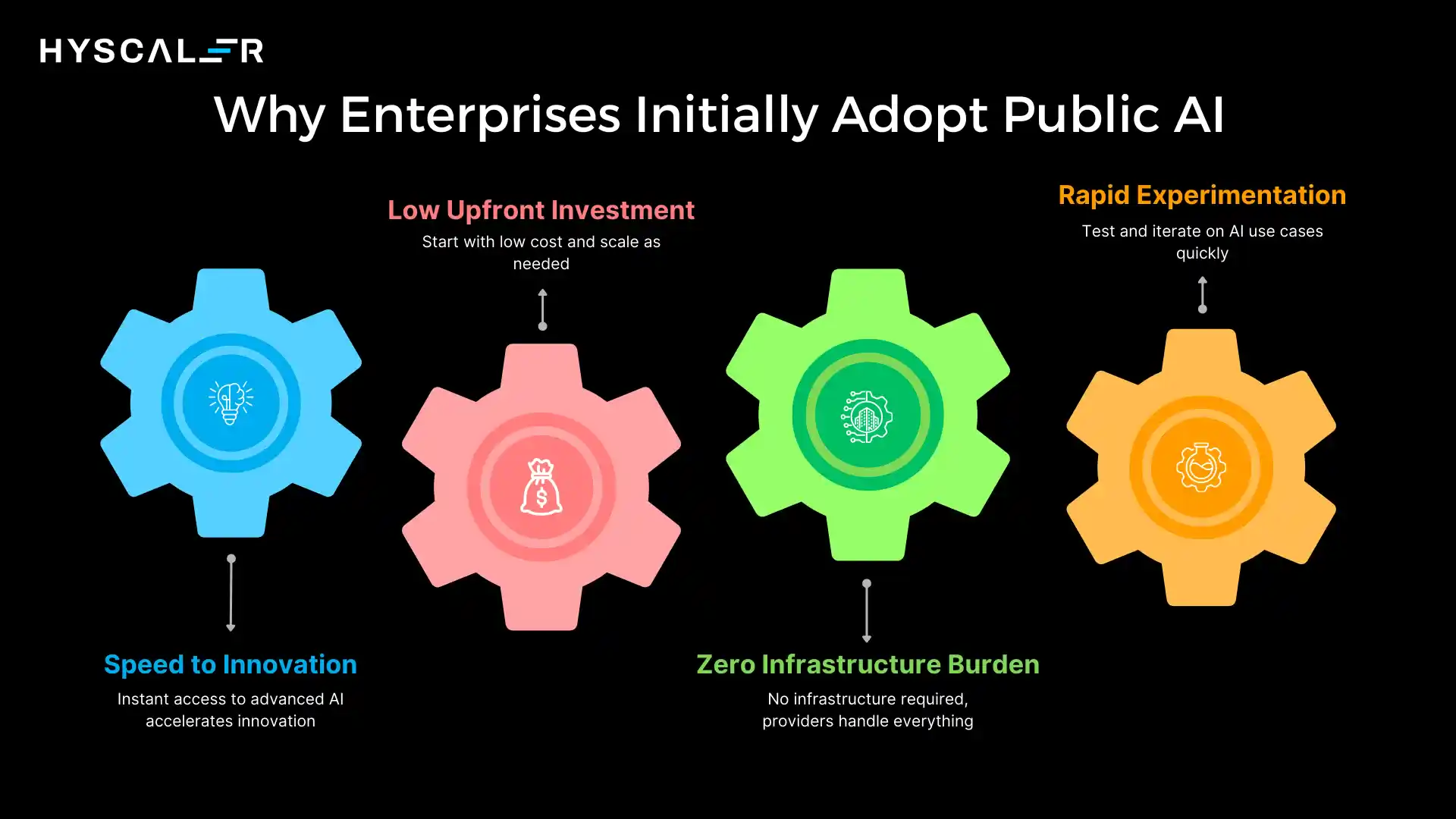

Why Enterprises Initially Adopt Public AI

Public AI solutions have dominated the initial wave of enterprise AI adoption, and for good reason.

Several compelling factors drive organizations toward this approach first.

Speed to Innovation

Public AI provides immediate access to state-of-the-art models.

There’s no need to build infrastructure, hire specialized ML engineers, or wait for lengthy procurement cycles.

Teams can start experimenting with AI capabilities on the same afternoon they decide to try them.

Low Upfront Investment

Unlike traditional enterprise software that requires significant capital expenditure, public AI operates on an operational expense model.

Organizations can start with small budgets and scale usage based on proven value, making it easier to secure initial approvals and demonstrate ROI quickly.

Zero Infrastructure Burden

With public AI, there’s no need to provision GPU clusters, set up data pipelines, or manage model deployment infrastructure.

The provider handles all the complex technical operations, allowing your team to focus entirely on building applications and solving business problems.

Rapid Experimentation

The low barrier to entry enables a “fail fast” approach to AI innovation.

Teams can test multiple use cases quickly, learn what works, and pivot without high sunk costs.

This experimentation phase has been crucial for enterprises learning how AI can add value to their operations.

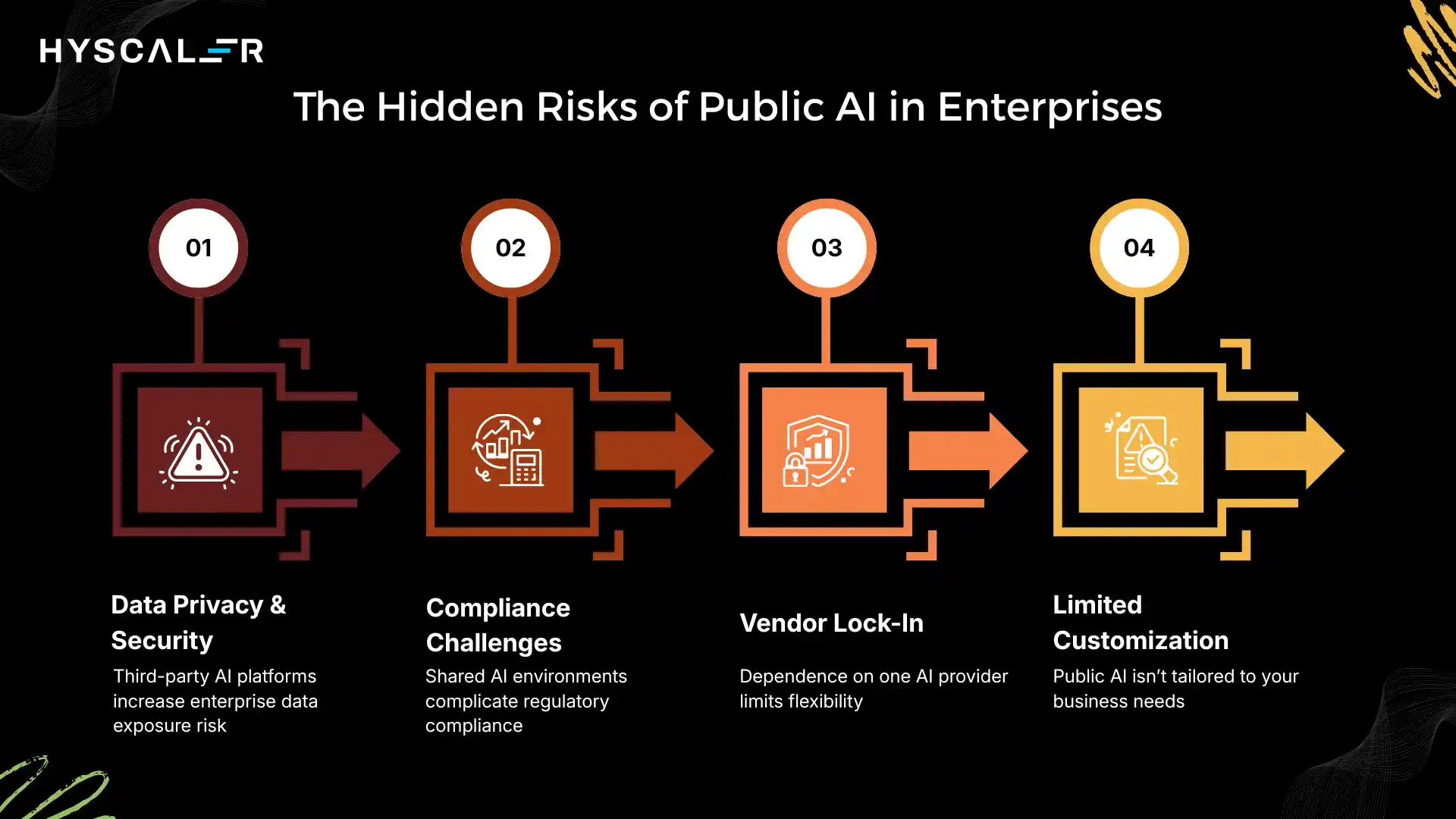

The Hidden Risks of Public AI in Enterprises

As enterprises scale their public AI usage beyond experimentation, several critical risks become apparent that weren’t obvious during small-scale pilots.

Data Privacy and Security Concerns

When you send data to a public AI service, it passes through systems you don’t control.

Even with strong contractual protections and data processing agreements, the fundamental architecture creates risk.

Sensitive customer information, proprietary business data, or confidential strategic plans flowing through third-party systems represent a potential exposure point.

Compliance Challenges

Industries subject to strict regulatory requirements, such as healthcare (HIPAA), finance (SOC 2, PCI-DSS), government (FedRAMP), and European operations (GDPR), face significant challenges with public AI.

Proving compliance when data processing happens in shared environments controlled by third parties adds complexity and risk to audit processes.

Vendor Lock-In

As teams build applications and workflows around a specific public AI provider’s API, switching costs increase dramatically.

Prompt engineering, fine-tuning, integration patterns, and user training all become tied to one vendor’s approach.

This dependency can limit negotiating power and flexibility as your AI strategy evolves.

Limited Customization

Public AI models are trained on broad, general datasets.

While they’re remarkably capable, they’re not optimized for your specific industry terminology, business processes, or proprietary knowledge.

This limitation becomes more significant as enterprises move from general productivity use cases to AI systems at the core of their business operations.

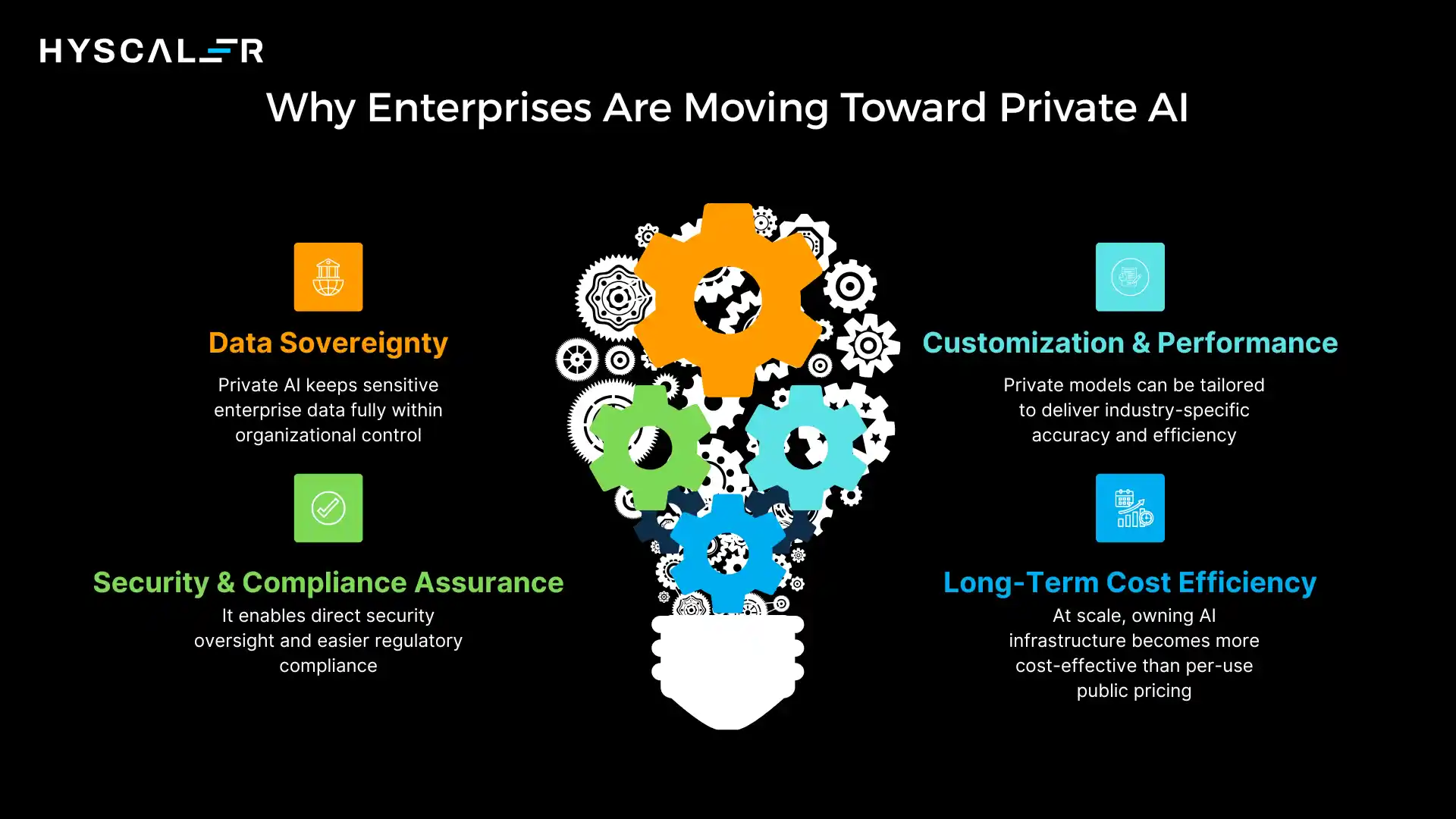

Why Enterprises Are Moving Toward Private AI

Despite the convenience of public AI, a significant shift is underway.

Enterprises are increasingly investing in private AI infrastructure, driven by several strategic decisions.

Data Sovereignty

In private AI deployments, sensitive data never leaves the enterprise perimeter.

This architectural guarantee provides certainty that’s impossible to achieve with third-party services, regardless of contractual terms.

For organizations handling truly sensitive information, such as financial data, healthcare records, and national security information, this control is non-negotiable.

Security and Compliance Assurance

Private AI enables enterprises to implement their own security controls, conduct internal audits, and demonstrate compliance through direct evidence rather than reliance on vendor certifications.

This control significantly simplifies regulatory compliance and reduces audit complexity.

Customization and Performance

With private AI, enterprises can fine-tune models on proprietary data, optimize for specific use cases, and achieve performance levels impossible with general-purpose public models.

A private model trained on years of customer service interactions will understand your products, policies, and customer needs in ways a general model cannot.

Long-Term Cost Efficiency

While private AI requires significant upfront investment, the economics become favorable at scale.

Organizations processing millions of requests monthly find that owning their AI infrastructure costs substantially less than paying per-token pricing to public providers.

The breakeven point varies, but for AI-first companies, it arrives quickly.

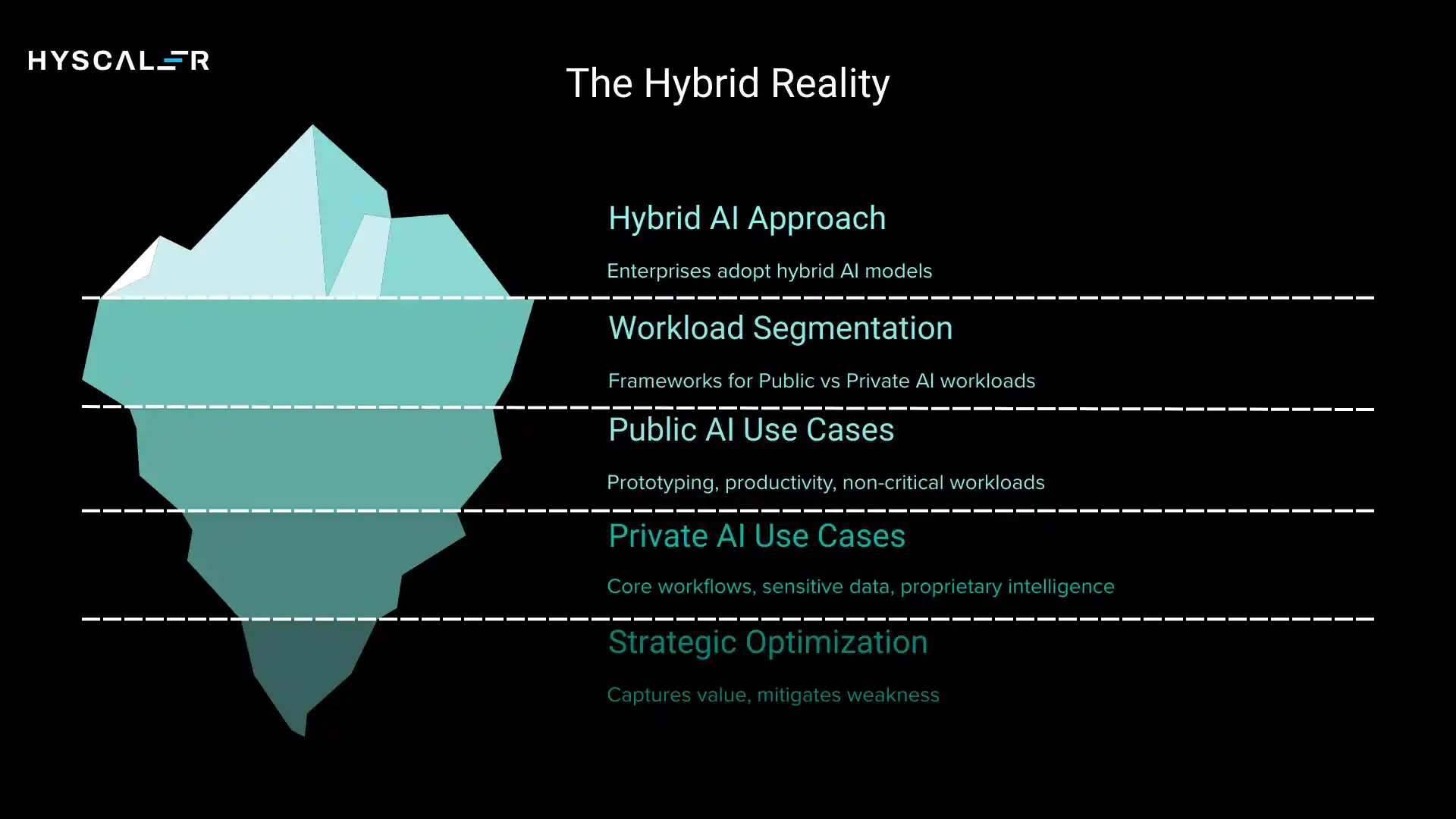

The Hybrid Reality: How Most Enterprises Actually Operate

The public AI vs. private AI debate presents a false dichotomy.

In practice, sophisticated enterprises are adopting hybrid approaches that leverage the strengths of both models.

The Emerging Pattern

Leading organizations are developing clear frameworks for which workloads run on public AI and which require private infrastructure.

This segmentation is driven by data sensitivity, business criticality, and cost considerations.

Public AI for:

- Prototyping and experimentation – Testing new AI use cases before committing to infrastructure

- General productivity tools – Writing assistance, coding help, summarization for non-sensitive content

- Non-critical workloads – Use cases where occasional errors or downtime are acceptable

- Variable demand – Workloads with unpredictable usage patterns where elastic scaling is valuable

Private AI for:

- Core business workflows – AI systems that directly impact revenue or competitive advantage

- Sensitive customer data – Processing personal information, financial records, or health data

- Proprietary intelligence – Models trained on competitive insights or trade secrets

- High-volume production systems – Workloads where per-request costs make private economics favorable

This hybrid model isn’t a compromise; it’s a strategic optimization that captures value from both approaches while mitigating their respective weaknesses.
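One way to make this segmentation concrete is a small placement rule. The sketch below is illustrative only: the `Workload` fields, the `place` function, and the `HIGH_VOLUME_THRESHOLD` value are assumptions made for this example, not an established standard or a recommended cutoff.

```python
from dataclasses import dataclass
from enum import Enum


class Placement(Enum):
    PUBLIC = "public"
    PRIVATE = "private"


@dataclass(frozen=True)
class Workload:
    name: str
    handles_sensitive_data: bool  # personal, financial, or health data; trade secrets
    business_critical: bool       # directly impacts revenue or competitive advantage
    monthly_requests: int


# Hypothetical cutoff above which per-request pricing is assumed to favor private hosting.
HIGH_VOLUME_THRESHOLD = 1_000_000


def place(workload: Workload) -> Placement:
    """Return PRIVATE if any of the private-AI criteria listed above applies, else PUBLIC."""
    if workload.handles_sensitive_data or workload.business_critical:
        return Placement.PRIVATE
    if workload.monthly_requests >= HIGH_VOLUME_THRESHOLD:
        return Placement.PRIVATE
    return Placement.PUBLIC


workloads = [
    Workload("marketing-drafts", False, False, 20_000),
    Workload("fraud-detection", True, True, 5_000_000),
    Workload("meeting-summaries", False, False, 50_000),
]
placements = {w.name: place(w).value for w in workloads}
print(placements)
```

In a real organization the inputs would come from a data classification policy and usage forecasts rather than hand-set flags, and the threshold would be derived from your own cost model.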

Real Enterprise Use Cases

Understanding how specific use cases map to public AI vs private AI helps clarify the decision framework.

Public AI Use Cases

Marketing Content Generation: Marketing teams use public AI to generate social media posts, email campaigns, and blog drafts. The content isn’t particularly sensitive, volume is moderate, and the creativity of general models is valuable. Risk tolerance is higher since errors can be caught in human review.

Coding Assistants: Developers use public AI tools like GitHub Copilot for code completion and problem-solving. While code is intellectual property, the convenience and quality of public models often justify the tradeoff, particularly for standard programming patterns.

Internal Productivity: Meeting summarization, email drafting, and general research tasks are handled effectively by public AI. These workloads benefit from the broad knowledge of general models and don’t typically involve sensitive data.

Private AI Use Cases

Financial Risk Analysis: Banks and financial institutions deploy private AI for credit risk assessment, fraud detection, and portfolio analysis. These systems process highly sensitive financial data and directly impact business outcomes, making private deployment essential.

Healthcare Diagnostics: Medical AI systems analyzing patient records, imaging data, or clinical notes must comply with HIPAA and maintain absolute data privacy. Private deployment on healthcare organization infrastructure is the only viable approach.

Customer Intelligence Systems: AI systems that analyze customer behavior, predict churn, or personalize experiences process data that represents a core competitive advantage. Private deployment ensures this intelligence remains proprietary.

Legal Document Analysis: Law firms and corporate legal departments deploy private AI for contract review, legal research, and due diligence. Attorney-client privilege and confidentiality requirements make public AI unacceptable for these workflows.

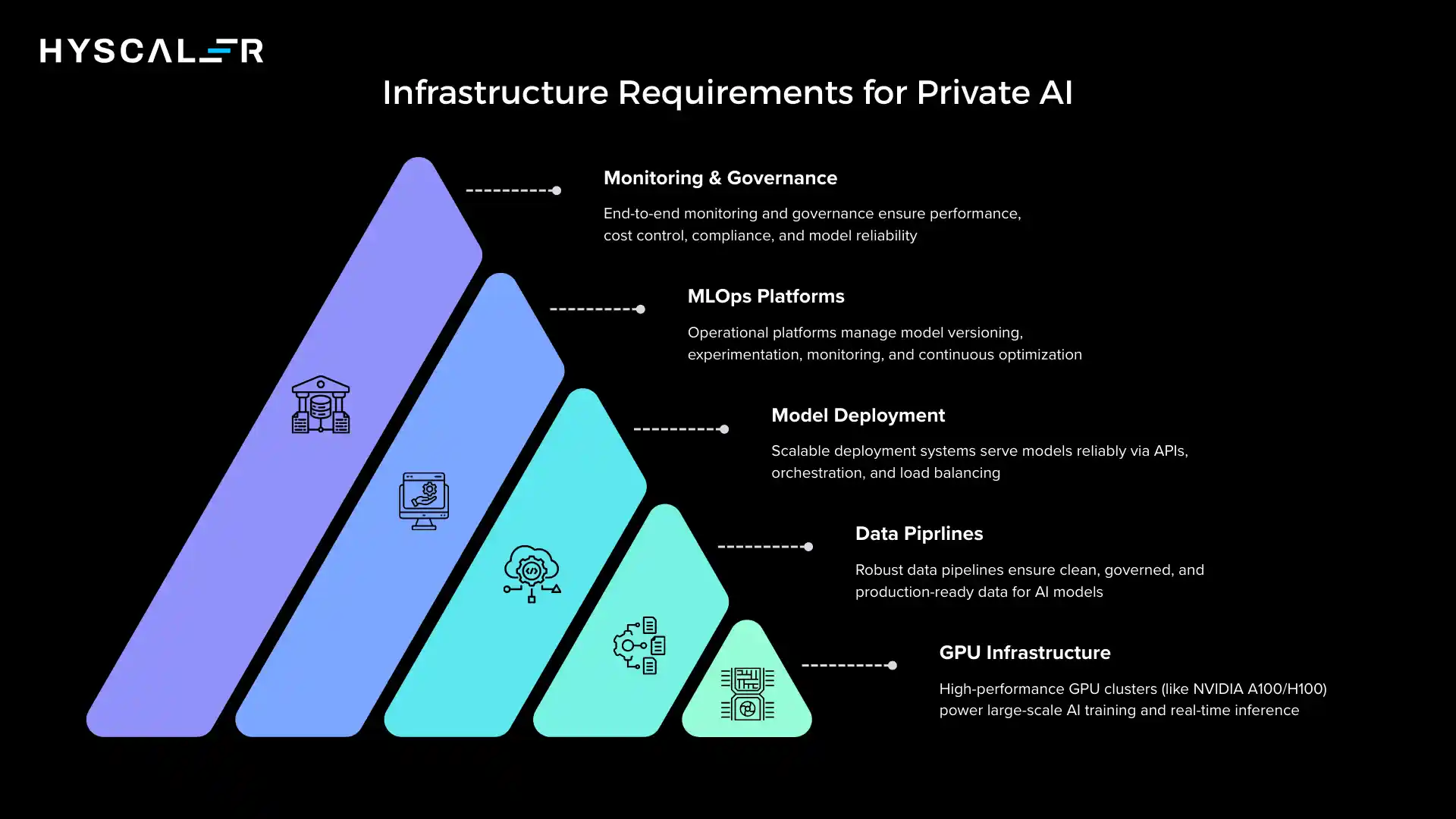

Infrastructure Requirements for Private AI

Moving to private AI isn’t simply a matter of downloading a model.

It requires significant infrastructure investment and operational capability.

Essential Components:

GPU Infrastructure: High-performance GPU clusters are necessary for both training and inference. For large language models, this might mean NVIDIA A100 or H100 systems, representing significant capital investment or cloud infrastructure costs.

Data Pipelines: Robust data engineering infrastructure to prepare training data, manage data quality, and feed inference systems. This includes data warehouses, ETL systems, and data governance tools.

Model Deployment Systems: Infrastructure for serving models in production, including API gateways, load balancers, and container orchestration systems. Options include tools like NVIDIA Triton Inference Server or custom deployment stacks.

MLOps and LLMOps Platforms: Operational systems for model versioning, monitoring, A/B testing, and continuous improvement. Options include platforms like MLflow, Weights & Biases, or custom-built solutions.

Monitoring and Governance: Systems to track model performance, detect drift, manage costs, and enforce governance policies. This includes logging, metrics, alerting, and compliance reporting tools.

Building and maintaining this infrastructure requires specialized expertise in ML engineering, data engineering, and platform operations, a significant organizational investment beyond just technology.
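To give a feel for what the monitoring component does, here is a minimal sketch of a rolling monitor that flags error-rate and latency breaches over the most recent requests. The `RollingMonitor` class, its thresholds, and the sample values are all hypothetical; a production setup would more likely rely on a dedicated metrics and alerting stack.

```python
from collections import deque
from statistics import mean


class RollingMonitor:
    """Track the most recent request outcomes and report threshold breaches."""

    def __init__(self, window: int, max_error_rate: float, max_mean_latency_ms: float):
        self.latencies = deque(maxlen=window)  # oldest samples drop off automatically
        self.errors = deque(maxlen=window)
        self.max_error_rate = max_error_rate
        self.max_mean_latency_ms = max_mean_latency_ms

    def record(self, latency_ms: float, is_error: bool) -> None:
        self.latencies.append(latency_ms)
        self.errors.append(1 if is_error else 0)

    def alerts(self) -> list[str]:
        """Names of the metrics whose rolling average exceeds its limit."""
        if not self.latencies:
            return []
        found = []
        if mean(self.errors) > self.max_error_rate:
            found.append("error_rate")
        if mean(self.latencies) > self.max_mean_latency_ms:
            found.append("latency")
        return found


# Hypothetical thresholds and samples, chosen only to trigger both alerts.
monitor = RollingMonitor(window=4, max_error_rate=0.25, max_mean_latency_ms=500)
for latency_ms, is_error in [(100, False), (120, False), (900, True), (1100, True)]:
    monitor.record(latency_ms, is_error)
print(monitor.alerts())
```

The same rolling-window idea extends to cost per request or output-quality scores, which is where drift tends to show up first.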

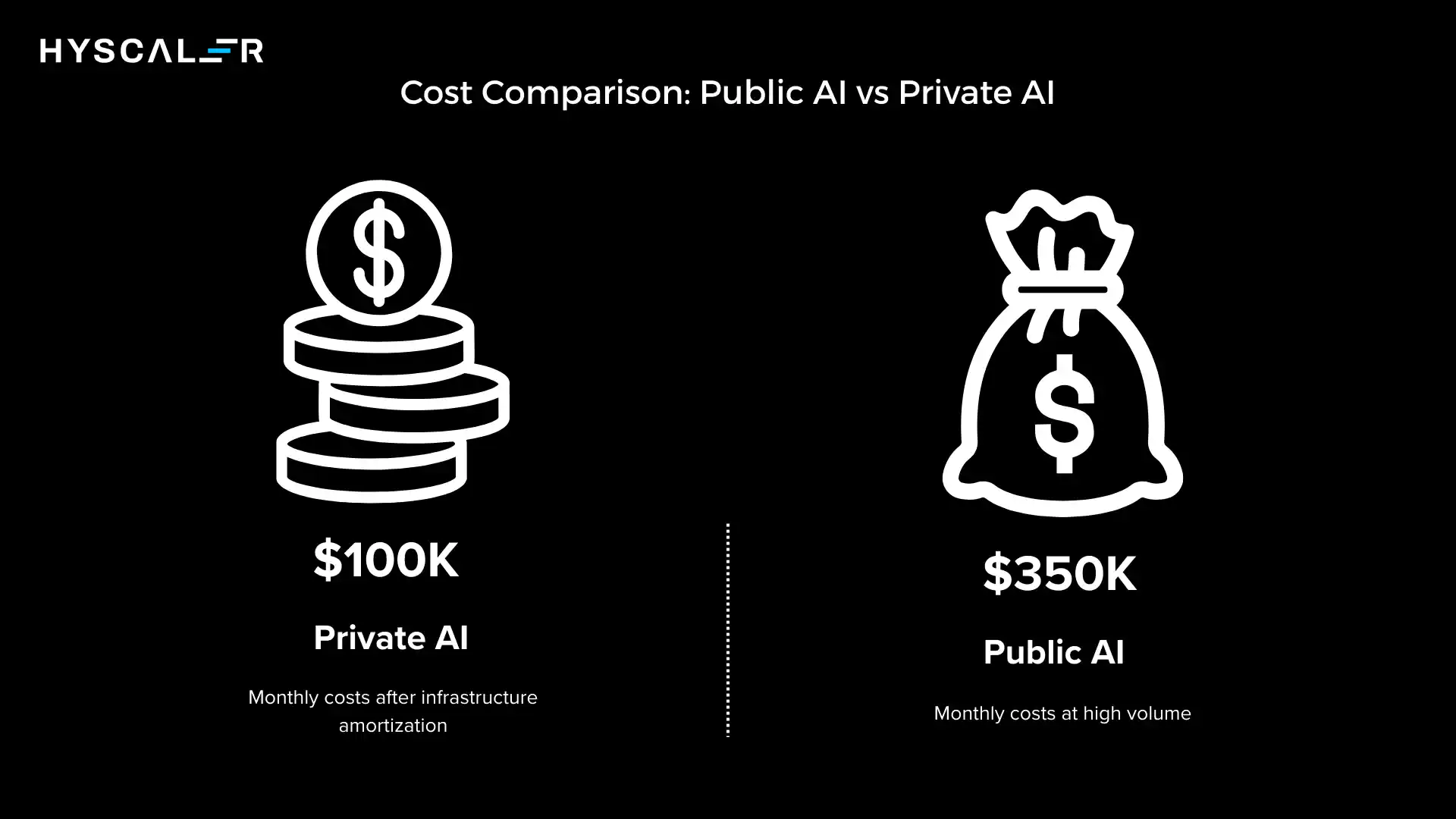

Cost Comparison: Public AI vs Private AI

Understanding the true cost of public AI versus private AI requires looking beyond simple per-request pricing.

Public AI Cost Structure

Advantages:

- Zero infrastructure investment

- Pay-as-you-go flexibility

- Costs scale linearly with usage

- No maintenance or operational overhead

Hidden Costs:

- Expensive at high volume (often $0.002 to $0.10 per 1K tokens)

- Costs can spike unpredictably with increased usage

- Limited ability to optimize costs through technical improvements

Example: An enterprise processing 100M tokens daily might spend $200K-500K monthly on public AI services.
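If you want to run this kind of estimate for your own volumes, the arithmetic is simple enough to script. The sketch below assumes a flat per-1K-token price and a 30-day month; the function name `monthly_token_cost` is made up for this example, and real provider pricing usually differs between input and output tokens and between model tiers.

```python
def monthly_token_cost(tokens_per_day: float, price_per_1k_tokens: float, days: int = 30) -> float:
    """Monthly spend in dollars for a daily token volume at a flat per-1K-token price."""
    return tokens_per_day / 1_000 * price_per_1k_tokens * days


TOKENS_PER_DAY = 100_000_000        # daily volume from the example above
LOW_RATE, HIGH_RATE = 0.002, 0.10   # per-1K-token price range quoted above

low = monthly_token_cost(TOKENS_PER_DAY, LOW_RATE)
high = monthly_token_cost(TOKENS_PER_DAY, HIGH_RATE)
print(f"${low:,.0f} to ${high:,.0f} per month")
```

Across the quoted price range, token charges alone span roughly $6K to $300K per month at this volume, so the bill depends heavily on the model tier you choose. Totals above that range imply higher per-token prices or costs beyond token charges.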

Private AI Cost Structure

Disadvantages:

- High initial capital expenditure ($500K-5M for infrastructure)

- Ongoing operational costs (personnel, maintenance, power)

- Fixed costs regardless of utilization

Advantages:

- Dramatically lower marginal costs at scale

- Costs decrease as usage increases

- Ability to optimize infrastructure for specific workloads

Example: The same 100M tokens daily might cost $50K-150K monthly once the initial infrastructure investment is amortized.

The Breakeven Analysis

For most enterprises, the breakeven point arrives between 10M and 50M tokens processed monthly, depending on specific requirements and infrastructure choices.

Organizations planning significant AI integration should model their expected usage carefully.
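A simple way to start that modeling is a linear breakeven calculation: public cost grows with volume, while private cost is a fixed monthly amount plus a smaller marginal cost per token. The sketch below assumes exactly that linear model. The function name and all three input values are placeholders, not figures from this article, and the result shifts dramatically as they change.

```python
def breakeven_tokens_per_month(
    private_fixed_monthly_cost: float,
    public_price_per_1k: float,
    private_marginal_price_per_1k: float,
) -> float:
    """Monthly token volume V at which public and private costs are equal.

    Public cost:  public_price_per_1k * V / 1000
    Private cost: private_fixed_monthly_cost + private_marginal_price_per_1k * V / 1000
    """
    saving_per_1k = public_price_per_1k - private_marginal_price_per_1k
    if saving_per_1k <= 0:
        raise ValueError("private marginal price must be below the public price")
    return private_fixed_monthly_cost / saving_per_1k * 1_000


# Placeholder inputs: replace them with your own quotes and utilization estimates.
volume = breakeven_tokens_per_month(
    private_fixed_monthly_cost=100_000.0,  # amortized hardware plus staff (hypothetical)
    public_price_per_1k=0.05,              # hypothetical
    private_marginal_price_per_1k=0.01,    # hypothetical
)
print(f"Breakeven at about {volume / 1e9:.2f}B tokens per month")
```

Below the breakeven volume the public option is cheaper under this model; above it, the private option is. Non-linear effects such as volume discounts or idle GPU capacity are deliberately left out.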

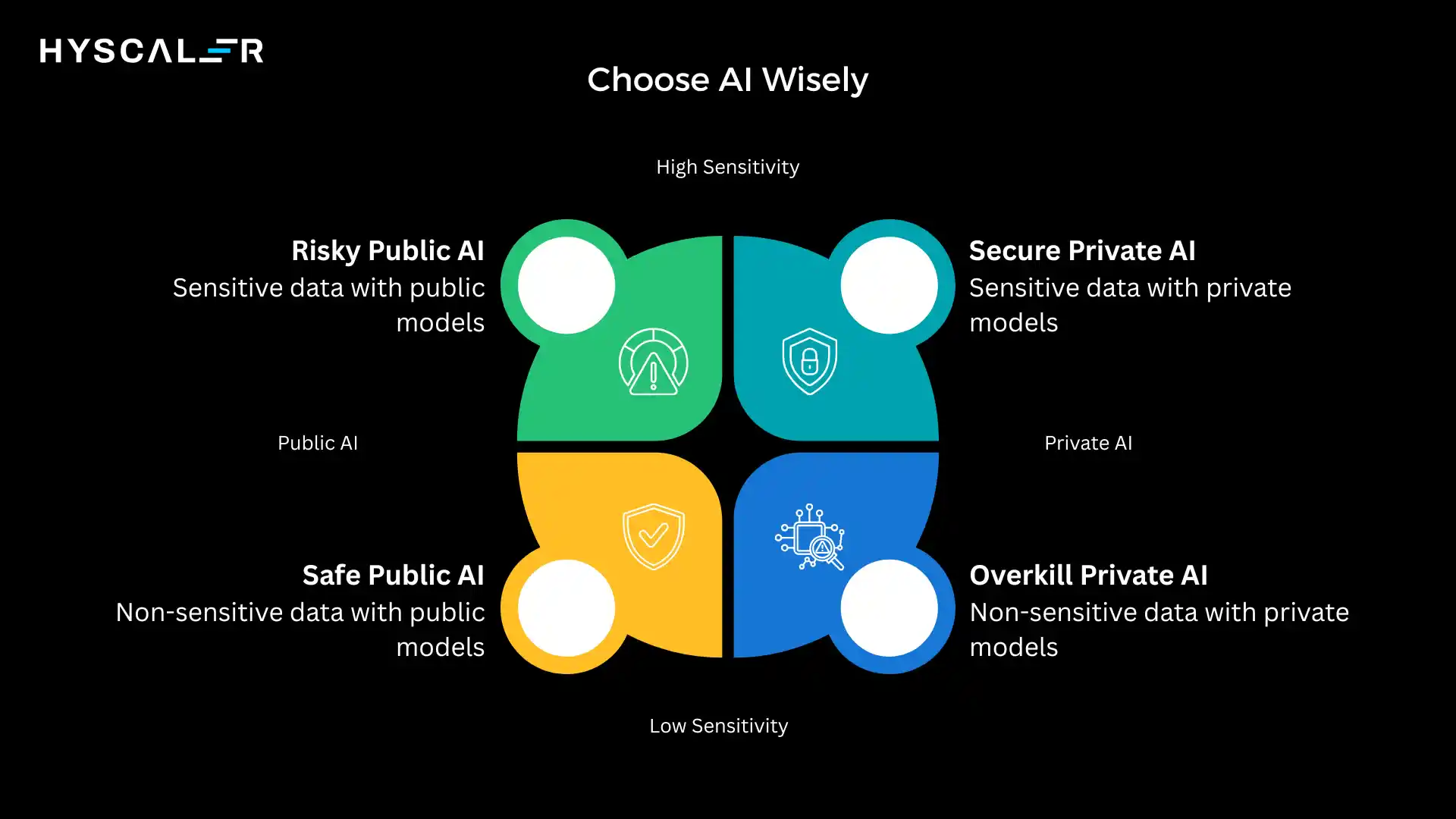

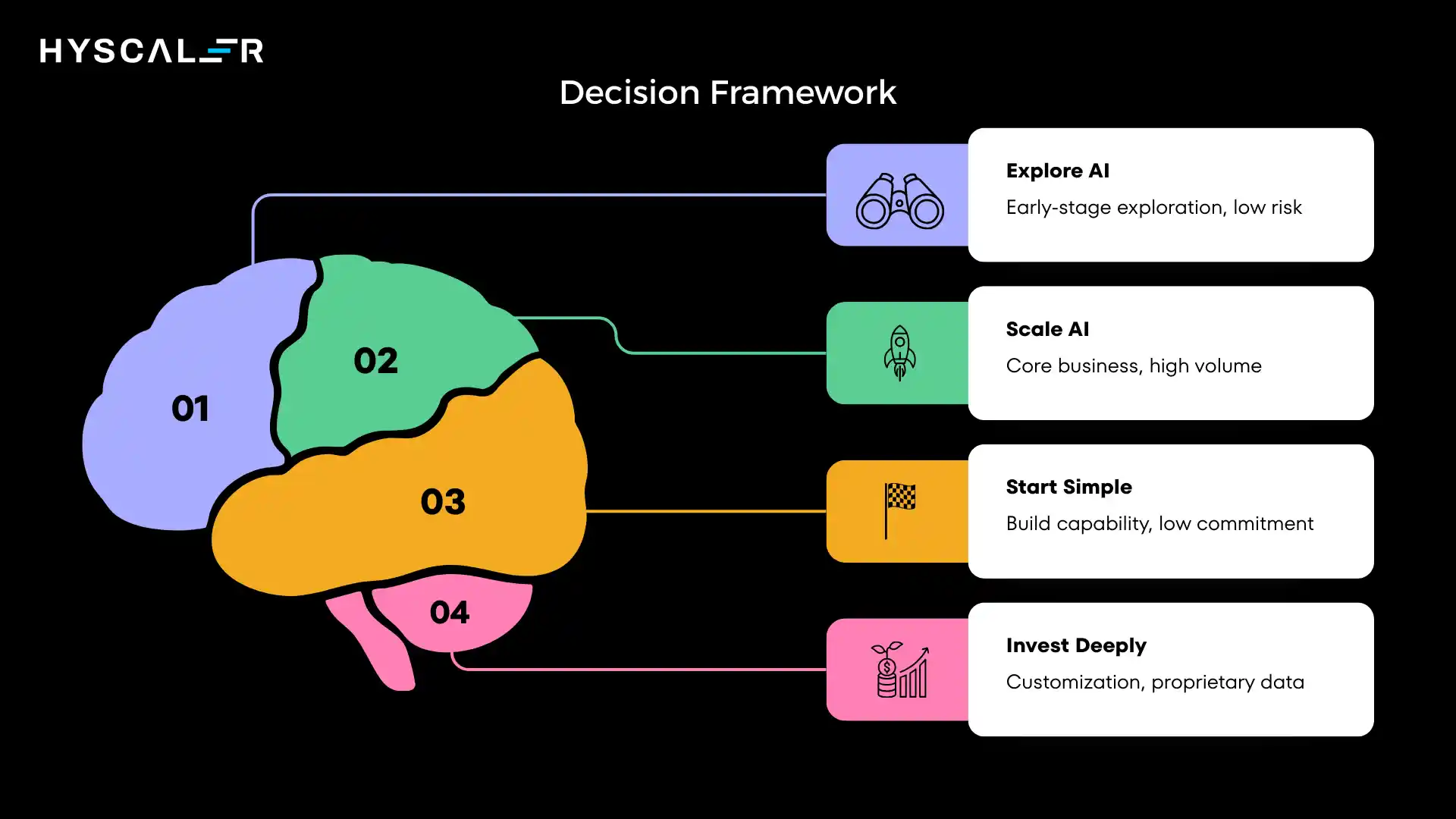

Decision Framework: When to Choose Public AI vs Private AI

Strategic decision-making requires a framework that considers multiple dimensions beyond just technology.

Choose Public AI When:

You’re in early-stage exploration: If your organization is still learning what AI can do and which use cases create value, public AI’s low barrier to entry enables rapid experimentation without major commitments.

AI maturity is limited: Organizations without ML engineering expertise, GPU infrastructure experience, or data science teams should start with public AI to build capability before considering private deployments.

Use cases are low-risk: For applications where data sensitivity is low, errors are tolerable, and business criticality is moderate, public AI’s convenience often outweighs control considerations.

Volume is unpredictable: When usage patterns are highly variable or seasonal, public AI’s elastic scaling prevents over-provisioning private infrastructure.

Choose Private AI When:

Sensitive data is involved: Any use case processing regulated data (PII, PHI, financial records), competitive intelligence, or information subject to strict privacy requirements should default to private deployment.

AI is core to your business: When AI capabilities directly drive revenue, create competitive differentiation, or enable your core value proposition, the control and customization of private AI justifies the investment.

You need full control and customization: Use cases requiring fine-tuning on proprietary data, optimization for specific domains, or integration with legacy systems often require private infrastructure.

You’re operating at scale: High-volume production workloads processing millions of requests daily achieve better economics with private infrastructure.

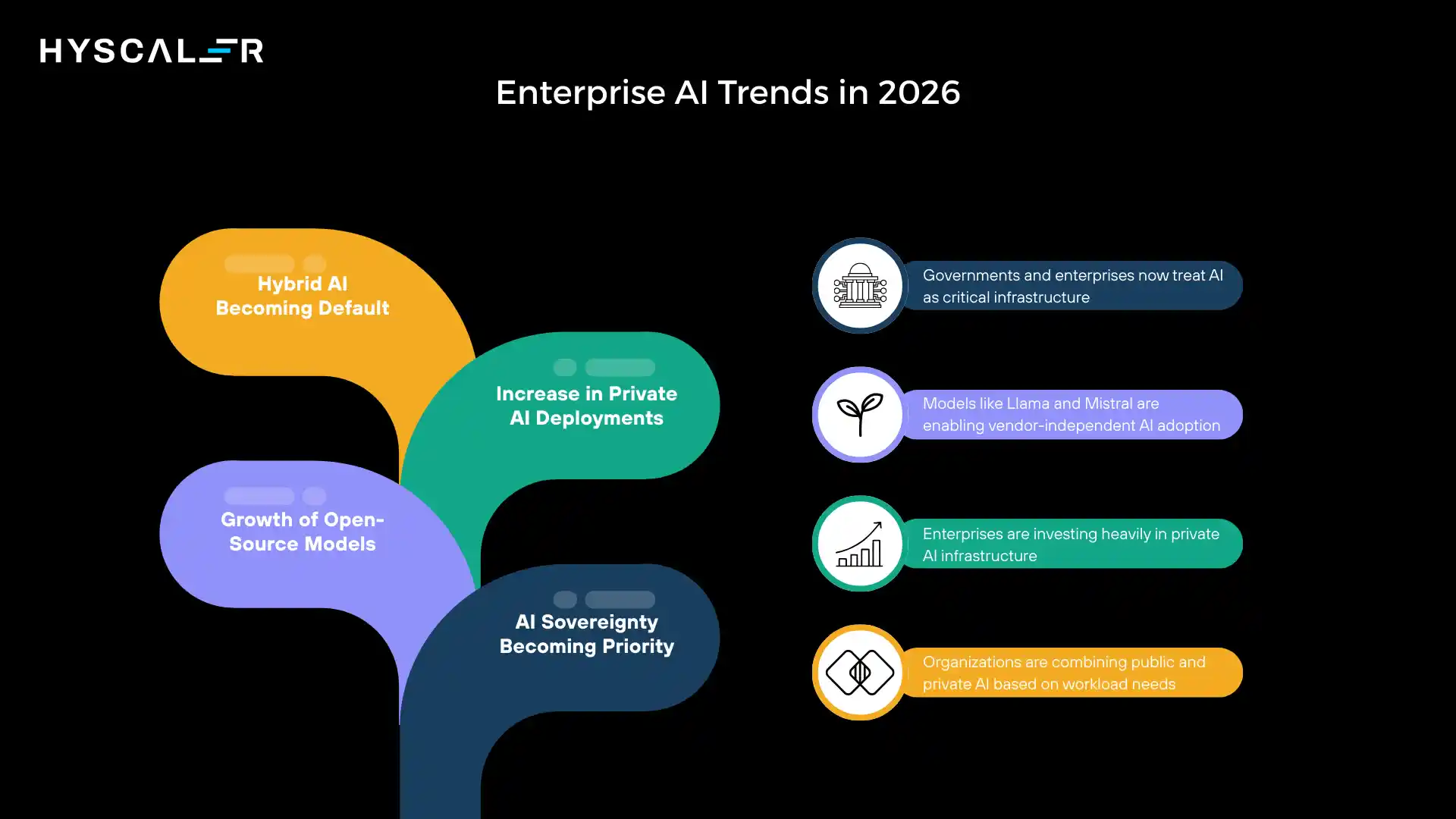

Enterprise AI Trends in 2026: The Rise of Sovereign AI

The public AI vs private AI question is only one part of the picture; several other trends are reshaping enterprise AI strategies.

AI Sovereignty Becoming Priority

Governments and enterprises increasingly view AI capability as strategic infrastructure, similar to telecommunications or energy.

This shift drives investment in domestic AI development and private deployment to maintain sovereignty over critical systems.

Growth of Open-Source Models

Models like Meta’s Llama, Mistral, and others have reached performance levels competitive with proprietary alternatives.

This open-source movement enables private AI deployment without dependence on specific vendors, accelerating adoption.

Increase in Private AI Deployments

Major enterprises across industries are announcing significant investments in private AI infrastructure.

Cloud providers are responding with specialized offerings for enterprises wanting private deployments with cloud economics.

Hybrid AI Becoming Default

The debate is shifting from “public or private” to “which workloads where.”

Enterprises are developing sophisticated frameworks for workload placement, recognizing that the optimal approach combines both models strategically.

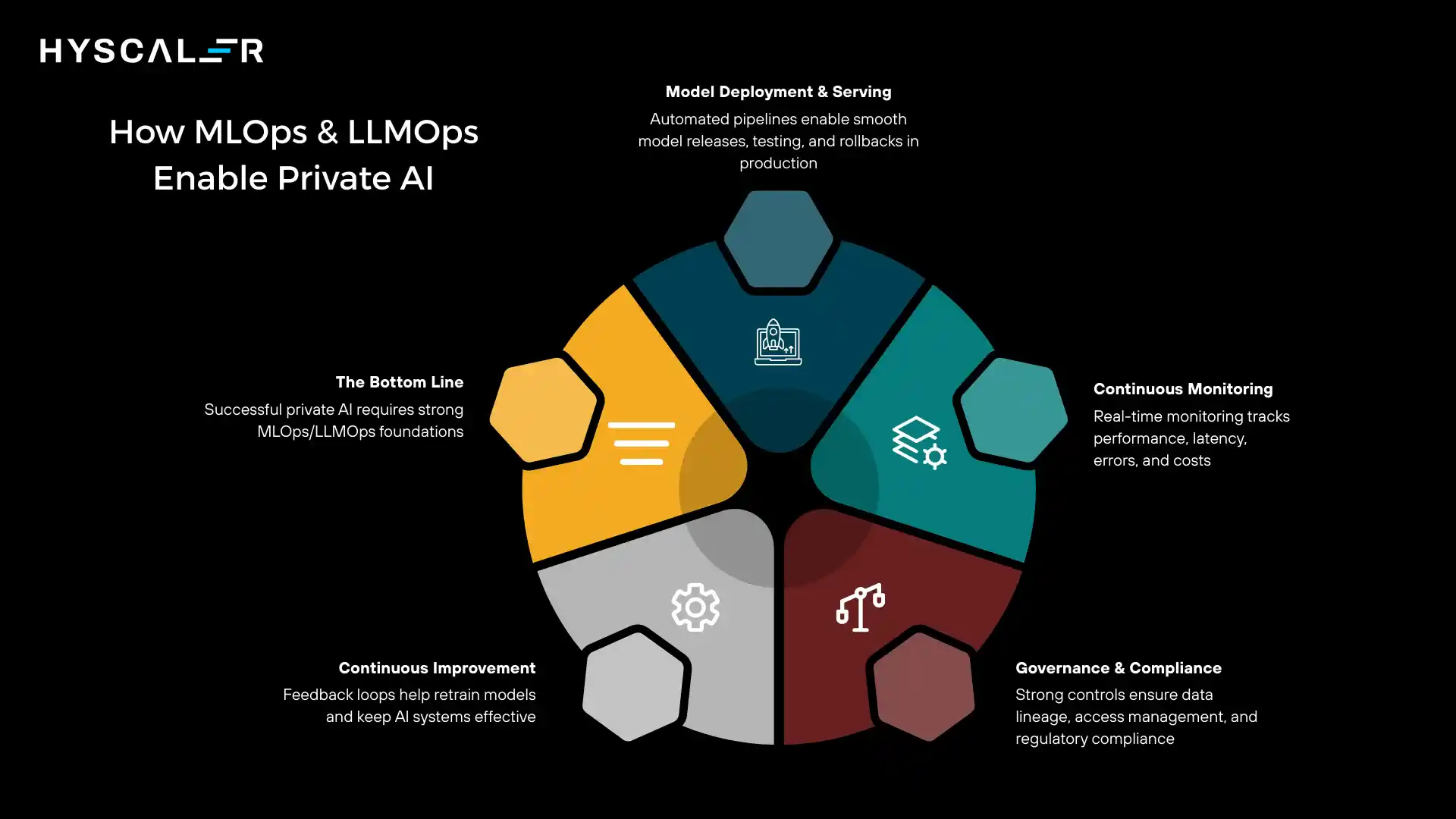

How MLOps and LLMOps Enable Private AI

Private AI’s success depends on operational maturity.

Without strong MLOps and LLMOps practices, private deployments become unsustainable.

Critical Capabilities:

Model Deployment and Serving: Automated pipelines for deploying models to production, managing versions, and routing traffic. This includes canary deployments, A/B testing, and rollback capabilities.

Continuous Monitoring: Real-time tracking of model performance, latency, error rates, and cost. Detecting degradation before it impacts users requires sophisticated monitoring infrastructure.

Governance and Compliance: Tracking data lineage, managing access controls, documenting model decisions, and maintaining audit trails. Particularly critical in regulated industries.

Continuous Improvement: Systematic processes for collecting feedback, retraining models, and deploying improvements. Without these feedback loops, private models become stale and less effective over time.

The Bottom Line: Private AI is impossible without strong operational foundations. Organizations must invest in MLOps/LLMOps capabilities before scaling private deployments, or risk creating technical debt that undermines the entire initiative.
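As an illustration of the deployment capability described above, here is a minimal sketch of canary routing with a rollback check. It assumes a single stable version and a single canary; `pick_version`, `should_roll_back`, the 10% traffic share, and the 5% error limit are all hypothetical names and values chosen for the example.

```python
import random


def pick_version(canary_fraction: float, rng: random.Random) -> str:
    """Route one request: 'canary' with probability canary_fraction, else 'stable'."""
    if not 0.0 <= canary_fraction <= 1.0:
        raise ValueError("canary_fraction must be between 0 and 1")
    return "canary" if rng.random() < canary_fraction else "stable"


def should_roll_back(canary_errors: int, canary_requests: int, max_error_rate: float) -> bool:
    """Roll back when the canary's observed error rate exceeds the allowed rate."""
    if canary_requests == 0:
        return False  # no evidence yet, keep the canary running
    return canary_errors / canary_requests > max_error_rate


rng = random.Random(42)  # seeded only so the sketch is repeatable
routed = [pick_version(0.1, rng) for _ in range(10_000)]
canary_share = routed.count("canary") / len(routed)
print(f"canary share: {canary_share:.3f}")
print("roll back:", should_roll_back(canary_errors=6, canary_requests=100, max_error_rate=0.05))
```

In practice the traffic split usually lives in the load balancer or service mesh rather than in application code, and the rollback decision would draw on the monitoring data discussed above.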

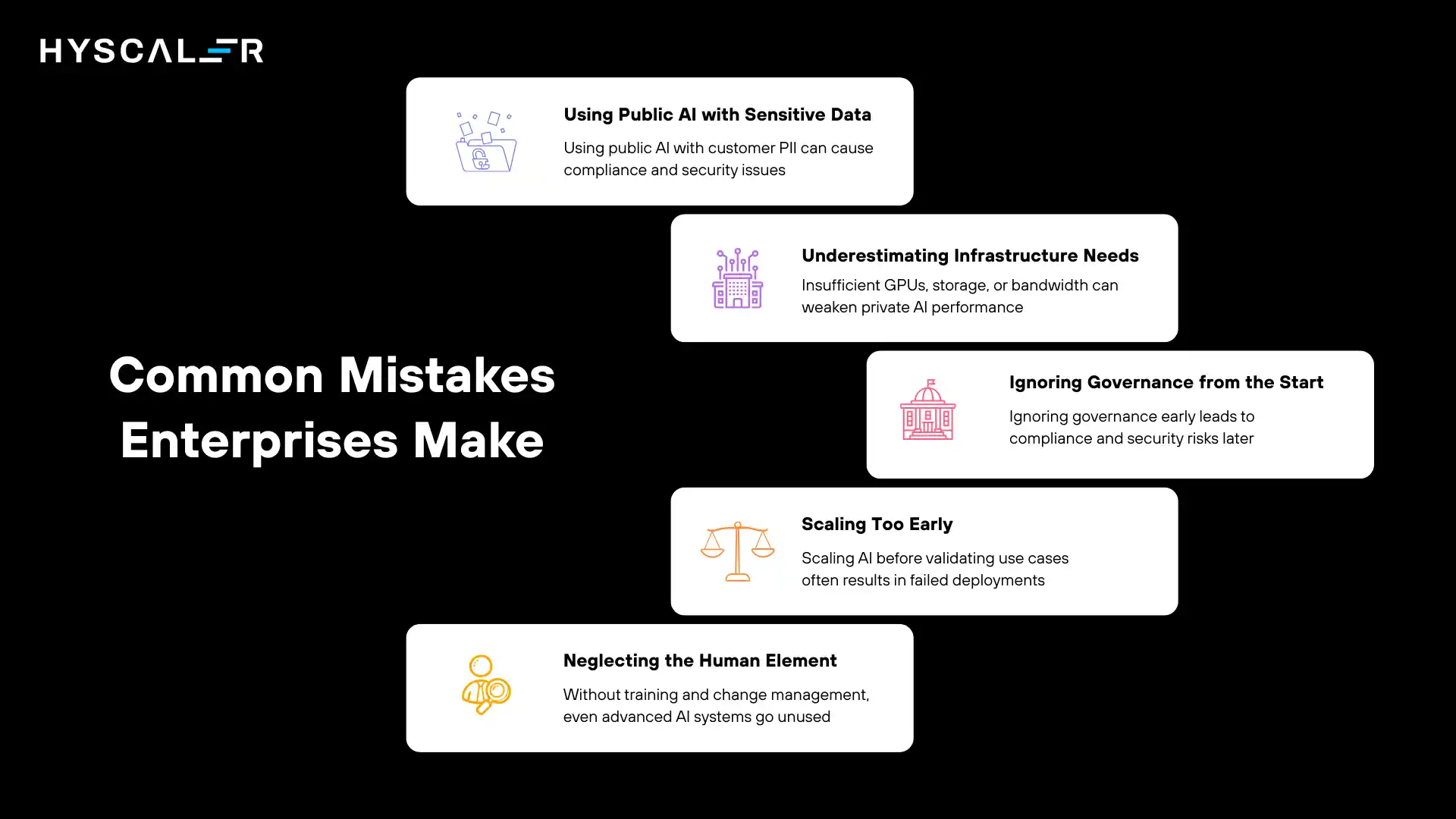

Common Mistakes Enterprises Make

Learning from others’ mistakes can help you avoid costly errors in your AI strategy.

Using Public AI with Sensitive Data

The most common mistake is underestimating data sensitivity.

Teams often use public AI for “just this one document” that contains customer PII, leading to compliance violations and security incidents.

Underestimating Infrastructure Needs

Organizations sometimes attempt private AI deployment without adequate GPU resources, network bandwidth, or storage capacity, resulting in poor performance that undermines confidence in the approach.

Ignoring Governance from the Start

Treating AI as a pure technology project rather than a governance challenge leads to uncontrolled proliferation, compliance gaps, and security vulnerabilities that are expensive to remediate later.

Scaling Too Early

Moving from pilot to production without validating the use case, refining the model, or building operational processes leads to failed deployments and wasted investment.

Neglecting the Human Element

Focusing entirely on technology while ignoring change management, user training, and workflow redesign results in sophisticated AI systems that nobody uses effectively.

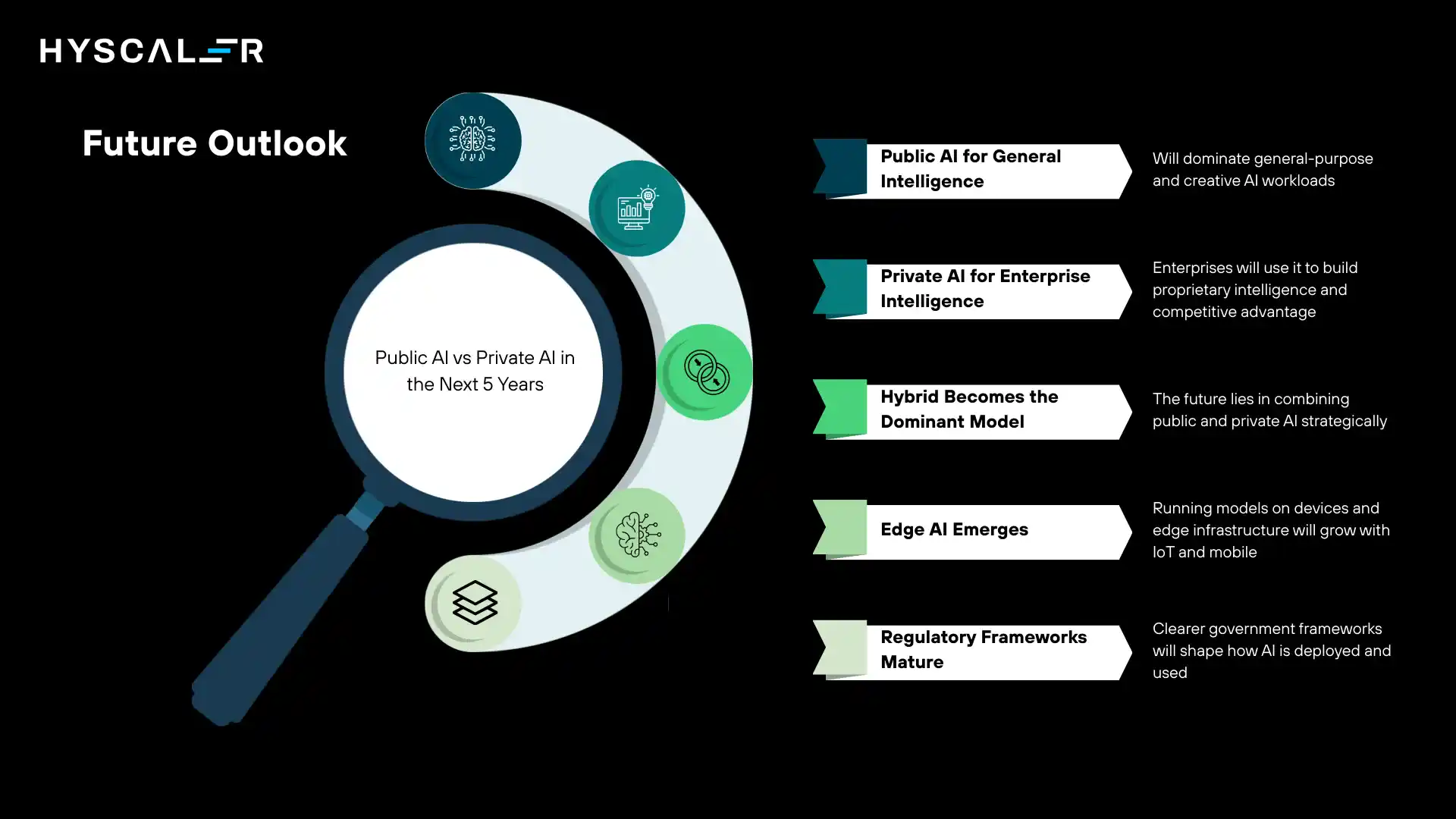

Future Outlook: Public AI vs Private AI in the Next 5 Years

Looking ahead, several trends will shape the evolution of enterprise AI architectures.

Public AI for General Intelligence

Public AI services will keep advancing as the default choice for general-purpose intelligence, creative tasks, and broad knowledge work.

These services will become more capable, more affordable, and easier to use, which makes them well suited to commodity AI workloads.

Private AI for Enterprise Intelligence

Enterprises will increasingly view private AI as the foundation for proprietary intelligence that creates competitive advantage.

The models will be smaller, more efficient, and specifically optimized for unique business contexts.

Hybrid Becomes the Dominant Model

The future isn’t about choosing between public AI and private AI; it’s about architecting intelligent systems that leverage both strategically.

Leading enterprises will develop sophisticated orchestration layers that route requests to the optimal infrastructure based on data sensitivity, cost, and performance requirements.
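To picture what such an orchestration layer might do per request, consider the sketch below: it picks the cheapest backend that satisfies a sensitivity constraint and a latency budget. The `Backend` fields, the `route` function, and the two example backends with their prices and latencies are invented for illustration and don't describe any real product.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Backend:
    name: str
    is_private: bool
    cost_per_1k_tokens: float
    typical_latency_ms: int


def route(backends: list[Backend], sensitive: bool, max_latency_ms: int) -> Optional[Backend]:
    """Pick the cheapest backend that meets the sensitivity and latency constraints."""
    eligible = [
        b for b in backends
        if (b.is_private or not sensitive) and b.typical_latency_ms <= max_latency_ms
    ]
    # default=None signals that no backend can serve this request
    return min(eligible, key=lambda b: b.cost_per_1k_tokens, default=None)


# Hypothetical backends with made-up prices and latencies.
BACKENDS = [
    Backend("public-api", is_private=False, cost_per_1k_tokens=0.01, typical_latency_ms=800),
    Backend("private-cluster", is_private=True, cost_per_1k_tokens=0.03, typical_latency_ms=400),
]

for sensitive, budget_ms in [(True, 1000), (False, 1000), (False, 500), (True, 300)]:
    chosen = route(BACKENDS, sensitive, budget_ms)
    print(sensitive, budget_ms, chosen.name if chosen else None)
```

Sensitive requests never reach the public backend here, regardless of cost. That ordering of constraints before price is the design choice that matters most in this sketch.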

Edge AI Emerges

A third category will gain prominence: edge AI, where models run on local devices or edge infrastructure.

This approach combines private AI’s privacy benefits with public AI’s convenience, particularly for mobile and IoT applications.

Regulatory Frameworks Mature

Government regulations around AI will solidify, likely mandating private infrastructure for certain use cases while creating clearer guidelines for acceptable public AI usage.

This regulatory clarity will actually accelerate adoption by reducing uncertainty.

Conclusion: Enterprise Reality Is Hybrid

The question isn’t public AI vs private AI; it’s how to leverage both strategically to maximize value while managing risk.

Key Takeaway: The future is not public AI vs private AI.

It is public AI + private AI working together.

Organizations that succeed in the AI-driven economy will be those that develop sophisticated frameworks for deploying the right AI approach for each use case.

They’ll use public AI to accelerate innovation, experiment rapidly, and handle general workloads.

Simultaneously, they’ll invest in private AI for sensitive data, core business processes, and competitive differentiation.

This hybrid approach requires more sophisticated thinking than simply choosing one model or the other.

It demands clear governance frameworks, strong technical capabilities, and strategic clarity about which AI workloads create the most value for your specific business.

The enterprises that master this balance, capturing public AI’s speed and innovation while maintaining control through private AI where it matters, will lead their industries in the AI era.

The question for your organization isn’t which approach to choose, but how to thoughtfully combine both to achieve your strategic objectives.

Frequently Asked Questions (FAQs)

What is the main difference in the Public AI vs Private AI debate?

Public AI is hosted by third-party providers (like OpenAI or Google) and accessed via APIs, offering speed and convenience.

Private AI is deployed on your own infrastructure, providing greater control, data sovereignty, and customization.

Key difference: convenience vs. control.

Is public AI secure enough for enterprise use?

Public AI is secure for many enterprise use cases, especially with non-sensitive data, but it runs on shared third-party infrastructure.

For highly sensitive or regulated data (HIPAA, GDPR, financial data), private AI offers stronger security since data stays within your controlled environment.

How much does it cost to implement private AI?

Private AI typically requires an initial investment of $500K–$5M, covering GPUs, data pipelines, and operations.

At high usage (10-50M tokens/month), it can become more cost-effective than public AI, but organizations must plan for ongoing costs like specialized staff, maintenance, and power.

Can small and medium-sized businesses use private AI?

Private AI was once limited to large enterprises, but cloud-based dedicated infrastructure and smaller open-source models are making it more accessible.

Still, SMBs should assess whether the investment is justified compared to using public AI services.

What is hybrid AI, and why are most enterprises adopting it?

Hybrid AI combines public and private AI for different workloads.

Enterprises use public AI for experimentation and non-sensitive tasks, and private AI for sensitive data and core processes.

It’s not a compromise; it’s an optimization strategy.

Which industries benefit most from private AI?

Industries with strict regulatory requirements or handling sensitive data benefit most from private AI, including:

- Healthcare – Patient data protected by HIPAA

- Financial services – Transaction data and risk analysis systems

- Legal – Attorney-client privileged information

- Government – Classified or sensitive information

- Pharmaceuticals – Proprietary research and development data

How do I decide if my use case needs public or private AI?

Consider three dimensions:

- Data sensitivity – Does it involve regulated data, customer PII, or proprietary intelligence?

- Business criticality – Is AI core to your value proposition or a productivity tool?

- Volume and scale – Will you process enough requests to justify infrastructure investment?

If data is highly sensitive, AI is business-critical, or volume is substantial, lean toward private AI.

Otherwise, public AI likely offers better value.

What are the technical requirements for deploying private AI?

Key requirements include:

- GPU infrastructure (NVIDIA A100/H100 or equivalent)

- Data engineering pipelines for training and inference

- Model deployment systems (containerization, orchestration)

- MLOps/LLMOps platforms for operations and monitoring

- Specialized personnel (ML engineers, data scientists, platform engineers)

Without these capabilities, private AI deployments struggle to deliver value.

Can I migrate from public AI to private AI later?

Yes, but migration requires significant effort: retraining or fine-tuning models, rebuilding integrations, updating applications, and reworking workflows.

The more advanced your public AI setup, the more complex the shift.

Early strategic planning helps reduce future migration costs.

How is AI sovereignty related to private AI?

AI sovereignty means maintaining control over AI as strategic infrastructure.

It drives private AI adoption because relying on third-party providers can create vulnerability. Private AI ensures control over capabilities, data, and intellectual property.

Are open-source models good enough for enterprise use?

Modern open-source models like Llama 3 and Mistral are competitive with proprietary models for many use cases.

They’re ideal for private AI because they reduce vendor lock-in and allow full customization, but require strong technical expertise to deploy and optimize.

What role do cloud providers play in private AI?

Major cloud providers like AWS, Google Cloud, and Azure now support private AI with dedicated GPUs, VPC isolation, managed model deployment, and MLOps tools.

These services lower the barrier by offering enterprise-grade infrastructure without building your own data center.

How do I ensure compliance when using public AI?

To stay compliant with public AI: classify sensitive data, mask or anonymize it before use, verify provider certifications (SOC 2, ISO 27001), set clear usage policies, maintain audit logs, and review contracts legally.

For highly regulated or sensitive data, private AI is often the safest option.
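As a rough illustration of the masking step, the sketch below replaces email addresses and US-style Social Security numbers with placeholders before text is sent anywhere. The two patterns and the `mask_pii` helper are assumptions for this example; they catch only these two formats and are no substitute for a proper data classification or DLP tool.

```python
import re

# Deliberately simple patterns; real PII detection needs far broader coverage.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def mask_pii(text: str) -> str:
    """Replace email addresses and SSN-shaped numbers with fixed placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return SSN.sub("[SSN]", text)


masked = mask_pii("Contact jane.doe@example.com, SSN 123-45-6789, about the renewal.")
print(masked)
```

Masking like this works best as a gateway that all outbound prompts pass through, so that it doesn't depend on individual users remembering to redact.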

What is the future of public AI providers?

Public AI providers will evolve as platforms for productivity and general intelligence, offering specialized models, stronger privacy features, hybrid deployment options, and lower costs.

They won’t replace private AI; they’ll complement it for different use cases.

How long does it take to implement private AI?

Timeline varies significantly based on scope and existing capabilities:

- Pilot/POC: 2-3 months with existing infrastructure

- Production-ready deployment: 6-12 months, including infrastructure setup

- Full enterprise rollout: 12-24 months, including governance, training, and optimization

Organizations with strong ML engineering teams and cloud infrastructure can accelerate these timelines, while those building capabilities from scratch should expect longer implementations.