
As artificial intelligence reshapes entire industries, digital transformation accelerates beyond recognition, and customer expectations reach unprecedented heights.

Underpinning all of this is cloud infrastructure, now a basic necessity for managing the heavy data loads that enterprises consume and produce.

Cloud infrastructure represents the foundation upon which modern enterprises build their competitive edge.

It’s the invisible architecture powering everything from real-time AI inference to global content delivery, from serverless applications to containerized microservices.

In 2026, organizations that master cloud infrastructure aren’t just keeping pace; they’re defining the future of their industries.

This comprehensive guide is designed for technical and business leaders navigating the complexities of modern cloud infrastructure.

Whether you’re a CIO evaluating multi-cloud strategies, a CTO architecting next-generation platforms, a DevOps engineer optimizing deployment pipelines, or a cloud architect designing resilient systems, this guide provides the strategic insights and technical depth you need to make informed decisions.

The stakes have never been higher.

Get cloud infrastructure right, and you unlock unprecedented agility, innovation, and growth.

Get it wrong, and you face spiraling costs, security vulnerabilities, and competitive disadvantage.

What is Cloud Infrastructure?

Cloud infrastructure is the collection of hardware and software components (servers, storage, networking, virtualization, and orchestration tools) that enable the delivery of cloud computing services over the internet.

Unlike traditional on-premises infrastructure that organizations own and operate, cloud infrastructure is typically provided as a service, allowing businesses to consume computing resources on demand without managing the underlying physical assets.

At its core, cloud infrastructure abstracts away the complexities of physical hardware, transforming computing resources into programmable, scalable services.

Organizations can provision virtual servers in minutes, scale storage to petabytes, and deploy applications across global regions, all through simple API calls or web interfaces.

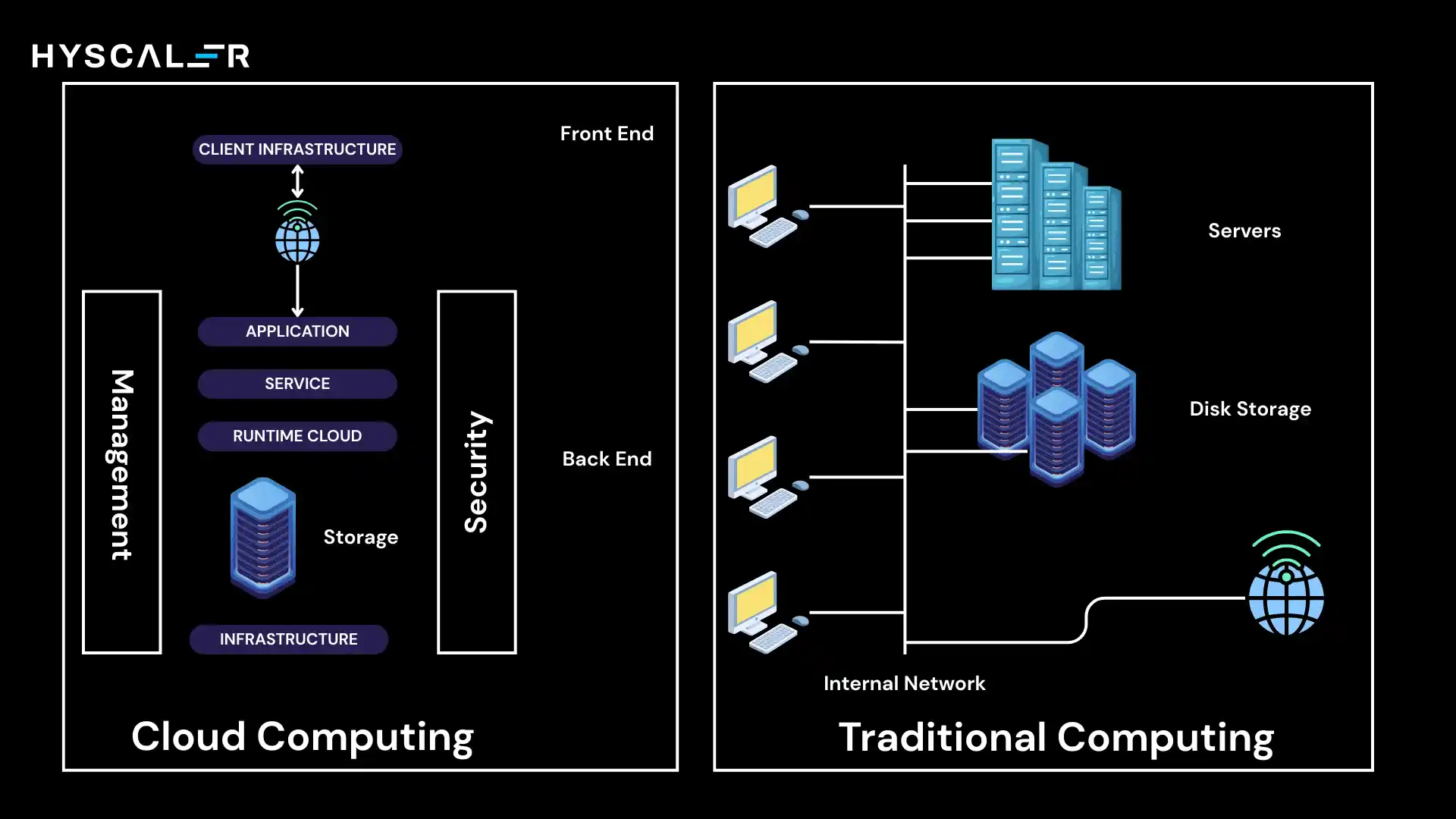

Traditional IT Infrastructure vs Cloud Infrastructure

The contrast between traditional and cloud infrastructure reveals a fundamental shift in how organizations think about technology:

Traditional infrastructure

Traditional infrastructure requires organizations to purchase, install, and maintain physical servers, storage arrays, and networking equipment in their own data centers.

This model demands significant upfront capital expenditure, long procurement cycles, and dedicated IT staff for hardware maintenance.

Scaling means buying more equipment, which takes weeks or months.

When demand decreases, that expensive hardware sits idle, representing sunk costs.

Cloud infrastructure

Cloud infrastructure inverts the traditional model entirely.

Resources are virtualized and pooled across shared physical infrastructure, managed by cloud providers.

Organizations access computing power, storage, and networking as utility services, paying only for what they use.

Scaling happens in minutes through software configuration.

Hardware maintenance, upgrades, and failures become the provider’s responsibility.

The shift from capital expenditure to operational expenditure fundamentally changes how businesses budget and plan for technology.

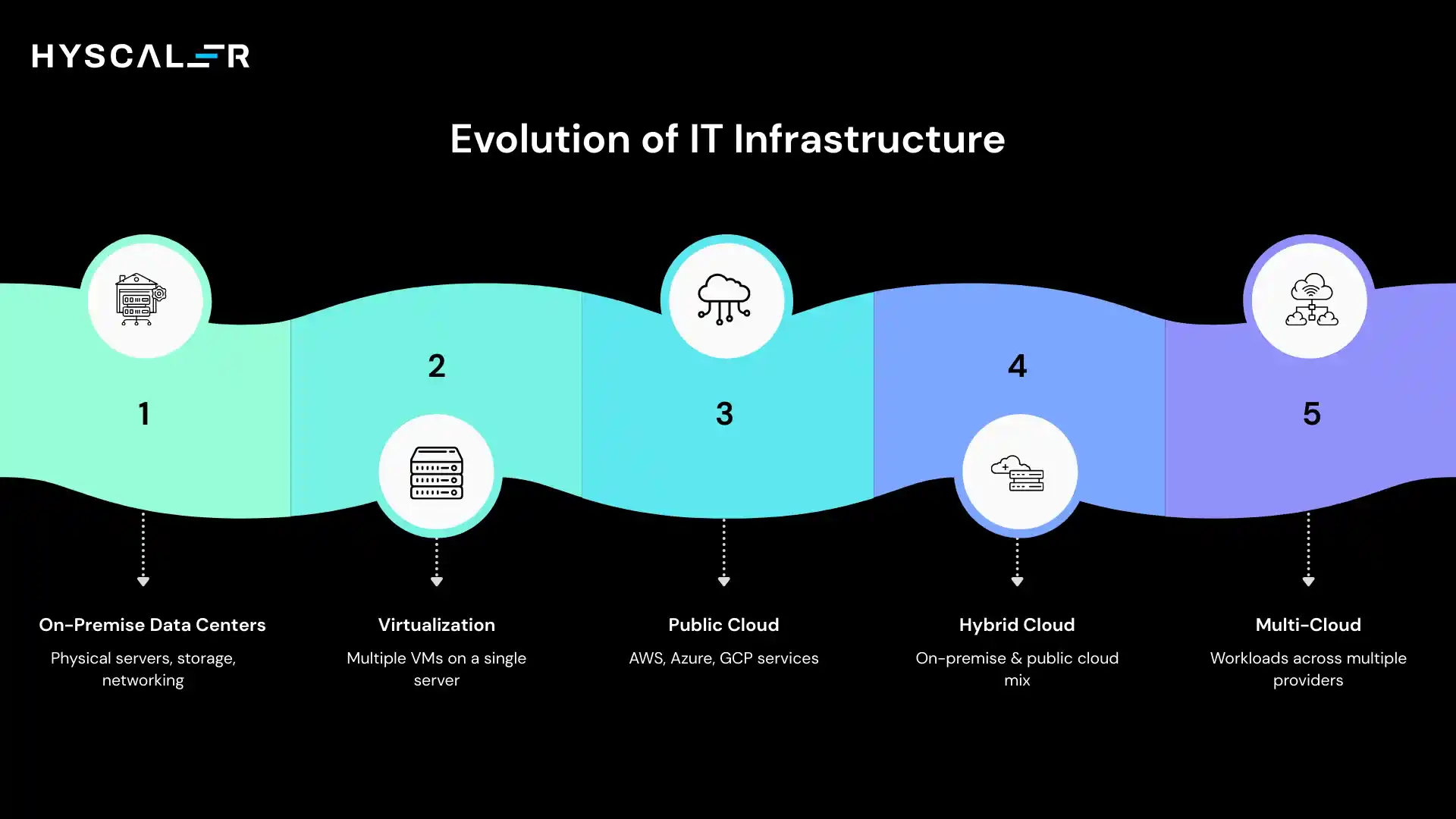

Evolution from On-Premise to Distributed Cloud

The journey to modern cloud infrastructure has been transformative.

Early 2000s: Enterprises relied on on-premise data centers.

Virtualization introduced abstraction, enabling multiple VMs on a single server.

2006: The launch of AWS began the public cloud era, later joined by Azure and GCP, evolving into full-service platforms.

2010s: Hybrid cloud enabled a mix of on-prem and cloud, while multi-cloud reduced vendor lock-in and optimized costs.

2026: Distributed cloud is emerging: cloud services are spread across locations but centrally managed, addressing latency, data sovereignty, and edge computing needs.

Cloud has evolved from centralized data centers to a globally distributed computing fabric.

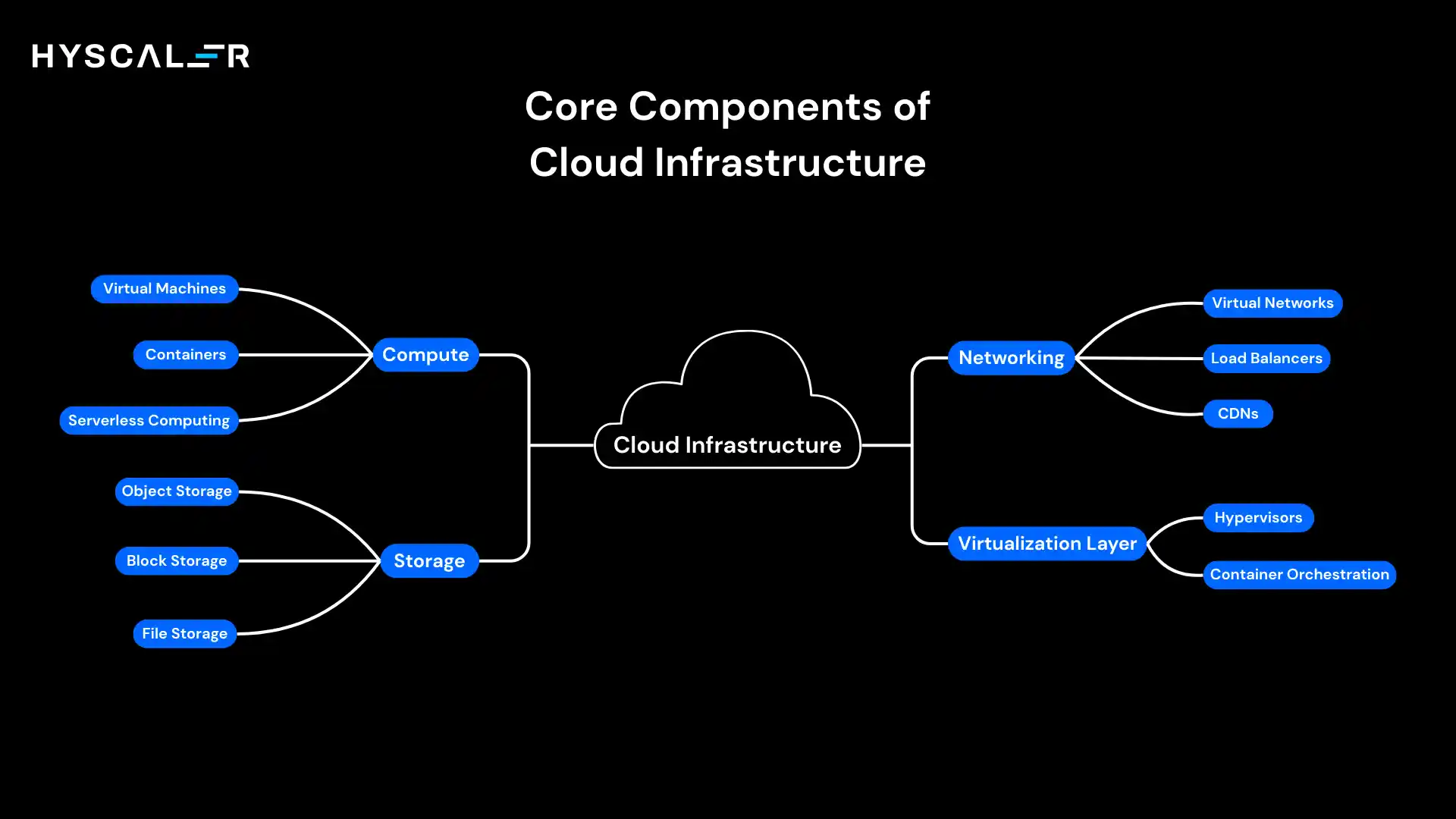

Core Components of Cloud Infrastructure

Modern cloud infrastructure is built on four foundational pillars that work in concert to deliver flexible, scalable computing services.

Understanding these components is essential for architecting effective cloud solutions.

Compute

Compute resources are the processing engines of cloud infrastructure, providing the CPU and memory needed to run applications and workloads.

Virtual Machines

Virtual machines (VMs) remain the workhorse of cloud computing in 2026.

VMs provide complete operating system environments running on virtualized hardware, offering familiar server experiences without physical constraints.

Organizations use VMs for everything from legacy application hosting to production databases.

Modern hyperscale providers offer hundreds of VM configurations optimized for different workloads, from memory-intensive data processing to compute-optimized scientific simulations.

Containers

Containers have revolutionized application deployment by packaging code and dependencies into lightweight, portable units.

Unlike VMs that virtualize entire operating systems, containers share the host OS kernel while maintaining isolated user spaces.

This efficiency enables organizations to run dozens of containers on resources that might support only a handful of VMs.

Container technology has become essential for microservices architectures and DevOps workflows.

Serverless Computing

Serverless computing represents the next abstraction layer, eliminating server management for developers.

Developers write functions that execute in response to events, with the cloud provider handling all provisioning, scaling, and maintenance.

Serverless architectures excel at event-driven workloads, API backends, and data processing pipelines, allowing teams to focus purely on business logic rather than infrastructure concerns.
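As a hedged illustration of the event-driven model, here is a function written in the style of an AWS Lambda Python handler (`handler(event, context)`) reacting to an object-upload event. The event shape follows the S3 notification format (object keys arrive URL-encoded); the processing step is a placeholder, and other providers use different handler signatures.

```python
import urllib.parse


def handler(event, context):
    """Invoked by the platform once per event; no servers are provisioned or managed here.

    Assumes an S3-style "object created" notification payload.
    """
    processed = []
    for record in event.get("Records", []):
        bucket = record["s3"]["bucket"]["name"]
        # Keys in S3 notifications are URL-encoded (spaces become "+").
        key = urllib.parse.unquote_plus(record["s3"]["object"]["key"])
        # Business logic (e.g. generating a thumbnail) would go here; this sketch just records the object.
        processed.append(f"{bucket}/{key}")
    return {"processed": processed}
```

Scaling is implicit: if a thousand uploads arrive at once, the platform runs as many concurrent invocations as its limits allow, and the code itself does not change.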

Storage

Cloud storage systems provide durable, scalable data persistence across different use cases and performance requirements.

Object Storage

Object storage has become the foundation of cloud-native applications, offering virtually unlimited scalability for unstructured data.

Files are stored as objects with metadata and unique identifiers, accessed through HTTP APIs.

Object storage excels at storing media files, backups, data lakes, and static website content.

Providers commonly design object storage for 99.999999999% ("eleven nines") annual durability, which makes it well suited to long-term data retention.
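To make the 99.999999999% durability figure concrete, here is a back-of-the-envelope calculation. It assumes, purely for illustration, that the figure is an annual per-object durability target, that object losses are independent, and that a bucket holds $N = 10^7$ objects:

```latex
\begin{aligned}
p_{\text{loss}} &= 1 - 0.99999999999 = 10^{-11} \quad \text{(per object, per year)} \\
\mathbb{E}[\text{objects lost per year}] &= N \, p_{\text{loss}} = 10^{7} \times 10^{-11} = 10^{-4} \\
\text{expected years between losses} &= 1 / 10^{-4} = 10^{4}
\end{aligned}
```

Durability is not the same as availability: a highly durable object can still be temporarily unreachable during an outage.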

Block Storage

Block storage provides high-performance persistent volumes that attach to virtual machines, functioning like traditional hard drives or SSDs.

Block storage supports databases, transactional applications, and any workload requiring low-latency random access.

Modern cloud block storage offers features like snapshots, encryption, and the ability to dynamically resize volumes without downtime.

File Storage

File storage delivers network-attached storage with traditional file system interfaces, enabling multiple compute instances to access shared file systems concurrently.

This capability supports legacy applications designed for NFS or SMB protocols, collaboration tools requiring shared access, and content management systems.

File storage bridges the gap between traditional infrastructure and cloud-native architectures.

Networking

Cloud networking components create the connective tissue that enables communication between resources and end users.

Virtual Networks

Virtual networks provide an isolated network environment within cloud infrastructure, allowing organizations to define IP address ranges, subnets, routing tables, and network gateways.

These software-defined networks enable the same network segmentation and security controls as physical networks, but with the flexibility to reconfigure instantly through code.
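As a minimal sketch of the address planning behind a virtual network, the snippet below uses Python's standard `ipaddress` module to split an assumed `10.0.0.0/16` range into `/24` subnets, one per hypothetical tier. The CIDR range and tier names are illustrative assumptions, not provider defaults.

```python
import ipaddress


def plan_subnets(vnet_cidr: str, tiers: list, new_prefix: int = 24) -> dict:
    """Carve one non-overlapping subnet of size /new_prefix out of the network for each tier."""
    vnet = ipaddress.ip_network(vnet_cidr)
    blocks = vnet.subnets(new_prefix=new_prefix)  # yields subnets in ascending order
    plan = {}
    for tier in tiers:
        block = next(blocks, None)
        if block is None:
            raise ValueError(f"{vnet_cidr} has no room left for tier {tier!r}")
        plan[tier] = block
    return plan


def contains(subnet, address: str) -> bool:
    """True if the IP address falls inside the subnet."""
    return ipaddress.ip_address(address) in subnet


if __name__ == "__main__":
    # Assumed address space and tier names, for illustration only.
    for tier, net in plan_subnets("10.0.0.0/16", ["public", "app", "data"]).items():
        print(f"{tier:>6}: {net} ({net.num_addresses} addresses)")
```

Running it assigns `10.0.0.0/24`, `10.0.1.0/24`, and `10.0.2.0/24` to the three tiers. Real providers typically reserve a few addresses in every subnet (AWS and Azure each reserve five), so usable capacity is slightly lower than `num_addresses`.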

Load Balancers

Load balancers distribute incoming traffic across multiple compute instances, ensuring high availability and optimal performance.

Modern cloud load balancers operate at different OSI layers, from simple Layer 4 TCP/UDP distribution to sophisticated Layer 7 HTTP routing based on URL paths, headers, or cookies.

Advanced features include SSL termination, health checking, and automatic scaling.
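The snippet below is a simplified, in-process sketch of two behaviors described above: round-robin distribution that skips backends failing health checks, and Layer 7 routing by URL path prefix. The class names `Backend`, `Pool`, and `PathRouter` are hypothetical; managed load balancers implement these policies in provider infrastructure, not in application code.

```python
import itertools
from dataclasses import dataclass


@dataclass
class Backend:
    name: str
    healthy: bool = True  # would be updated by periodic health checks


class Pool:
    """Round-robin over backends, skipping any that are currently failing health checks."""

    def __init__(self, backends: list):
        self.backends = backends
        self._cursor = itertools.cycle(backends)

    def pick(self) -> Backend:
        # Inspect each backend at most once per request so an all-unhealthy pool can't loop forever.
        for _ in range(len(self.backends)):
            candidate = next(self._cursor)
            if candidate.healthy:
                return candidate
        raise RuntimeError("no healthy backends in pool")


class PathRouter:
    """Layer 7-style routing: send each request to the pool with the longest matching path prefix."""

    def __init__(self, routes: dict):
        self.routes = routes

    def route(self, path: str) -> Backend:
        matches = [prefix for prefix in self.routes if path.startswith(prefix)]
        if not matches:
            raise LookupError(f"no route for {path}")
        return self.routes[max(matches, key=len)].pick()
```

For example, with routes `{"/": web_pool, "/api/": api_pool}`, a request for `/api/users` goes to the API pool while `/index.html` goes to the web pool. Because an unhealthy backend is only skipped, not removed, traffic returns to it as soon as health checks mark it healthy again.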

Content Delivery Networks (CDNs)

CDNs cache static content at edge locations worldwide, dramatically reducing latency for global users.

By serving content from servers near end users, CDNs accelerate website performance, reduce bandwidth costs, and improve user experience.

Modern CDNs also provide DDoS protection, WAF capabilities, and edge computing functions.
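The latency and bandwidth savings come from answering repeat requests at the edge instead of the origin. The toy cache below illustrates that mechanism with a fixed time-to-live (TTL); `EdgeCache` is a hypothetical name, and real CDNs derive freshness from HTTP headers such as `Cache-Control: max-age` rather than a single constant.

```python
import time
from typing import Callable, Dict, Tuple


class EdgeCache:
    """Toy edge cache: serve a stored copy until its TTL expires, then refetch from the origin."""

    def __init__(self, fetch_from_origin: Callable[[str], bytes], ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic):
        self.fetch_from_origin = fetch_from_origin
        self.ttl = ttl_seconds
        self.clock = clock  # injectable so expiry can be tested without sleeping
        self._store: Dict[str, Tuple[bytes, float]] = {}  # path -> (body, expires_at)
        self.hits = 0
        self.misses = 0

    def get(self, path: str) -> bytes:
        now = self.clock()
        cached = self._store.get(path)
        if cached is not None and now < cached[1]:
            self.hits += 1  # served from the edge: no round trip to the origin
            return cached[0]
        self.misses += 1
        body = self.fetch_from_origin(path)
        self._store[path] = (body, now + self.ttl)
        return body
```

With a 60-second TTL, every request for the same file within that minute is a cache hit; only the first request and the first one after expiry reach the origin.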

Virtualization Layer

The virtualization layer abstracts physical hardware into programmable resources, making cloud infrastructure possible.

Hypervisors

Hypervisors are the fundamental technology enabling virtual machines.

This software layer sits between the hardware and the guest operating systems, allowing multiple VMs to share physical resources safely and efficiently.

Type 1 hypervisors run directly on hardware, while Type 2 hypervisors run on host operating systems.

Cloud providers use highly optimized hypervisors like KVM, Xen, or proprietary solutions to maximize density and performance.

Container Orchestration

Platforms like Kubernetes have become indispensable for managing containerized applications at scale.

These systems handle container deployment, scaling, networking, and health monitoring across clusters of machines.

Kubernetes in particular has emerged as the de facto standard, offering declarative configuration, self-healing capabilities, and a rich ecosystem of extensions.

Container orchestration transforms complex distributed systems into manageable, resilient platforms.
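Kubernetes’ declarative, self-healing behavior comes from control loops that repeatedly compare desired state with observed state and act on the difference. The following is a deliberately simplified, single-pass sketch of that idea in plain Python; it does not use the Kubernetes API, and `Deployment` and `reconcile` here are hypothetical stand-ins.

```python
import itertools
from dataclasses import dataclass, field
from typing import List


@dataclass
class Deployment:
    """Declared (desired) state, loosely modeled on a replica count in a Kubernetes Deployment."""
    name: str
    replicas: int
    _ids: itertools.count = field(default_factory=itertools.count, repr=False)


def reconcile(spec: Deployment, running: List[str]) -> List[str]:
    """One pass of a control loop: converge the running replicas toward the declared count.

    Mutates `running` in place and returns a log of the actions taken.
    """
    actions = []
    while len(running) < spec.replicas:   # too few: start replacements (self-healing / scale up)
        pod = f"{spec.name}-{next(spec._ids)}"
        running.append(pod)
        actions.append(f"start {pod}")
    while len(running) > spec.replicas:   # too many: stop the newest replicas (scale down)
        pod = running.pop()
        actions.append(f"stop {pod}")
    return actions
```

A real orchestrator runs this comparison continuously, so a crashed container is replaced on the next pass without anyone issuing an imperative "restart" command.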

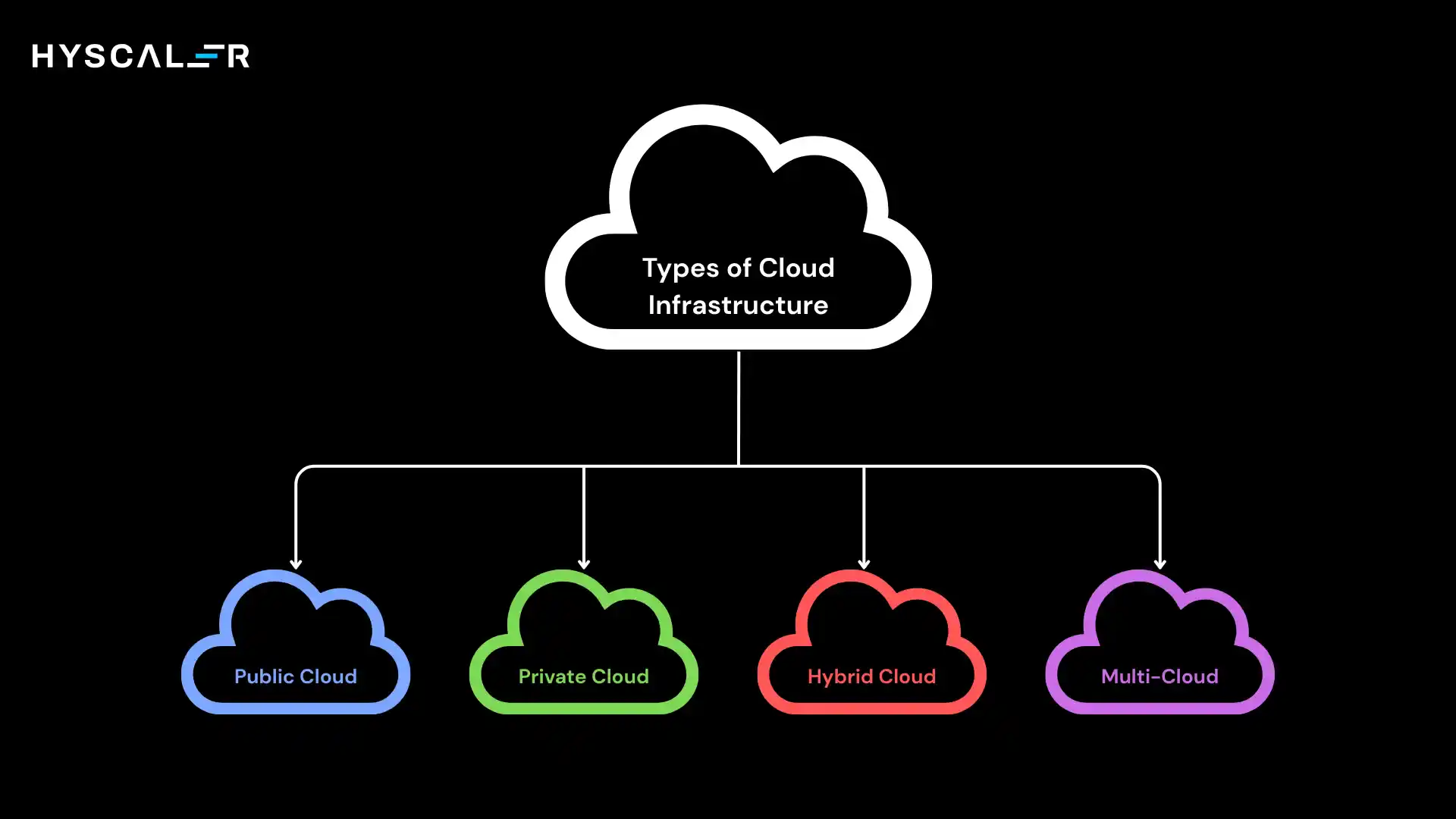

Types of Cloud Infrastructure

Organizations in 2026 rarely rely on a single cloud deployment model.

Instead, they strategically combine different types of infrastructure to meet diverse business, technical, and regulatory requirements.

Public Cloud

Public cloud infrastructure is owned by third-party providers and delivered over the internet using secure, multi-tenant architectures.

It offers on-demand scalability, global availability, and a pay-as-you-go model, turning fixed costs into flexible, usage-based spending.

With access to advanced services like AI/ML, analytics, and databases, organizations can innovate without heavy upfront investment.

Use cases range from startups building entirely in the cloud, to enterprises running dev/test environments, web applications, data analytics, and other elastic workloads, to SaaS platforms serving customers globally.

Public cloud is ideal where agility, elasticity, and speed matter more than strict data control or regulatory constraints.

Private Cloud

Private cloud infrastructure is dedicated to a single organization, deployed on-premises or hosted, offering cloud features like self-service, automation, and scalability with full control.

It’s ideal for industries with strict compliance and data sovereignty needs, such as finance, healthcare, government, or sensitive IP environments, and for legacy systems requiring specific setups.

The trade-off: higher upfront costs, ongoing maintenance, and potential underutilization due to fixed capacity.

It is best suited for workloads needing consistent performance, security, and complete governance.

Hybrid Cloud

Hybrid cloud integrates on-premises, private, and public clouds into a unified environment where data and applications move based on needs.

It enables flexibility: sensitive workloads stay on-prem, the public cloud provides scalability for customer-facing apps, and workloads can burst to the cloud during peak demand.

Organizations can modernize gradually, avoiding risky full migrations.

With unified management, security, and networking, teams can build once and deploy anywhere.

In 2026, hybrid cloud is a strategic, long-term approach to optimize performance, cost, and compliance.

Multi-Cloud Strategy

Multi-cloud distributes workloads across multiple providers (e.g., AWS, Azure, Google Cloud) to use best-of-breed services.

It reduces vendor lock-in, improves resilience against outages, and enables cost optimization and better negotiation leverage.

It also supports compliance needs and strengthens business continuity through provider and geographic diversity.

However, it adds complexity, requiring cross-platform expertise, consistent security, and integration efforts.

Successful multi-cloud strategies rely on strong abstraction, unified management, and standardized practices.

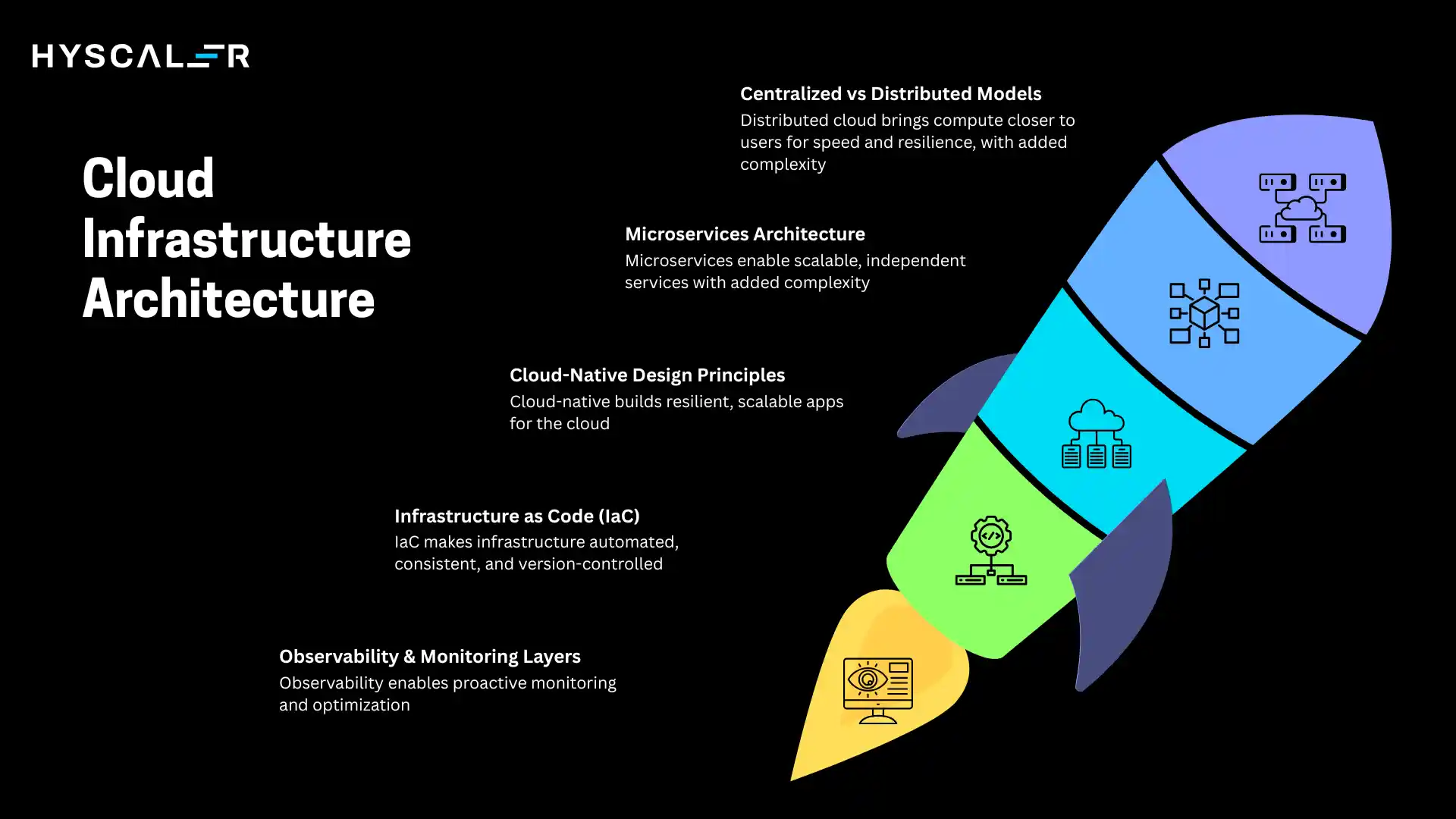

Cloud Infrastructure Architecture

The architecture of cloud infrastructure has evolved dramatically from simple lift-and-shift approaches to sophisticated, cloud-native designs that fully exploit modern platform capabilities.

Centralized vs Distributed Models

Traditional cloud deployments relied on centralized regional data centers, which are simpler to manage but add latency for distant users and can become single points of failure.

Distributed cloud spreads infrastructure across regions, edge locations, and on-prem sites while keeping centralized control.

This reduces latency, supports data residency, and improves resilience through failover.

Trade-offs include higher complexity and networking challenges.

In 2026, organizations blend both centralized control and edge deployment for real-time, latency-sensitive workloads.

Microservices Architecture

Microservices break applications into loosely coupled, independently deployable services, each handling a specific function and communicating via APIs.

They enable independent scaling, faster deployments, and flexibility in technology choices.

This aligns with cloud capabilities, scaling only what’s needed and avoiding large release cycles.

However, microservice architecture requires strong supporting infrastructure like API gateways, service discovery, monitoring, and resilient communication.

Containers and serverless platforms make managing large-scale microservices practical.
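"Resilient communication" often means protecting callers from a failing downstream service. One widely used pattern for this is the circuit breaker; it is our choice of example, not something the section prescribes. The sketch below is single-threaded, and `CircuitBreaker` is a hypothetical class with an injectable clock for testing.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(RuntimeError):
    """Raised instead of calling the downstream service while the circuit is open."""


class CircuitBreaker:
    """After `max_failures` consecutive errors, fail fast for `reset_after` seconds,
    then let a single trial call through to see whether the service has recovered."""

    def __init__(self, max_failures: int = 3, reset_after: float = 30.0,
                 clock: Callable[[], float] = time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn: Callable[[], T]) -> T:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                raise CircuitOpenError("circuit open; not calling downstream service")
            self.opened_at = None  # cool-down over: allow a trial ("half-open") call
        try:
            result = fn()
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()  # trip (or re-trip) the breaker
            raise
        self.failures = 0  # a success closes the circuit
        return result
```

Failing fast keeps threads and connections from piling up behind a dead dependency, which is what turns one struggling microservice into a cascading outage.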

Cloud-Native Design Principles

Cloud-native design builds applications specifically for the cloud, not adapted from traditional systems.

It emphasizes resilience (designing for failure), stateless services, immutable infrastructure, and declarative configuration for automation and consistency.

Applications leverage managed services to reduce operational overhead and focus on innovation.

Strong observability (metrics, logs, traces) and built-in security are core to managing complex distributed systems.

Infrastructure as Code (IaC)

Infrastructure as Code (IaC) treats infrastructure like software: it is defined in code, version-controlled, tested, and deployed via automation instead of manual setup.

Tools like Terraform, AWS CloudFormation, and Azure Resource Manager (ARM) templates enable consistent, repeatable provisioning and greatly reduce configuration drift.

IaC allows identical environments across dev, test, and production, with changes following code review processes.

It improves quality through testing, audit trails, and easy disaster recovery by recreating environments from code.

In 2026, IaC is a standard practice for modern cloud operations.
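At the heart of most IaC workflows is a comparison between the state declared in code and the state that actually exists, which is also how drift is detected. The sketch below shows that comparison in plain Python; it is not how Terraform or CloudFormation are implemented, and the resource names and attributes are made up.

```python
from typing import Any, Dict, List, Tuple

Resources = Dict[str, Dict[str, Any]]  # resource name -> configured attributes


def plan(desired: Resources, actual: Resources) -> List[Tuple[str, str]]:
    """Diff the state declared in code against the deployed state.

    A resource that exists in both but was changed outside of code shows up
    as an "update", which is how configuration drift surfaces.
    """
    changes = []
    for name in sorted(desired.keys() | actual.keys()):
        if name not in actual:
            changes.append(("create", name))
        elif name not in desired:
            changes.append(("delete", name))
        elif desired[name] != actual[name]:
            changes.append(("update", name))
    return changes
```

Once the changes are applied, running `plan` again should return an empty list; that property is what lets teams recreate identical environments from the same code.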

Observability & Monitoring Layers

Modern cloud systems generate massive volumes of telemetry (metrics, logs, and traces) that teams use to understand performance and behavior.

Metrics show system health, logs capture events, and tracing tracks requests across services.

Observability platforms correlate this data, enabling faster debugging, root cause analysis, and performance optimization.

With AI-driven insights and anomaly detection, teams can identify issues before users are impacted.

In 2026, observability is proactive, driving optimization, cost efficiency, and better user experience, making it as critical as the infrastructure itself.
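Commercial AI-driven anomaly detection is far more sophisticated, but the core idea can be illustrated with a rolling z-score over a latency metric. This is a hypothetical, minimal sketch; the window size and threshold are arbitrary assumptions.

```python
import statistics
from typing import List


def find_anomalies(samples: List[float], window: int = 20, threshold: float = 3.0) -> List[int]:
    """Return indices of samples more than `threshold` standard deviations away
    from the mean of the preceding `window` samples (a rolling z-score)."""
    anomalies = []
    for i in range(window, len(samples)):
        history = samples[i - window:i]
        mean = statistics.fmean(history)
        stdev = statistics.pstdev(history)
        deviation = abs(samples[i] - mean)
        if stdev == 0:
            is_anomaly = deviation > 0  # any change from a perfectly flat baseline
        else:
            is_anomaly = deviation / stdev > threshold
        if is_anomaly:
            anomalies.append(i)
    return anomalies
```

Fed a latency series that hovers around 100 ms with a single 450 ms spike, the function flags only the spike. Treating any change from a perfectly flat baseline as anomalous is a deliberate simplification; production systems also account for seasonality and trends.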

Benefits of Cloud Infrastructure

The migration to cloud infrastructure delivers tangible advantages that extend far beyond simple cost savings, fundamentally transforming how organizations operate and compete.

Scalability & Elasticity

Cloud infrastructure removes fixed capacity limits with on-demand scalability.

Resources can automatically scale up or down based on demand, at the application, environment, or global level.

This eliminates costly capacity planning and idle infrastructure.

Startups can scale from zero to millions, while enterprises handle spikes without overprovisioning.

Elasticity enables flexible, cost-efficient growth and new business models.

Cost Optimization (CapEx → OpEx)

Cloud shifts spending from capital expenditure (CapEx) to operational expenditure (OpEx).

Organizations pay only for what they use, aligning costs with business growth and eliminating large upfront investments.

This reduces financial risk; failed projects don’t leave costly unused hardware.

It also enables continuous rightsizing and gives finance teams clear visibility into usage and spending.

High Availability

Cloud providers design for high reliability with built-in redundancy and strong SLAs.

Multi-zone deployments, global load balancing, and automated failover minimize downtime.

Well-architected multi-zone deployments can target 99.99% or higher availability, with maintenance and disaster recovery handled across zones and regions.

Organizations can deliver highly available services, building trust and competitive advantage.
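A quick way to sanity-check availability targets such as 99.99% is to translate them into allowed downtime and to estimate how redundancy changes the picture. The sketch below assumes independent failures between redundant copies, which real zones only approximate.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600; ignores leap years


def downtime_minutes_per_year(availability: float) -> float:
    """Maximum yearly downtime permitted by an availability target (e.g. 0.9999)."""
    if not 0.0 <= availability <= 1.0:
        raise ValueError("availability must be between 0 and 1")
    return (1 - availability) * MINUTES_PER_YEAR


def parallel_availability(component: float, copies: int) -> float:
    """Availability of `copies` redundant components where any one suffices,
    assuming their failures are independent (an optimistic assumption)."""
    return 1 - (1 - component) ** copies
```

A 99.99% target allows roughly 52.6 minutes of downtime per year, while 99.9% allows about 525.6 minutes (almost nine hours). On paper, two independent 99% components in parallel reach 99.99%; shared dependencies and correlated failures make real-world figures lower.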

Global Deployment

Cloud infrastructure offers instant global reach without building physical data centers.

Applications can run across multiple regions, with CDNs caching content at edge locations for faster performance.

This enables international expansion, supports data residency compliance, and ensures cross-region redundancy.

Cloud removes geographic barriers, making global scale accessible to any organization.

Faster Innovation Cycles

The most transformative benefit is accelerated innovation.

Services deploy in minutes, not months.

Developers experiment freely with low-cost, temporary resources, making failure inexpensive and enabling breakthrough ideas.

With managed services, AI/ML, databases, analytics, and IoT available via APIs, teams spend less time on infrastructure and more on delivering business value.

This democratization of technology empowers small teams to build at the scale of large enterprises.

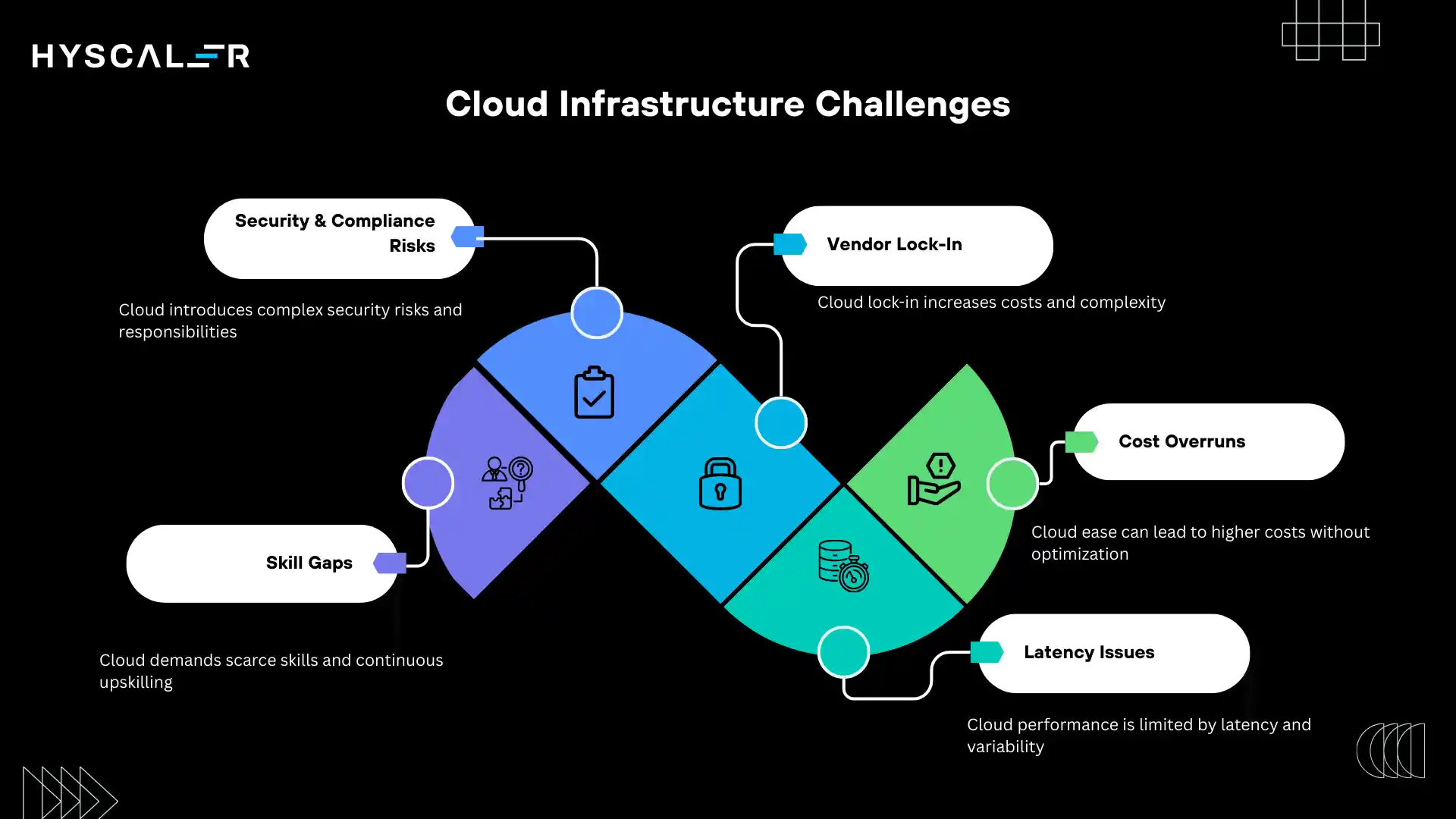

Cloud Infrastructure Challenges

While cloud infrastructure delivers substantial benefits, organizations must navigate significant challenges to realize its full potential.

Security & Compliance Risks

Cloud infrastructure brings new security challenges.

Under the shared responsibility model, providers secure the infrastructure, while organizations must protect their applications and data.

Misconfigurations, like public storage or excessive permissions, remain a leading cause of breaches.

Compliance is more complex due to multi-jurisdiction data storage and strict requirements for residency, encryption, and auditing, all within a constantly changing environment.

Additionally, API-driven systems expand attack surfaces, compromised credentials can enable large-scale damage, and insider risks extend to cloud provider personnel.

Vendor Lock-In

Cloud providers differ significantly, creating switching costs and potential lock-in.

Proprietary services and APIs often lack direct equivalents, making migrations complex and requiring major refactoring.

Lock-in also extends to team expertise, tools, and processes tied to specific platforms.

While strategies like multi-cloud, abstraction layers, and Kubernetes reduce dependency, they add complexity and limit access to provider-specific innovations.

Organizations must balance lock-in risks with the benefits of leveraging unique cloud capabilities.

Cost Overruns

The ease of provisioning cloud resources often leads to cost challenges.

Unused resources, over-provisioning, underutilized capacity, and data transfer fees can quickly drive up expenses.

Complex pricing models also make cost prediction difficult.

Lift-and-shift migrations often increase costs if applications aren’t optimized for cloud environments.

Real savings require continuous optimization and strong cost governance.

Latency Issues

Despite global cloud infrastructure, physical limits still impact performance.

Ultra-low latency applications struggle with geographically distributed users and data, while chatty applications suffer from multiple sequential API calls.

Shared infrastructure can cause performance variability, and complex network paths may introduce unpredictable latency spikes.

Skill Gaps

Cloud infrastructure demands new skills beyond traditional IT.

Teams must master cloud-native design, provider-specific services, infrastructure as code, and distributed systems, expertise that’s still scarce.

Organizations struggle to hire and retain talent, while existing teams need reskilling and mindset shifts.

With platforms evolving rapidly, continuous learning is essential.

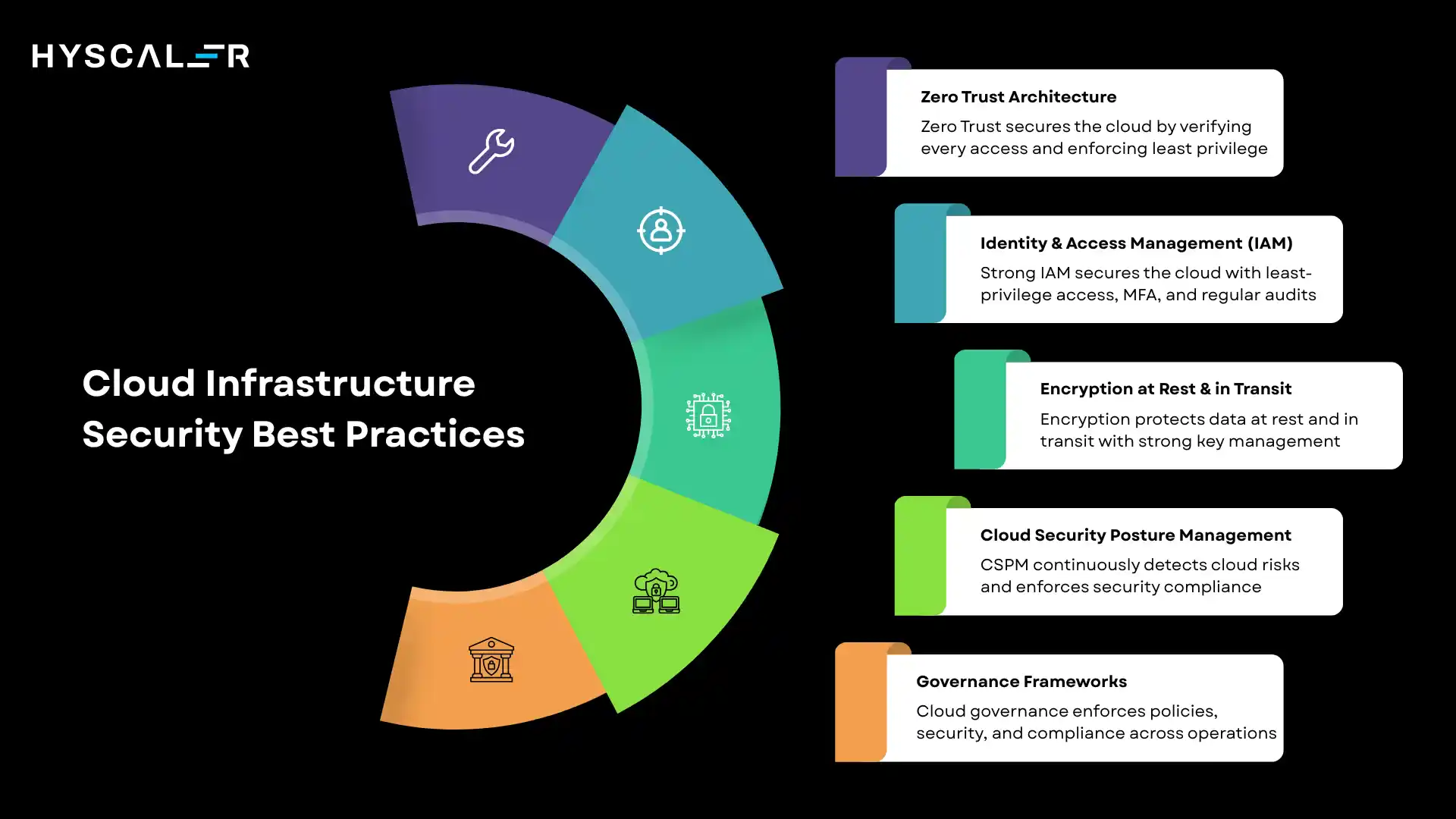

Cloud Infrastructure Security Best Practices

Securing cloud infrastructure requires a comprehensive approach that addresses the unique characteristics of cloud environments while maintaining rigorous standards.

Zero Trust Architecture

Zero Trust assumes no network is inherently trusted, requiring verification for every access request.

It fits cloud environments where perimeters are porous. Implementation includes strict authentication and authorization, least-privilege access, microsegmentation, and continuous monitoring.

This approach helps protect against compromised credentials, insider threats, and attacks that bypass traditional defenses.

Identity & Access Management (IAM)

Robust IAM is the foundation of cloud security.

Use role-based access control to grant least-privilege access, and rely on roles or service accounts instead of embedded credentials.

Best practices include MFA, regular permission audits, just-in-time access for privileged tasks, and fine-grained policies.

Treat IAM policies like code: version, test, and review them consistently.
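Least privilege is easiest to reason about with a default-deny evaluator. The following hypothetical sketch is loosely modeled on the common cloud IAM convention that anything not explicitly allowed is denied and that an explicit deny overrides any allow. The action and resource naming scheme is invented and does not match any specific provider’s policy syntax.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase
from typing import List, Tuple


@dataclass(frozen=True)
class Statement:
    effect: str                  # "Allow" or "Deny"
    actions: Tuple[str, ...]     # glob patterns, e.g. "storage:Get*"
    resources: Tuple[str, ...]   # glob patterns, e.g. "bucket/reports/*"


def _matches(patterns: Tuple[str, ...], value: str) -> bool:
    return any(fnmatchcase(value, pattern) for pattern in patterns)


def is_allowed(policy: List[Statement], action: str, resource: str) -> bool:
    """Default deny; an explicit Deny overrides any Allow."""
    applicable = [s for s in policy
                  if _matches(s.actions, action) and _matches(s.resources, resource)]
    if any(s.effect == "Deny" for s in applicable):
        return False
    return any(s.effect == "Allow" for s in applicable)
```

Because policies are plain data here, they can be unit-tested like any other code, which is one practical way to "treat IAM policies like code".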

Encryption at Rest & in Transit

Data encryption protects sensitive information throughout its lifecycle.

Encryption at rest secures stored data, while encryption in transit uses TLS to protect data in motion.

Best practices include envelope encryption, key rotation, and separating keys across security domains; encryption should be the default, not an option.

Cloud Security Posture Management (CSPM)

CSPM tools continuously monitor cloud environments for misconfigurations, policy violations, and risks.

They identify issues like public exposure, excessive permissions, and missing encryption, and integrate with pipelines to prevent problems before deployment.

With multi-cloud visibility, compliance mapping, and remediation guidance, CSPM shifts security from periodic audits to continuous validation.
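The core of a CSPM check is a set of rules evaluated against an inventory of resource configurations. The sketch below is a hypothetical, minimal version covering the three issues named above; the rule IDs and resource fields are invented for illustration and do not correspond to any real tool’s schema.

```python
from typing import Any, Dict, List, Tuple

# Hypothetical rules: (rule id, description, predicate returning True when the resource is non-compliant).
RULES = [
    ("public-storage", "Storage bucket is publicly readable",
     lambda r: r.get("type") == "bucket" and r.get("public_read", False)),
    ("unencrypted", "Encryption at rest is disabled",
     lambda r: r.get("type") in {"bucket", "volume"} and not r.get("encrypted", False)),
    ("wildcard-permissions", "Role grants all actions ('*')",
     lambda r: r.get("type") == "role" and "*" in r.get("actions", [])),
]


def scan(resources: List[Dict[str, Any]]) -> List[Tuple[str, str]]:
    """Return (resource name, rule id) pairs for every rule a resource violates."""
    findings = []
    for resource in resources:
        for rule_id, _description, is_violation in RULES:
            if is_violation(resource):
                findings.append((resource["name"], rule_id))
    return findings
```

Run in a CI pipeline against planned configurations, a non-empty `scan` result can fail the build, which is how such checks catch problems before deployment rather than after.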

Governance Frameworks

Effective governance defines policies and processes for secure cloud operations.

This includes resource tagging, approved service catalogs, change management, and audit trails.

With automated policy enforcement and security integrated into CI/CD, governance becomes continuous.

Regular audits ensure compliance with internal standards and regulations.

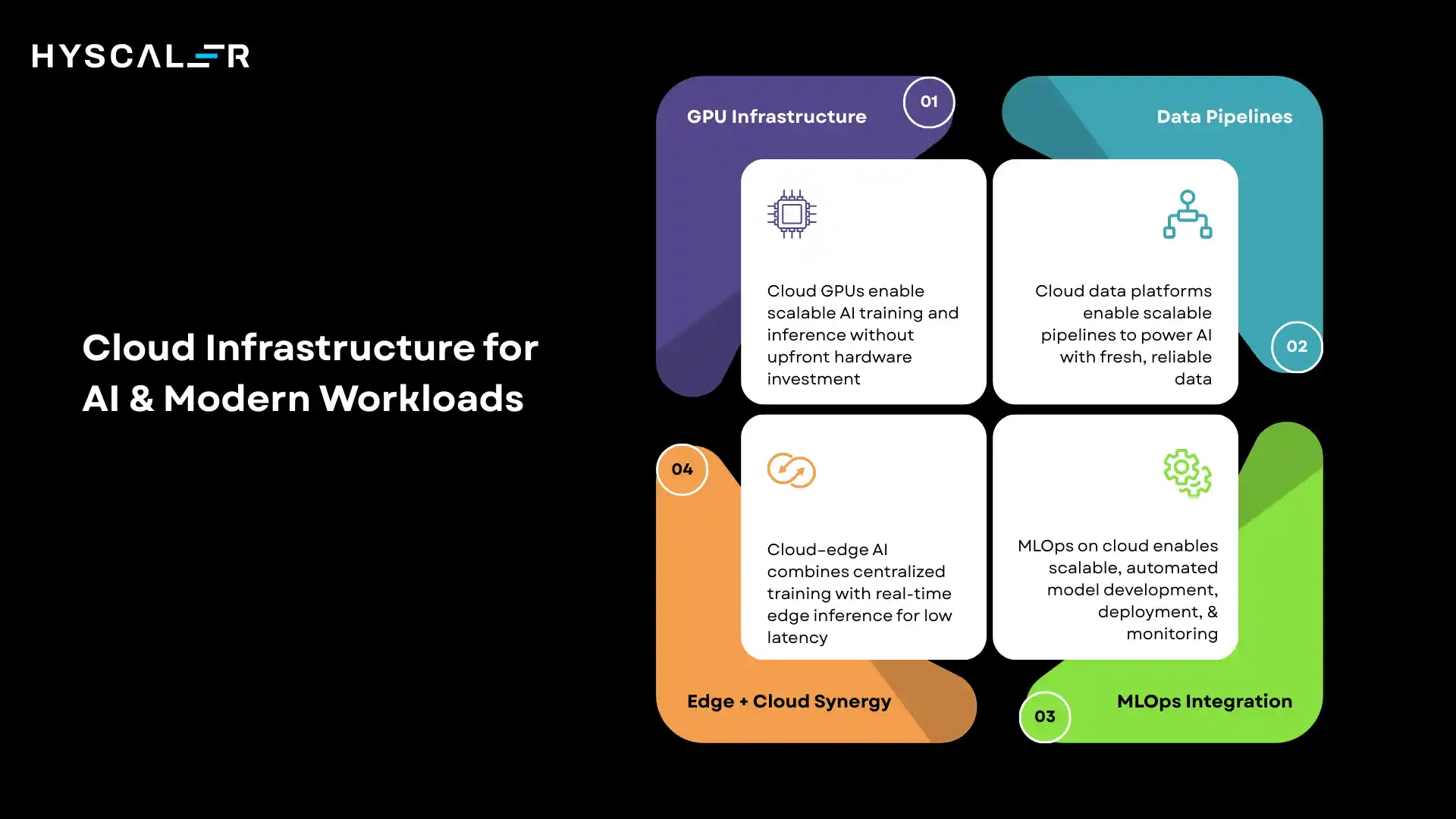

Cloud Infrastructure for AI & Modern Workloads

Cloud infrastructure has evolved specifically to support the computational demands and data requirements of artificial intelligence and emerging workload types.

GPU Infrastructure

Modern cloud platforms offer GPU-powered instances for parallel processing.

They enable training large AI models, running computer vision, scientific simulations, and real-time inference.

With no upfront cost, organizations can scale GPU clusters on demand and choose instance types optimized for training or cost-efficient inference.

Data Pipelines

AI workloads require advanced data infrastructure to handle large-scale data.

Cloud platforms offer managed services for pipelines, streaming ingestion, distributed processing, data lakes/warehouses, and feature stores.

These tools reduce operational complexity, enabling teams to build end-to-end pipelines that ensure data quality, lineage, and scalable model serving.

MLOps Integration

MLOps applies DevOps principles to machine learning, standardizing model development, deployment, and monitoring.

Cloud platforms support this with experiment tracking, model versioning, automated training, model registries, and deployment pipelines.

These capabilities enable rapid iteration, reproducibility, and continuous monitoring, reducing the gap between development and production.

Edge + Cloud Synergy

Advanced AI deployments combine cloud and edge.

The cloud handles large-scale model training, while the edge enables low-latency, real-time inference near data sources.

This hybrid approach supports use cases like IoT, retail, and healthcare, with the cloud managing edge devices, updating models, and aggregating insights.

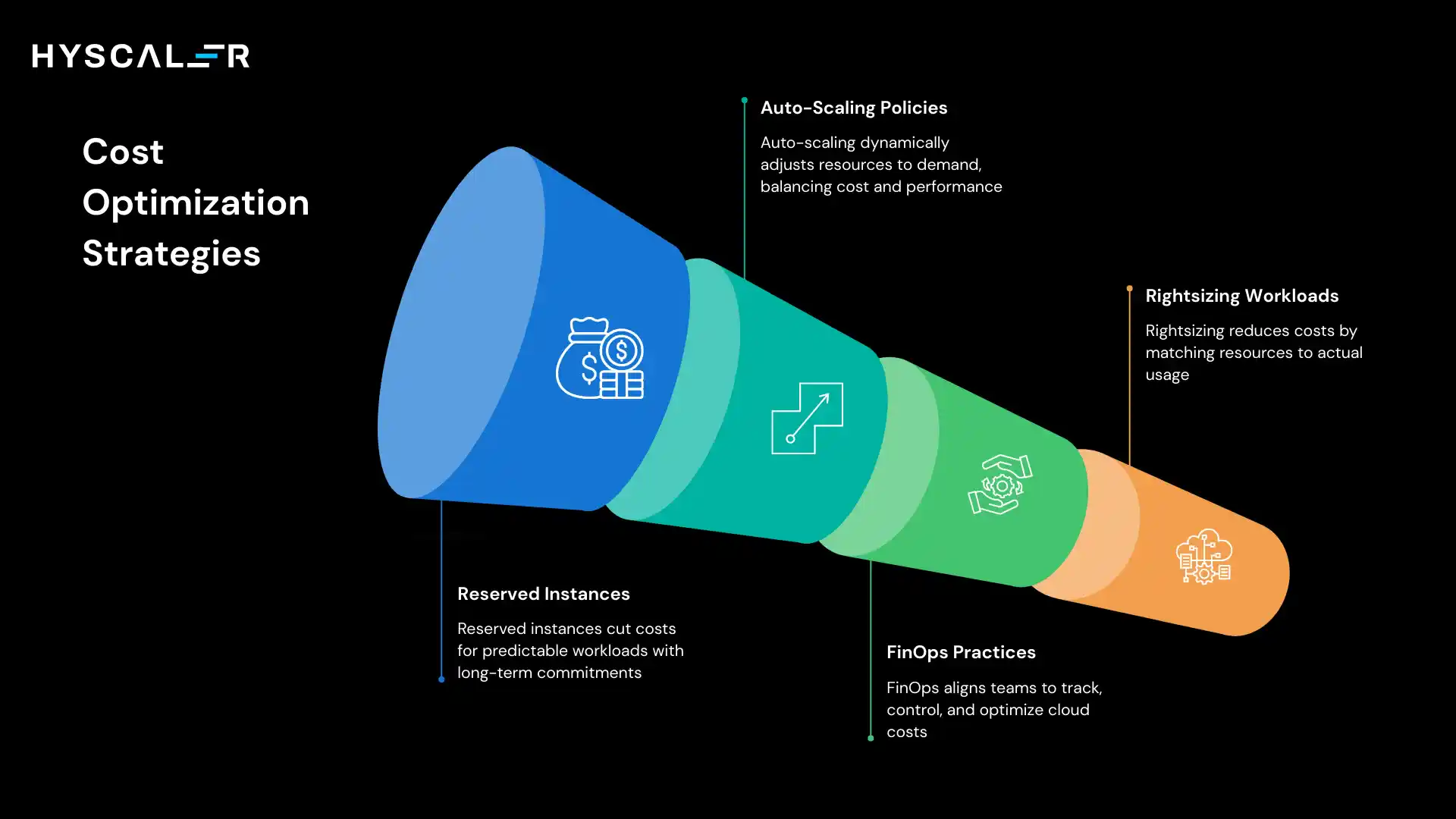

Cost Optimization Strategies

Effective cost management requires continuous attention and sophisticated approaches that go beyond simply turning off unused resources.

Reserved Instances

Reserved instances typically offer 30–70% savings over on-demand pricing in exchange for a 1- or 3-year usage commitment.

They suit predictable, steady workloads.

Organizations analyze usage to identify candidates, balance commitment vs. flexibility, and use convertible options when needs may change.

On some platforms, such as AWS, unused reservations can also be resold through a marketplace.
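Why reservations suit steady workloads follows from simple arithmetic: a reservation is paid for every hour of its term, while on-demand is paid only for hours actually used. The sketch below uses hypothetical hourly prices (not from any provider’s price list) and ignores upfront-payment options and other discounts.

```python
HOURS_PER_YEAR = 8760


def reservation_savings(on_demand_hourly: float, reserved_effective_hourly: float,
                        utilization: float, hours: float = HOURS_PER_YEAR) -> float:
    """Yearly savings (positive) or loss (negative) from reserving one instance.

    `utilization` is the fraction of hours the workload would actually run on demand.
    """
    on_demand_cost = on_demand_hourly * hours * utilization
    reserved_cost = reserved_effective_hourly * hours  # billed whether used or not
    return on_demand_cost - reserved_cost


def break_even_utilization(on_demand_hourly: float, reserved_effective_hourly: float) -> float:
    """Utilization above which the reservation becomes cheaper than on-demand."""
    return reserved_effective_hourly / on_demand_hourly
```

With an assumed $0.10/hour on-demand price and a $0.06/hour effective reserved price, the break-even point is 60% utilization: an always-on instance saves about $350 per year, while one that runs only half the time loses money on the reservation.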

Auto-Scaling Policies

Auto-scaling adjusts resources in real time based on metrics like CPU, traffic, or queue depth, while predictive scaling uses historical data to anticipate demand.

Proper configuration is key: policies that are too conservative waste money, while overly aggressive ones risk performance issues.

Teams continuously test and refine policies to balance cost and performance.
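A common form of metric-based scaling is target tracking: resize the fleet so the per-instance metric moves toward a target value. The sketch below uses the ratio documented for the Kubernetes Horizontal Pod Autoscaler, `ceil(current_replicas * current_metric / target_metric)`, clamped to assumed minimum and maximum bounds; the function name and defaults are hypothetical.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                     min_replicas: int = 1, max_replicas: int = 20) -> int:
    """Target-tracking scaling: size the fleet so the per-replica metric approaches the target.

    Result is clamped to [min_replicas, max_replicas].
    """
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))
```

For example, 4 replicas averaging 90% CPU against a 60% target scale out to 6, while the same 4 replicas at 30% scale in to 2. Production autoscalers add safeguards this sketch omits, such as a tolerance band and stabilization windows, so small fluctuations do not cause constant resizing.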

FinOps Practices

FinOps brings financial accountability to cloud operations by aligning engineering, finance, and business teams.

It includes real-time cost visibility, chargeback models, anomaly detection, and usage-based optimization.

Cost becomes a core KPI, with engineers optimizing usage and finance collaborating on cost-efficient architecture decisions.
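Chargeback depends on attributing each billed line item to an owner, usually via resource tags. The sketch below assumes a simplified, hypothetical billing export where each item carries a `cost` and a `tags` dictionary; real provider billing exports have their own schemas.

```python
from collections import defaultdict
from typing import Dict, List


def chargeback(line_items: List[dict], tag_key: str = "team") -> Dict[str, float]:
    """Allocate billed cost to owners by tag.

    Untagged spend is reported under "UNTAGGED" so it can be chased down
    rather than silently absorbed by a shared budget.
    """
    totals: Dict[str, float] = defaultdict(float)
    for item in line_items:
        owner = item.get("tags", {}).get(tag_key, "UNTAGGED")
        totals[owner] += item["cost"]
    return {owner: round(cost, 2) for owner, cost in sorted(totals.items())}
```

The size of the `UNTAGGED` bucket is itself a useful governance metric: if it grows, tagging policies are not being enforced.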

Rightsizing Workloads

Rightsizing reduces costs by aligning resources with actual usage.

It involves monitoring utilization, identifying over-provisioned resources, testing smaller instances, and applying automated recommendations.

Continuous reviews and gradual changes ensure savings without impacting performance.
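The rightsizing steps above can be sketched as a small recommendation function. It sizes against 95th-percentile CPU utilization rather than the average, so brief peaks are respected, and keeps an assumed 70% headroom. The instance catalog is hypothetical and ignores memory, network, and pricing.

```python
import math
from typing import List

# Hypothetical catalog of sizes in one instance family: name -> vCPUs, smallest first.
CATALOG = {"small": 2, "medium": 4, "large": 8, "xlarge": 16}


def p95(values: List[float]) -> float:
    """95th percentile by the nearest-rank method."""
    if not values:
        raise ValueError("need at least one sample")
    ordered = sorted(values)
    rank = math.ceil(len(ordered) * 95 / 100)  # multiplying first avoids rounding error from 0.95
    return ordered[rank - 1]


def recommend(current: str, cpu_util_pct: List[float], headroom: float = 0.7) -> str:
    """Smallest size whose vCPUs keep p95 demand at or below `headroom` of capacity."""
    needed_vcpus = CATALOG[current] * p95(cpu_util_pct) / 100
    for name, vcpus in CATALOG.items():  # dicts keep insertion order: smallest first
        if needed_vcpus <= vcpus * headroom:
            return name
    return list(CATALOG)[-1]  # even the largest size is tight; flag for review
```

An `xlarge` instance whose p95 CPU sits at 12% needs about 1.9 vCPUs, so the sketch recommends `medium`. The same logic can also recommend upsizing when a small instance runs hot, which is why rightsizing changes should be applied gradually and verified.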

Cloud Infrastructure vs Traditional Infrastructure

The fundamental differences between cloud and traditional infrastructure extend beyond deployment location to operational philosophy and business model.

| Feature | Traditional | Cloud |
|---|---|---|
| Scalability | Limited by physical capacity: requires weeks/months to expand | Elastic: scale up/down in minutes based on demand |
| Cost Model | CapEx: large upfront investment in hardware; 3–5 year amortization | OpEx: pay-as-you-go; costs align with actual usage |
| Deployment Speed | Slow: procurement, installation, and configuration take weeks | Rapid: provision resources in minutes via API or console |
| Maintenance | Manual: requires dedicated staff for hardware, updates, and patching | Automated: provider handles infrastructure maintenance |
| Geographic Reach | Limited to owned data center locations | Global: deploy across dozens of regions worldwide |
| Disaster Recovery | Expensive: requires duplicate infrastructure in separate locations | Built-in: leverage provider redundancy and replication |
| Innovation Access | Slow: new capabilities require procurement and deployment | Immediate: new services available as they launch |
| Capacity Planning | Must forecast and build for peak demand, which leads to overprovisioning | Dynamic: match resources to actual demand patterns |
| Time to Market | Months from concept to production | Days or weeks from concept to production |
| Resource Utilization | Often 10–30% utilization; hardware sits idle | Optimized: pay only for consumed resources |

Future Trends in Cloud Infrastructure (2026 & Beyond)

Cloud infrastructure continues evolving rapidly, with several trends poised to reshape the landscape in the coming years.

AI-Optimized Infrastructure

Cloud infrastructure is evolving to support AI-first workloads.

Providers now offer specialized accelerators, optimized networking, integrated ML platforms, and efficient inference engines.

Future platforms will make AI a core capability, simplifying development and deployment without requiring deep infrastructure expertise.

Edge Computing Growth

Edge computing is growing with demand from 5G, IoT, autonomous systems, and real-time analytics.

Cloud providers are extending infrastructure to the edge, enabling low-latency applications while maintaining centralized cloud management.

This hybrid model unlocks use cases not possible with cloud-only architectures.

Autonomous Cloud Operations (AIOps)

AI-driven operations (AIOps) automate cloud management with minimal human intervention.

They predict demand, auto-scale resources, detect and fix issues proactively, and optimize costs continuously.

This shifts operations from reactive to proactive, allowing teams to focus on strategy while AI handles execution.

Sustainable Cloud (Green Cloud)

Environmental sustainability is now a key focus in cloud computing.

Providers are investing in renewable-powered data centers, efficient hardware, carbon offset programs, and tools to track environmental impact.

Organizations increasingly factor sustainability into cloud decisions, optimizing not just for cost and performance but also carbon footprint.

Conclusion

Cloud infrastructure is no longer just IT; it’s a strategic driver of business growth.

From on-premise systems to distributed, AI-ready environments, it now powers innovation, scalability, and global reach.

Organizations that succeed will treat cloud as a platform for transformation, embracing cloud-native design, strong security, and continuous cost optimization.

While challenges like security, cost control, and complexity remain, the right strategy balances agility with governance.

Ultimately, cloud infrastructure defines how businesses compete in a digital-first world. Those who get it right won’t just keep up; they’ll lead.

FAQ

What is the difference between cloud infrastructure and cloud computing?

Cloud infrastructure refers to the hardware and software components – servers, storage, networking, and virtualization – that power cloud environments.

Cloud computing is the delivery of services like applications, platforms, and software over the internet using that infrastructure.

How much does cloud infrastructure cost?

Cloud costs vary widely, from a few hundred dollars for small apps to millions for large enterprises.

With pay-as-you-go pricing, costs scale with usage, eliminating large upfront investments.

Is cloud infrastructure more secure than on-premises?

Cloud can be more secure than on-premises when properly configured, thanks to strong provider controls and compliance certifications.

However, under the shared responsibility model, organizations must manage configurations, access, and data protection to prevent risks.

What skills are needed to manage cloud infrastructure?

Cloud infrastructure requires knowledge of networking, distributed systems, infrastructure-as-code tools like Terraform, container and orchestration technologies, security and compliance practices, cost optimization (FinOps), and cloud provider services. Certifications from AWS, Azure, or GCP help validate these skills.

How long does cloud migration take?

Cloud migrations can take weeks for simple apps or years for complex systems.

Timelines depend on complexity, data volume, refactoring, and compliance needs. Phased migrations often deliver value faster than big-bang approaches.

Can I avoid vendor lock-in with cloud infrastructure?

Avoiding lock-in completely is difficult, but risks can be reduced with multi-cloud strategies, abstraction layers, and portable tools like Kubernetes.

Limiting reliance on proprietary services and using containerization improves portability, though it may restrict access to provider-specific innovations.

What is the shared responsibility model?

The shared responsibility model splits security between providers and customers.

Providers secure infrastructure, networking, and managed services, while customers protect their applications, data, access, encryption, and configurations.

Responsibilities vary depending on the service type.

How do I optimize cloud infrastructure costs?

Cloud cost optimization involves rightsizing resources, using auto-scaling, reserving instances for predictable workloads, removing unused resources, and optimizing storage and data transfer. FinOps practices and regular reviews help maintain cost visibility and achieve ongoing savings.

What is Infrastructure as Code, and why does it matter?

Infrastructure as Code (IaC) defines infrastructure using code that can be version-controlled, tested, and automated. It enables consistent provisioning, reduces manual errors, improves disaster recovery, and provides clear audit trails for infrastructure changes.

Should my organization use public, private, or hybrid cloud?

The best approach depends on specific needs. Public cloud offers agility and global scalability, private cloud supports strict compliance and predictable workloads, and hybrid cloud balances control with flexibility. Most large enterprises adopt hybrid or multi-cloud strategies.