
Vendors oversell most AI integrations and underdeliver on them. They sell you a flawless demo. The reality is usually a brittle, disconnected system.

According to IBM’s Global AI Adoption Index, 42% of enterprise-scale organizations have AI actively deployed, and another 40% are testing or exploring, meaning over 80% of large enterprises are now somewhere in the AI journey. Yet according to MIT’s GenAI Divide report, based on analysis of 300 enterprise AI deployments, 95% of generative AI pilots fail to deliver measurable P&L impact.

The core issue is not bad models. It is flawed integration. You do not need another vendor list. You need a decision-making roadmap.

This guide helps technical leaders evaluate partners and push projects into production.

What AI Integration Services Actually Mean in 2026 (Beyond the Definition)

The old definition vs. the 2026 reality

Two years ago, AI integration meant connecting a single model to your existing systems. That old framing is dead. The 2026 reality requires orchestrating models, tools, data pipelines, and autonomous agents across your entire stack. The model itself is no longer the differentiator. System-level architecture is what actually matters. If you evaluate vendors based purely on their chosen model, you will build a fragile system.

The three integration layers every enterprise needs

A functioning AI system requires three distinct architecture layers.

- Layer 1 – Data layer: This includes your data pipelines, RAG implementations, and vector databases.

- Layer 2 – Model layer: This covers your core LLMs, fine-tuned models, and domain-specific AI.

- Layer 3 – Agent layer: This involves agentic workflows, MCP-based tool connections, and agent-to-agent coordination.

Most vendors only sell one or two of these layers. A complete integration partner covers all three to ensure stability.

What AI integration is NOT

- It is not a one-time project you can abandon after launch.

- It is not the same as buying a plug-and-play SaaS AI tool.

- It is not a replacement for basic data readiness.

- It is not a magic system that runs on autopilot after deployment.

The 5 Types of AI Integration Services, and When You Need Each One

Custom LLM Integration

What it is: Embedding a large language model (LLM) into your product, workflow, or internal tooling via API or SDK. An LLM is a foundational AI model. Researchers train these models on massive text datasets so they can understand and generate human language.

When you need it: You want a reasoning layer on top of your existing stack without rebuilding your entire architecture.

Red flag: Run from vendors who recommend a specific LLM before they understand your actual use case.

RAG Pipeline Development

What it is: Connecting an LLM to your proprietary data using a retrieval architecture. Retrieval-Augmented Generation (RAG) ensures the model answers from your specific documents, not its general training data.

When you need it: Internal documents, private databases, or institutional knowledge hold your competitive advantage.

Link: See how we built this successfully in our RAG Development Case Study.
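To make the retrieval step concrete, here is a minimal sketch of the idea behind a RAG pipeline. It is an illustration under stated assumptions, not a production design: it swaps the vector database and embedding model for a simple bag-of-words cosine similarity from the Python standard library, and the `DOCS` corpus, function names, and prompt wording are all hypothetical.

```python
import math
import re
from collections import Counter

# Hypothetical stand-in for a proprietary document store.
DOCS = {
    "refund-policy": "Refunds are issued within 14 days of purchase with a receipt.",
    "shipping": "Standard shipping takes five business days.",
    "warranty": "Hardware warranty covers defects for two years.",
}

def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0

def retrieve(query: str, documents: dict[str, str], k: int = 2) -> list[str]:
    """Return the ids of the k documents most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(
        documents,
        key=lambda doc_id: cosine(q, Counter(tokenize(documents[doc_id]))),
        reverse=True,
    )
    return ranked[:k]

def build_prompt(query: str, documents: dict[str, str], k: int = 2) -> str:
    """Assemble a prompt that grounds the model in the retrieved passages."""
    context = "\n".join(f"[{i}] {documents[i]}" for i in retrieve(query, documents, k))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )

if __name__ == "__main__":
    print(build_prompt("How many days do I have to request a refund?", DOCS, k=1))
```

A real pipeline would replace `cosine` with embedding similarity and `DOCS` with an indexed store, but the flow stays the same: retrieve first, then prompt the model with only what was retrieved.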

Agentic AI Workflow Integration

What it is: Deploying autonomous software agents that can reason, plan, and take action across multiple tools. Agentic AI executes multi-step workflows without requiring human intervention at every single step.

When you need it: You need end-to-end process automation, not just isolated point solutions.

Note: Production-grade tool connectivity requires a Model Context Protocol (MCP) architecture. MCP standardizes how agents securely interact with your internal data and external tools.
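As a rough sketch of what "without human intervention at every single step" can look like, the example below runs a multi-step plan across a tool registry and pauses only on steps flagged as risky. It makes several assumptions: the plan is hard-coded where a real agent would have a model generate it, the tools are plain Python functions standing in for MCP-connected tools, and every name (`Tool`, `run_plan`, `TOOLS`) is hypothetical.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass(frozen=True)
class Tool:
    name: str
    run: Callable[[str], str]
    requires_approval: bool = False  # gate side-effecting tools behind a human

# Hypothetical tools; real ones would call your internal systems.
TOOLS = {
    "lookup_order": Tool(
        "lookup_order", lambda order_id: f"order {order_id} is delivered"
    ),
    "issue_refund": Tool(
        "issue_refund",
        lambda order_id: f"refund issued for {order_id}",
        requires_approval=True,
    ),
}

def run_plan(
    plan: list[tuple[str, str]],
    tools: dict[str, Tool],
    approve: Callable[[str, str], bool],
) -> list[str]:
    """Execute each (tool_name, argument) step, asking for approval only when flagged."""
    log = []
    for tool_name, arg in plan:
        tool = tools[tool_name]
        if tool.requires_approval and not approve(tool_name, arg):
            log.append(f"{tool_name}: skipped (approval denied)")
            continue
        log.append(f"{tool_name}: {tool.run(arg)}")
    return log

if __name__ == "__main__":
    plan = [("lookup_order", "A-17"), ("issue_refund", "A-17")]
    # The approver here always says yes; in practice this would prompt a person.
    for line in run_plan(plan, TOOLS, approve=lambda name, arg: True):
        print(line)
```

The design choice worth noting is that approval is a property of the tool, not of the workflow, so read-only steps run unattended while anything that changes state can be held for review.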

System and API Integration

What it is: Connecting AI models to your existing CRM, ERP, HRIS, or legacy databases via secure APIs.

When you need it: You have functional AI capabilities, but they sit siloed away from the actual systems where your teams work.

The test: If your new AI output requires an employee to manually copy-paste it somewhere else, this integration layer is missing.

MLOps and Monitoring

What it is: The operational infrastructure for maintaining, updating, and monitoring AI models after deployment. MLOps applies DevOps principles to machine learning to ensure long-term model reliability.

When you need it: Your production models are starting to drift, or you want to prevent performance degradation before it happens.

Key tools: MLflow, Weights & Biases, DeepEval, LangSmith.

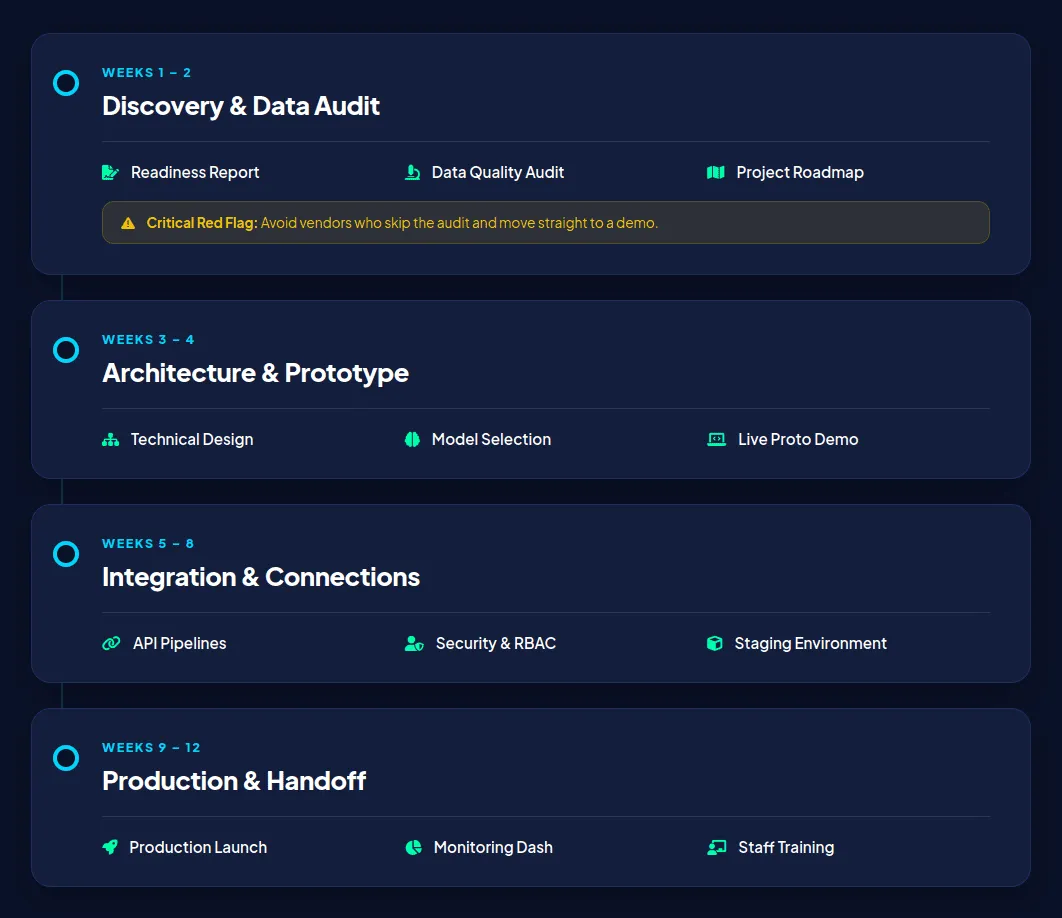

The Honest AI Integration Timeline: What to Expect Week by Week

Timelines vary by scope, but the deployment phases remain consistent across all serious integration partners.

Weeks 1–2 – Discovery, Data Audit, and Readiness Assessment

What happens: The vendor conducts stakeholder interviews, maps your current technology stack, assesses your data quality, and prioritizes use cases.

What you should receive: A written readiness report and a recommended project scope.

Red flag: A vendor who skips this vital assessment and moves straight to a demo is reckless.

Weeks 3–4 – Architecture Design and Prototype Delivery

What happens: The vendor drafts an integration architecture document and justifies their model selection. They build a working prototype in a controlled environment.

What you should receive: A technical architecture document and a live demo of the prototype running against your actual data.

Weeks 5–8 – Integration Build and Systems Connection

What happens: Engineers establish API connections, build your RAG pipeline, and configure your agent workflows. They also set up Role-Based Access Control (RBAC) and security safeguards.

What you should receive: Weekly progress updates, integration test results, and a functional staging environment.

Weeks 9–12 – Production Deployment, Monitoring Setup, and Handoff

What happens: The team executes the production launch, activates monitoring dashboards, and conducts staff training.

What you should receive: Uptime SLA documentation, an incident response protocol, and a post-launch review meeting.

Ongoing – MLOps, Drift Detection, and Retraining Cadence

What happens: Real partners conduct monthly model performance reviews, quarterly retraining assessments, and continuous security audits. A bad vendor simply leaves you with the code and zero ongoing support.

⚠️ Warning Signs: 5 Red Flags Your AI Integration Is Off Track

- No architecture document after Week 2.

- The demo only ever uses synthetic data, never yours.

- Security and compliance discussions keep being deferred.

- No post-launch monitoring plan exists in the contract.

- Milestones keep shifting without written change orders.

How to Choose an AI Integration Company: The 6 Questions That Actually Matter

The goal of this section is to give you six specific questions that a bad vendor cannot answer well.

Q1: Can you show me a live production deployment, not a demo?

What a good answer looks like: They show you a working system producing real business value.

What deflection sounds like: “Our clients prefer confidentiality on specifics.” If they cannot offer even an anonymized walkthrough or a reference call, this usually means they lack production wins.

Q2: How do you handle model drift after launch?

MLOps maturity means actively tracking when a model’s accuracy degrades. Minimum acceptable answer: Your contract must define a monitoring cadence, specific drift thresholds, and a clear retraining protocol.

Q3: How is data security managed in your integration architecture?

What to look for: Read-only agent defaults, RBAC, AES-256 encryption, and clear audit trails. The MCP authentication question: Do you enforce OAuth 2.0 with short-lived tokens on your agentic stacks? If they hesitate, your data is at risk.

Q4: Do you work within our existing stack or propose replacing it?

A layering approach demonstrates a true partner mentality. A proposal to rebuild your entire stack is a massive red flag and forces a significantly larger budget conversation.

Q5: Which LLMs have you deployed in production, and how do you prevent vendor lock-in?

Good answer: Documented experience orchestrating multiple models like GPT-4, Claude, Gemini, or open-source options. Good practice: They build a model-agnostic architecture so you can switch providers later, and for models you host or fine-tune yourself they offer a portable format such as ONNX export.
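One common way to keep an architecture model-agnostic is to have application code depend on a small interface rather than on any one provider's SDK. The sketch below assumes a hypothetical `ChatModel` interface and uses stub adapters instead of real vendor clients, so no actual provider API is shown.

```python
from typing import Protocol

class ChatModel(Protocol):
    """The only surface the application is allowed to depend on."""

    def complete(self, prompt: str) -> str: ...

class StubModel:
    """Placeholder adapter; a real one would wrap a provider's client library."""

    def __init__(self, label: str) -> None:
        self.label = label

    def complete(self, prompt: str) -> str:
        return f"[{self.label}] {prompt}"

# Hypothetical registry keyed by a config value, for example an environment variable.
MODELS: dict[str, ChatModel] = {
    "vendor_a": StubModel("vendor_a"),
    "vendor_b": StubModel("vendor_b"),
}

def get_model(name: str) -> ChatModel:
    """Look up the configured model, failing loudly on an unknown name."""
    try:
        return MODELS[name]
    except KeyError:
        raise ValueError(f"unknown model: {name}") from None

def summarize(model: ChatModel, text: str) -> str:
    """Business logic that never mentions a specific provider."""
    return model.complete(f"Summarize: {text}")

if __name__ == "__main__":
    for name in ("vendor_a", "vendor_b"):
        print(summarize(get_model(name), "Q3 incident report"))
```

Switching providers then means writing one new adapter and changing a config value, while `summarize` and everything built on it stays untouched.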

Q6: What does your post-launch support model look like?

What to demand: Defined SLAs, named escalation contacts, and monthly performance reviews. The tell: If they hesitate or say “we will figure that out after launch,” walk away immediately.

HyScaler documents detailed answers to all six of these critical questions before you ever sign a contract. Contact our team to schedule a consultation and evaluate your AI readiness today.

The 5 Most Common AI Integration Failures, and How to Prevent Them

According to the RAND Corporation, more than 80% of AI projects fail, at twice the rate of standard IT projects. Most fail not because of bad models, but because of bad integration decisions.

Failure #1: Integrating Before the Data Is Ready

What happens: AI connects to messy, incomplete, or siloed data and produces unreliable outputs.

Prevention: Make a data audit mandatory in week one. If a vendor skips this step, that is your first red flag.

Link: See how we handled on-premise, GDPR-compliant architecture in our RAG Development Case Study.

Failure #2: Choosing the Framework Before Defining the Use Case

What happens: Teams fall in love with frameworks like LangChain or CrewAI. They then reverse-engineer a use case just to justify using that technology.

Prevention: You must define your use case and success metrics before making any architecture decisions.

Failure #3: No MLOps Plan, Deploying With No Monitoring

What happens: The model works flawlessly at launch. It then silently degrades over 3–6 months as real-world data drifts from training data.

Prevention: Agree on monitoring dashboards, drift thresholds, and retraining cadences before go-live.
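A drift threshold only helps if it is tied to a number someone computes. One widely used measure is the Population Stability Index (PSI), which compares the distribution of a feature at training time with what the model sees in production. The sketch below is a minimal standard-library version; the bin count, the smoothing constant, and the 0.25 alert level are assumptions (0.25 is a common rule of thumb, not a universal standard), and it expects non-empty inputs.

```python
import math

def psi(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline sample and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width when the baseline is constant

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        eps = 1e-6  # smoothing so empty bins do not produce log(0)
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

ALERT_THRESHOLD = 0.25  # assumed rule of thumb; set yours in the monitoring plan

def needs_review(expected: list[float], actual: list[float]) -> bool:
    """True when drift exceeds the agreed threshold."""
    return psi(expected, actual) > ALERT_THRESHOLD

if __name__ == "__main__":
    baseline = [i / 100 for i in range(100)]        # training-time feature values
    shifted = [0.5 + i / 200 for i in range(100)]   # production values, skewed high
    print("stable:", needs_review(baseline, baseline))
    print("shifted:", needs_review(baseline, shifted))
```

Running a check like this on a schedule, per feature, turns "the model silently degrades" into an alert with a date on it.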

Link: Read our insights on Why Enterprise AI Projects Actually Fail.

Failure #4: The MCP Authentication Gap in Agentic Stacks

What happens: Agentic systems are often deployed without OAuth 2.0 enforcement, short-lived tokens, or sender constraints. This creates massive unauthenticated attack surfaces.

Prevention: Treat every Model Context Protocol (MCP) server as critically exposed infrastructure. Enforce strict authentication protocols from day one.

Note: This is the most underreported production risk in 2026 agentic deployments.

Failure #5: Treating AI Integration as a One-Time Project

What happens: The model launches, the vendor exits, and nobody owns ongoing performance. AI outputs degrade, stakeholder trust erodes, and the initiative quietly dies.

Prevention: Agree on an MLOps retainer and defined post-launch ownership before signing a contract.

AI Integration by Industry: What Good Looks Like in Your Sector

Healthcare

Key requirements: EHR/EMR integration, HIPAA compliance, ambient clinical documentation, and algorithmic bias mitigation.

What good looks like: AI layers over existing clinical systems without disrupting workflows. It should not be an entirely new platform that forces retraining.

HyScaler proof: Explore our Bridging Legacy Medical Equipment with Modern EHR Systems IoT case study and our Specialty Clinic EMR Development Service case study.

Finance and Banking

Key requirements: AML monitoring, automated underwriting, cross-border payment reconciliation, and transatlantic data privacy compliance.

What good looks like: High-volume transaction processing requires full audit trails and real-time compliance flagging.

Manufacturing

Key requirements: Predictive maintenance using sensor data, computer-vision quality control, and equipment-failure forecasting.

What good looks like: AI connects directly to operational systems. It should not be a separate dashboard that nobody checks.

Logistics and Supply Chain

Key requirements: Route optimization, demand sensing, inventory management, and carbon footprint tracking.

What good looks like: Dynamic rerouting agents respond to real-time data. Avoid static rule-based automation that cannot handle sudden supply chain disruptions.

Retail and E-Commerce

Key requirements: Personalization at scale, inventory automation, AI-powered customer support, and returns prediction. See how we achieved a 50% traffic boost in our E-commerce Platform Modernization: HyScaler’s 50% Traffic Boost with React, GraphQL, and AI/ML case study.

What AI Integration Services Cost in 2026, Real Budget Ranges

Cost varies significantly by project scope. The ranges below reflect real project budgets, not marketing estimates.

Discovery and Strategy Engagements

- Range: $5,000–$20,000

- What you get: Readiness assessment, use case prioritization, architecture recommendation, and a clear ROI model.

- Duration: 2–3 weeks.

MVP and Prototype Builds

- Range: $20,000–$75,000

- What you get: A working integration prototype tested against real data. You also receive a technical architecture document and integration test results.

- Duration: 4–6 weeks.

Full Production Integrations

- Range: $75,000–$250,000+

- What you get: A production-grade deployment with full systems integration, including security setup, staff training, and comprehensive system documentation.

- Duration: 8–16 weeks, depending on overall complexity.

Ongoing MLOps and Support Retainers

- Range: $3,000–$15,000/month

- What you get: Active monitoring, drift detection, model updates, performance reporting, and incident response.

- Note: This is non-negotiable for production systems. You must factor this into your Year 1 budget from day one.

| Phase | Cost Range | Duration | Deliverables |
| --- | --- | --- | --- |
| Discovery & Strategy | $5K–$20K | 2–3 weeks | Readiness report, ROI model |
| MVP / Prototype | $20K–$75K | 4–6 weeks | Working prototype, architecture doc |
| Full Production | $75K–$250K+ | 8–16 weeks | Deployed system, security setup, training |
| MLOps Retainer | $3K–$15K/mo | Ongoing | Monitoring, drift detection, updates |
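To see what factoring the retainer into Year 1 does to the total, here is a small arithmetic sketch using the ranges above. It assumes the three build phases are purchased in sequence and simply added together, and that the retainer runs for whatever portion of the year follows launch; both are simplifications, and the top of the production range is open-ended ("$250K+"), so the upper figure is a floor for the high end rather than a cap.

```python
# Ranges in USD, taken from the budget sections above.
PHASES = {
    "discovery": (5_000, 20_000),
    "mvp": (20_000, 75_000),
    "production": (75_000, 250_000),  # source lists this upper bound as "250K+"
}
RETAINER_PER_MONTH = (3_000, 15_000)

def year_one_budget(retainer_months: int) -> tuple[int, int]:
    """Return (low, high) Year 1 totals for all phases plus the retainer."""
    low = sum(lo for lo, _ in PHASES.values()) + RETAINER_PER_MONTH[0] * retainer_months
    high = sum(hi for _, hi in PHASES.values()) + RETAINER_PER_MONTH[1] * retainer_months
    return low, high

if __name__ == "__main__":
    # Assumed scenario: launch after roughly three months, retainer for the remaining nine.
    low, high = year_one_budget(retainer_months=9)
    print(f"Year 1 range: ${low:,} to ${high:,}")  # Year 1 range: $127,000 to $480,000
```

Under that nine-month assumption the retainer adds $27,000 to $135,000 on top of the build cost, which is why leaving it out of the initial budget distorts the ROI model.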

What Drives Costs Up, and How to Scope to Control Them

- Data readiness gaps remain the most common hidden cost multiplier.

- Legacy system complexity: Outdated infrastructure requires expensive, custom API wrappers.

- Compliance requirements: Strict regulatory certifications extend project timelines and security testing.

- Model variety: Scoping a high number of distinct LLMs and agent types increases infrastructure demands.

- Post-launch support: Intensive SLA models require dedicated, round-the-clock engineering resources.

⚠️ Red flag pricing patterns to watch for:

- Fixed-price quotes with no discovery phase: The vendor is just guessing.

- No line item for monitoring and MLOps: You will inevitably pay for it later.

- Retainer-only pricing with no milestone structure: This guarantees zero accountability.

How HyScaler Approaches AI Integration, From First Call to Production

HyScaler is a CMMI Level 3 and ISO 9001 certified technology partner. We bring 150+ engineers and 10+ years of experience to your projects. We deliver full-lifecycle AI integration across healthcare, finance, and manufacturing sectors.

We mirror the timeline above to ensure predictable delivery. We deliver clear architectural blueprints and ROI models during the discovery phase. We build functional, data-backed prototypes in the MVP phase. Finally, we deploy secure, monitored AI systems into your live production environment.

Real results from our deployments:

- We modernized clinical workflows in our Specialty Clinic EMR Development Service.

- We secured sensitive documents rapidly in our RAG Development Case Study.

- We drove medical productivity in our Comprehensive EHR Solution.

Frequently Asked Questions

Before you engage any AI integration partner, including us, here are the questions we hear most often from technical leaders evaluating their options.

What is the difference between AI integration and AI development?

AI development means building new models from scratch. AI integration means connecting AI models to your existing systems and workflows.

How long does AI integration typically take?

An MVP prototype takes 4–6 weeks. Full production deployments take 8–16 weeks, depending on system complexity and your data readiness.

Do I need to replace my existing systems to integrate AI?

No. Production-grade integration layers AI over your current stack using secure APIs and reliable data pipelines.

What is the difference between RAG and fine-tuning, and which do I need?

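RAG retrieves dynamic, up-to-date information from your private data. Fine-tuning adjusts the model’s overall style, tone, or specific domain terminology. Choose RAG when answers depend on documents that change; choose fine-tuning when the model needs to sound or behave consistently in your domain. Many production systems use both.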

How do I know if my data is ready for AI integration?

Your data must pass three tests. Is it accessible via API or a structured format? Is it clean and consistently formatted? Is it governed with clear access controls?

What is an agentic AI integration, and do I need one in 2026?

Agentic AI reasons, plans, and takes actions across multiple tools automatically. It executes workflows without requiring human intervention at every step. You need it when your use case spans more than one system or decision point.

What security risks should I watch for in AI integration projects?

Watch for MCP authentication gaps and over-permissioned autonomous agents. Also, avoid missing audit trails and static API credentials that are never rotated.

How much does enterprise AI integration cost?

Discovery costs $5K–$20K. MVP prototypes run $20K–$75K. Full production costs $75K–$250K+. You must also plan for an MLOps retainer of $3K–$15K per month.

Can small businesses afford AI integration services?

Yes. Cloud-based platforms and open-source tools make modern AI integration cost-effective for smaller organizations. You avoid massive upfront costs by starting with a focused proof-of-concept that takes four to eight weeks. Target projects that reduce operational friction and automate manual tasks to give your integration the best chance of a rapid return on investment.

What is the difference between AI integration and AI consulting?

AI consulting evaluates your data readiness and defines the strategic goals, governance policies, and guardrails for your project.

AI integration is the technical execution that embeds machine learning or generative AI models directly into your existing enterprise frameworks.

Consulting delivers the strategic roadmap, while integration connects the technology to your daily business workflows and enterprise systems.

Conclusion

AI integration is not a product you buy; it is an operational capability you build with the right partner.

Use this checklist to separate the serious engineers from the demo-builders before you commit your budget.

The 6-Question Vendor Evaluation Checklist

- Q1: Can you show me a live production deployment, not a demo?

- Q2: How do you handle model drift after launch?

- Q3: How is data security managed in your integration architecture?

- Q4: Do you work within our existing stack or propose replacing it?

- Q5: Which LLMs have you deployed in production, and how do you prevent vendor lock-in?

- Q6: What does your post-launch support model look like?

The organizations that win in 2026 are not the ones running the most pilots. They are the ones who know how to push one project all the way to production and build from there. Partner with a team that engineers for scale, security, and measurable business value.

Explore our Artificial Intelligence Services to see our full capabilities, or schedule a consultation to discuss your integration roadmap today.